How Secure are Apache & IIS Web Servers after Vulnerability Testing?

Info: 10610 words (42 pages) Dissertation

Published: 15th Nov 2021


Contents

Introduction

Dissertation Background

Software Implementation

Review – Aims and Objectives

Progress to Date

Tools & technology:

Objectives

Motivation

Codes and Policies

Literature Review

Introduction

Background

Open Source and Proprietary Software

Web Technologies

Business Logic

Data Access Logic

Modules in Apache

Kestrel

Microsoft Windows and UNIX

Current Technologies

Apache HTTP Server 2.4

IIS 10.0 Express

Gopher

WAIS

Introduction

Systematic Approach

Introduction

Security

Web Applications

Web Application Scanner

Description of the Problem Domain

Methodology

IIS

Apache

Results Analysis

Sampling and Data Collection

Exploitation & Post-Exploitation

Intelligence Gathering

Reporting

Threat Modelling

Qualitative Approach

Design

Development / Implementation

Testing

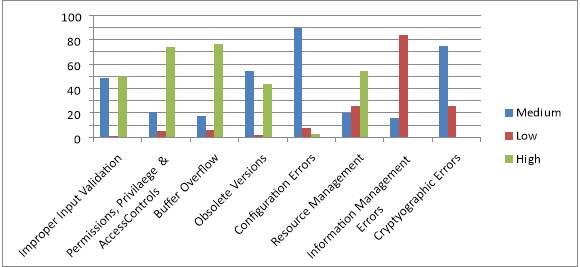

Results by level of severity

Figure 4. Representation of categories of vulnerabilities by level of severity

Conclusion

Bibliography

References

Appendices

Introduction

Kumar (2015) argues that the web server plays a crucial role within web-based applications. Because the Apache web server is often placed at the edge of the network, it is one of the services most vulnerable to attack. A default configuration discloses a good deal of sensitive information, which may help an attacker prepare an attack on the web server.
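The point about default configurations disclosing information can be illustrated with a minimal Python sketch. It assumes the response headers have already been collected into a dictionary; the function name `disclosed_versions`, the list of header names and the sample headers are hypothetical and written by hand for illustration, not taken from any scan in this dissertation.

```python
import re

# Header names that commonly reveal software and version details (an assumed, non-exhaustive list).
REVEALING_HEADERS = ("Server", "X-Powered-By", "X-AspNet-Version")


def disclosed_versions(headers):
    """Return {header: value} for revealing headers whose value contains a version number."""
    lowered = {name.lower(): value for name, value in headers.items()}
    found = {}
    for name in REVEALING_HEADERS:
        value = lowered.get(name.lower())
        # A dotted number such as 2.4.18 is treated as a disclosed version.
        if value and re.search(r"\d+(\.\d+)+", value):
            found[name] = value
    return found


# Hypothetical headers: a default-style banner and a reduced one.
default_style = {"Server": "Apache/2.4.18 (Ubuntu)", "Content-Type": "text/html"}
reduced = {"Server": "Apache", "Content-Type": "text/html"}

print(disclosed_versions(default_style))
print(disclosed_versions(reduced))
```

In Apache, the amount of detail placed in the `Server` header is governed by the `ServerTokens` directive, so a check of this kind is one simple way to confirm that a default banner has been reduced.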

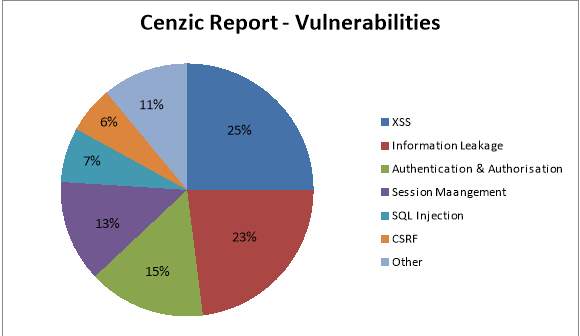

The majority of web application attacks come through XSS, information leakage, session management and PHP injection, which result from weak programming code and a failure to sanitize input to the web application infrastructure. According to the security vendor Cenzic, 96% of tested applications have vulnerabilities. The accompanying chart from Cenzic shows the trend in the types of vulnerability found.

Dissertation Background

The purpose of this dissertation is to take a methodical approach to the security issues surrounding web servers and, by targeting their vulnerabilities over the internet, to gain valuable knowledge of ‘How Secure are Apache and IIS web servers’ after vulnerability testing.

Paquet (2013) regards the Internet as open in nature and urges businesses to pay attention to the security of their networks: “Companies need to move their business functions to the public network by ensuring that the data cannot be compromised and that the data is not accessible to anyone who is not authorized”. Note that a vulnerability assessment is different from a risk assessment. Miessler (2015) states that a vulnerability assessment is a technical assessment designed to yield as many vulnerabilities as possible in an environment, along with severity and remediation priority information. Risk assessments, on the other hand, like threat models, are complicated because of how they are understood and how they are carried out. A risk assessment at the highest level should involve determining the level of acceptable risk, measuring the current risk level, and then identifying any mismatches between the two.

Software Implementation

Apache is an open source software implementation of a web server. The implementation is responsible for all procedures concerned with the HTTP server, which is the most popular one in operational use today.

However, Schaefer (2016) declares that the most current release of IIS is IIS 7.0. Internet Information Services (IIS) is described as a configurable application platform that performs tasks within the operating system and is used to host and provide Internet-based services to ASP.NET and ASP web applications. When a request comes from a client, IIS receives it, processes it and sends a response back to the user; it has its own ASP.NET process engine to handle ASP.NET requests. The way you configure an ASP.NET application depends on which version of IIS the application is running on.

Helmke (2014), on the other hand, focuses on Apache, where hosts can be supported dynamically through mod_vhost_alias. The mod_vhost_alias module provides dynamically configured virtual hosting, which is vital to Internet service providers (ISPs) serving multiple hosts. He also states that Apache's built-in virtual hosting can be very effective within the web server. Schaefer (2016) says that a web server is responsible for providing a response to requests that come from users. An HTTP server uses the basic application services and can also provide additional internet services, such as Gopher and WAIS.

Aims and Objectives

The project aims to determine whether penetration testing tools downloaded from third parties can be used, with the fewest issues, to test two web servers, Apache and IIS. The idea is to use reconnaissance techniques from tools recommended by white hat hackers and from case studies researched for this type of project.

Any tests carried out should verify whether an attacker with malicious intent could exploit the servers. The objectives are as follows:

- Perform broad scans to assess areas of exposure and services through entry points

- Perform targeted scans and attempt to exploit the vulnerabilities found

- Rank vulnerabilities based on threat level

- Identify the consequences and recommend solutions

- Test identified components to gain access to:

On the current state of software security, Curphey (2016) states that the tools available for finding weaknesses fall into four categories of application assessment tools: source code analysers, web application scanners, database scanners and binary analysis tools. The most commonly used are source code analysers and web application scanners.

A web application vulnerability scanner has the added benefit of recording how the tool goes about targeting web-based attacks. Unfortunately, Maynor (2012) implies that typical scanners rely on third-party components that scan for a vulnerability and then attempt to exploit it, which can affect the system being verified. Launching an exploit can cause reliability concerns, so the conditions that must be met should be evaluated first. A web application vulnerability scanner is the tool used to generate reports for this dissertation, because assessments need to be repeated as improvements are made. Using this tool should indicate the best ways to reduce security threats.

Progress to Date

Apart from establishing viable sources and contacts, one approach is to scan the environment with network scanning tools and to generate reports against the collected assessment data, which is critical to a vulnerability assessment program. Providing the right data to the right people is the key to a successful effort, and the scan reports that will be produced serve this purpose. Reporting allows you to filter and customize the vulnerability details for a particular scan or set of scans. Rutherford (2017) has indicated that the choice of server very much depends on what you are trying to achieve. If you have Windows servers available, then using IIS makes more sense, as it is natively supported and has added features for running a website from a Windows server compared with Apache. If, on the other hand, you have a Linux server, then Apache is the clear choice. Other questions to ask when making the decision concern the programming language and authentication. If ASP.NET is used, IIS benefits from native support for that language. For authentication, IIS has Windows integrated authentication, which can handle user authentication against Active Directory (AD) quite easily.

Tools & technology

Tools & technology used:

Acunetix, Nessus, Metasploit and Nmap

Apache & IIS web server software

Testing Environment: LITS Network (College)

Objectives

The objectives are to produce a comprehensive view of all operating systems and services running and available on the network, and to detect misconfigured devices on the network, such as a misconfigured web server or firewall. The scans should also detect critical vulnerabilities, such as vulnerable web servers in the network.
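As a rough illustration of what discovering "services running and available" means at the lowest level, the following Python sketch attempts a plain TCP connection to each port in a list. It is not how Nmap or Nessus work internally; the function name `open_tcp_ports` and the timeout value are assumptions made for this example, and such a check should only ever be run against hosts you are authorised to test.

```python
import socket


def open_tcp_ports(host, ports, timeout=0.5):
    """Return the ports from `ports` that accept a TCP connection on `host`."""
    reachable = []
    for port in ports:
        try:
            # A completed TCP handshake means something is listening on the port.
            with socket.create_connection((host, port), timeout=timeout):
                reachable.append(port)
        except OSError:
            # Closed, filtered or unreachable: treat all three as "not open".
            pass
    return reachable


if __name__ == "__main__":
    # Check the usual web server ports on the local machine only.
    print(open_tcp_ports("127.0.0.1", [80, 443, 8080]))
```

A dedicated scanner adds service and version detection on top of this basic reachability test, which is why the project relies on established tools rather than hand-written checks.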

Motivation

Jhala (2014) states that a computer system is more than hardware and software, because it also incorporates policies and procedures, the majority of which go unused. Security holes can emerge from numerous areas. Once an attacker has found a way into a computing system, he takes advantage of lapses in security, whether failures of the system, the technology or its management, to gain unauthorized access; a vulnerability exploited in this way violates the security policy. The objectives above are vital because they protect network devices and reduce the vulnerability of applications and end-systems to attacks originating from the network. These measures can be vital in protecting information during any transmission across a network.

This set of measures is called “network security”: the steps taken to reduce the susceptibility of a network to threats. To ensure network security, numerous open-source and commercial products are available.

Codes and Policies

Resnik (2015) has argued that codes, policies and principles are very important and useful but, like any set of rules, they do not cover every situation, they can conflict, and they require considerable interpretation. In this project they could come into conflict with the rules of the college network. Based on my reflections on the dissertation so far, it has involved meticulous research, and the case studies provide a better understanding of how to make the right choices when adhering to ethical hacking rules and regulations.

In Appendix A I have submitted a revised project plan with some minor adjustments to the tools, which should support a more advanced process for gathering the best results for my conclusion. This may change, as technology is always advancing and brings new regulations, theories, different solutions and new ways to exploit a system.

The resources I have found have been valuable, as they have given me vital knowledge for my choice of topic. The plan will need adjustments to the timescale already identified in the Task Scheduler, Gantt Chart and Critical Path Analysis in Appendix B.

On reflection, my timescale may have a bearing on my dissertation workload, given the term time I have left for studying. I would seriously have to consider which of the Apache or IIS web servers is the most vulnerable.

Therefore I will concentrate on my Literature Review, as stated previously. My proposed project now requires a more focused approach: I need to reference and point out the ways in which I can decide the critical course of action, based on the security issues one faces with web server technology. It will take a serious amount of testing to gain a clearer picture of “What is the best web server” after exploration. I have managed my workload well so far. I believe my writing style and methods are improving all the time, with a little guidance from my tutor(s).

Literature Review

Introduction

Information technologies are evolving rapidly, and information is regarded as the most important asset of the information systems that use, store and transmit data, for instance Apache or IIS web servers. There seems to be a tension between web server and information technology processes and their methodologies, because of the need to keep information confidential and available and to assure its integrity. Unsecured storage, access and propagation of information leave any web server vulnerable to attack by hackers, resulting in a loss of both revenue and reputation.

Background

Open Source and Proprietary Software

In terms of the web servers considered here, a key distinction is that Apache is open source software, whereas Microsoft IIS (Internet Information Services) is proprietary. The defining feature of open source software is that its source code is publicly available. This means anyone can compile, link and modify it for their own requirements.

The source code of proprietary software is protected by various mechanisms such as company confidentiality and copyright. As a result, the only way to change it is through the company that produces it. Software production, for both Apache and IIS, has four stages:

- Software design – takes the original idea through a specification to a structured design.

- Source coding – this implements the design in a high level language such as C++.

- Compilation – conversion of the high level language into the low level machine code for a specific computer processor.

- Linking – coordination of the machine code with precompiled libraries of code that have not required rewriting

Web Technologies

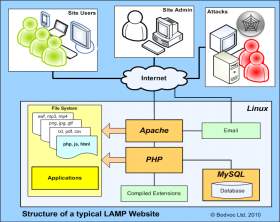

This section provides an insight into web server technologies such as Apache, which forms part of a web server solution called Linux/Apache/MySQL/PHP (LAMP). This stack is free and covers web publishing needs from the OS down to the scripting language. By contrast, Internet Information Services (IIS) is a Microsoft product that runs on the Windows OS. IIS hosts internet services and delivers them to web browsers over HTTP.

Business Logic

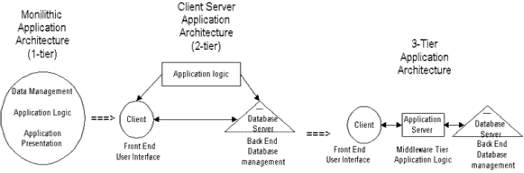

Business logic runs on what is referred to as the application server. It forms part of a back-end web server that provides the HTML pages and contains the logic of the application. It acts as a client to the database server, which provides the data access logic.

Data Access Logic

Data access logic provides the storage, retrieval and modification of data, and verifies the integrity of the data. It is typically provided by a relational database management system (RDBMS), which acts as a server to the web server. Data passes between the tiers from the web client, to the web server, to the RDBMS. A client communicates with the middle tier using standard communication protocols such as TCP/IP.
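The separation between data access logic and business logic can be sketched in a few lines of Python. This is an illustrative assumption rather than anything taken from Apache or IIS: it uses Python's built-in `sqlite3` module as a stand-in for the RDBMS, and the `accounts` table, the function names and the withdrawal rule are all hypothetical.

```python
import sqlite3


# --- Data access logic: storage, retrieval and modification only ---
def create_store():
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE accounts (name TEXT PRIMARY KEY, balance INTEGER NOT NULL)"
    )
    return conn


def get_balance(conn, name):
    row = conn.execute(
        "SELECT balance FROM accounts WHERE name = ?", (name,)
    ).fetchone()
    return None if row is None else row[0]


def set_balance(conn, name, balance):
    conn.execute(
        "INSERT OR REPLACE INTO accounts (name, balance) VALUES (?, ?)",
        (name, balance),
    )
    conn.commit()


# --- Business logic (middle tier): enforces the rules of the application ---
def withdraw(conn, name, amount):
    balance = get_balance(conn, name)
    if balance is None:
        raise KeyError(name)
    if amount <= 0 or amount > balance:
        raise ValueError("withdrawal rejected by business rule")
    set_balance(conn, name, balance - amount)
    return balance - amount


if __name__ == "__main__":
    store = create_store()
    set_balance(store, "alice", 100)
    print(withdraw(store, "alice", 30))
```

The middle tier never builds SQL itself; it only calls the data access functions, which is the property that lets the business rules stay independent of the particular RDBMS.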

The middle tier interfaces with the back-end RDBMS using standard database protocols that are independent of the RDBMS. The middle tier uses message switching and enforces the business rules of the application. It acts upon the clients’

Hodgkins (2017) states that web browsers work by connecting over the Internet, via modem or ISDN and a server or ISP, to remote machines, asking for a particular page and then formatting the documents they receive for viewing on a computer, using a protocol called HTTP (Hypertext Transfer Protocol). Lakshmiraghavan (2013) describes ASP.NET request processing as a pipeline, within which sits what Mitchell (2004) refers to as an HTTP module. HTTP modules can be configured to run in response to events raised when an ASP.NET resource is requested. An HTTP handler responds by rendering a particular resource; whenever you create an ASP.NET web page you are in effect writing an HTTP handler. This is because the HTML portion of an ASP.NET web page is dynamically compiled at run time into a class derived from System.Web.UI.Page, which is an HTTP handler implementation (Mitchell, 2014).

Modules in Apache

Modules provide a way to define the use of a scripting language so that a method, such as the full-title method, is automatically available in all views (Appendix 4).

Askit (2012) states that the following methods require the locking implementation, known as Lock(), to request information from a class of Sync-Mail-System (imp-Sync-MS) before proceeding with a message, in order to secure the threads executed by the class History-Mail. Sync-Mail overrides this by adding 14 methods with synchronisation constraints, two of which perform the Lock() and unlock techniques. In addition, the process is repeated for the individual classes Sync-Mail and Sync-Receiver using 5 methods of the interface. The interface requires certain behavioural methods to cross-cut these for multiple uses, such as handling file name extensions (Appendix 1 – Evaluation of composition data when adding synchronisation).

Microsoft (2017) notes that developers can create custom handlers that perform special handling for particular file name extensions in an application. For example, if a developer created a handler that produced RSS-formatted XML, the .rss file name extension could be bound to the custom handler. Developers can also create handlers that map to a specific file, and can implement these handlers as native modules or as implementations of the ASP.NET IHttpHandler interface.

In relation to Apache and IIS, Dykstra (2016) states that an ASP.NET Core application runs in-process with an HTTP server implementation. The server implementation listens for HTTP requests and surfaces them to the application as sets of request features composed into an HttpContext.
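The idea of binding a file name extension to a custom handler can be sketched independently of IIS. The Python below is only a conceptual illustration under stated assumptions: `HANDLERS`, `rss_handler`, `static_handler` and `dispatch` are hypothetical names, and nothing here reflects the actual IIS or ASP.NET API.

```python
import os


def rss_handler(path):
    """Hypothetical custom handler that produces RSS-formatted XML."""
    return "application/rss+xml", f"<rss><!-- feed for {path} --></rss>"


def static_handler(path):
    """Hypothetical default handler used when no extension mapping matches."""
    return "text/plain", f"static file {path}"


# The handler mapping: file name extension -> handler function.
HANDLERS = {".rss": rss_handler}


def dispatch(path):
    """Pick a handler from the extension of the requested path and run it."""
    _, extension = os.path.splitext(path)
    handler = HANDLERS.get(extension.lower(), static_handler)
    return handler(path)


if __name__ == "__main__":
    print(dispatch("/news/latest.rss"))
    print(dispatch("/index.html"))
```

From a security point of view, each extra mapping is additional code reachable from the network, which is one reason lockdown guidance tends to recommend removing mappings that are not needed.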

ASP.NET Core ships two server implementations:

- Kestrel is a cross-platform HTTP server based on libuv, a cross-platform asynchronous I/O library.

- WebListener is a Windows-only HTTP server based on the Http.Sys kernel driver.

Kestrel

Kestrel is the web server by default in ASP.NET Core templates. If your application accepts requests only from an internal network, you can use Kestrel by itself.

If you expose your application to the Internet, you must use IIS, Nginx, or Apache as a reverse proxy server. A reverse proxy server receives HTTP requests from the Internet and forwards them to Kestrel after some preliminary handling, as shown in the following diagram.
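The reverse proxy arrangement described above can be sketched with Python's standard library alone. This is a toy illustration, not a substitute for IIS, Nginx or Apache: `BackendHandler` stands in for the internal application server, the "preliminary handling" is reduced to rejecting paths containing `..`, and all class and function names are assumptions made for the example.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class QuietHandler(BaseHTTPRequestHandler):
    """Shared helpers for both servers in this sketch."""

    def reply(self, status, body):
        self.send_response(status)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the example output quiet


class BackendHandler(QuietHandler):
    """Stand-in for the internal application server (Kestrel in the text)."""

    def do_GET(self):
        self.reply(200, f"backend saw {self.path}".encode())


def make_proxy_handler(backend_host, backend_port):
    class ProxyHandler(QuietHandler):
        def do_GET(self):
            # Preliminary handling: refuse suspicious paths before forwarding.
            if ".." in self.path:
                self.reply(400, b"rejected by proxy")
                return
            upstream = http.client.HTTPConnection(backend_host, backend_port, timeout=5)
            try:
                upstream.request("GET", self.path)
                response = upstream.getresponse()
                self.reply(response.status, response.read())
            finally:
                upstream.close()

    return ProxyHandler


def start(handler):
    """Start a server on a free local port in a background thread."""
    server = HTTPServer(("127.0.0.1", 0), handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch(port, path):
    conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        return response.status, response.read()
    finally:
        conn.close()


if __name__ == "__main__":
    backend = start(BackendHandler)
    proxy = start(make_proxy_handler("127.0.0.1", backend.server_port))
    print(fetch(proxy.server_port, "/hello"))
    proxy.shutdown()
    backend.shutdown()
```

The client only ever talks to the proxy port, so the backend can stay bound to an internal address, which is the security benefit the text attributes to placing IIS, Nginx or Apache in front of Kestrel.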

APPSec (2017) argue that web services expose application interfaces to “consumer” applications, allowing applications built on a wide variety of technologies to talk with each other easily. Web services hosted on an internal network, especially those accessible to mobile applications, have been exposed to the Internet. They also suggest that securing these services is essential, and describe the following testing phases:

- Preparation – Consulting third party verifies that it has received the following information from the customer in preparation for the penetration test.

- Exploration – Consulting third party manually explores the web service to verify that all methods can be called successfully and to gain an understanding of the functionality and sensitivity of the web service. Baseline requests are established for each transaction.

- Automated Vulnerability Scanning – Commercial scanning tools are used to scan the web service. This includes an authenticated application-level scan as well as an infrastructure-level scan. Custom scripts are written if needed to supplement the scan (for example, to add a digital signature dynamically to each request).

- Manual Penetration Testing – The web service is manually tested by experienced web application security professionals using AppSec Consultant’s systematic testing process. This manual testing process covers all major aspects of web application security that would apply to a web service.

Microsoft Windows and UNIX

There are key distinctions between Microsoft IIS and Apache in their operating system backgrounds and requirements, because Microsoft IIS runs only on Windows. It works with NT 4.0, 2000, Server 2003, XP Professional and various versions of Vista. Windows is commercial software based on a graphical user interface and the windows-icons-mouse-pointer (WIMP) concept. However, as widespread commercial software, Windows has technical limitations and security problems. A good basis for a commercial grade web server is Windows XP Professional and Microsoft IIS.

Apache, by contrast, was designed to run on UNIX systems. UNIX includes software production tools by default, and these work on any version.

Fundamentally, UNIX is a text-based operating system with a number of graphical front-ends available for both end use and system administration. UNIX is well suited to production use because it has a strong security model and is more configurable than Windows. It is by and large more stable in use, and more secure. A good basis for a web server is Linux and Apache. Apache is the most popular web server software.

Current Technologies

Apache HTTP Server 2.4

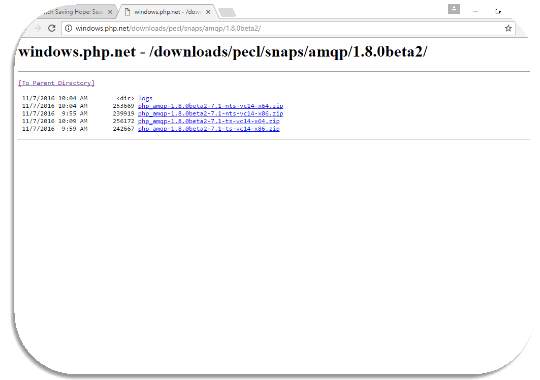

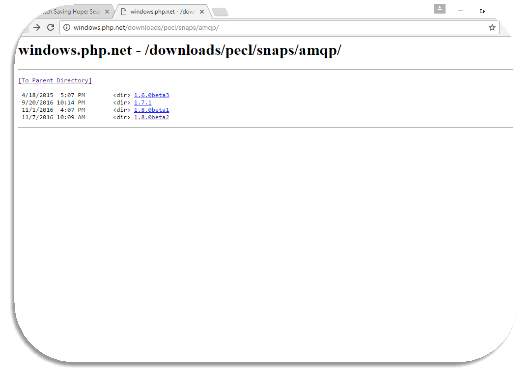

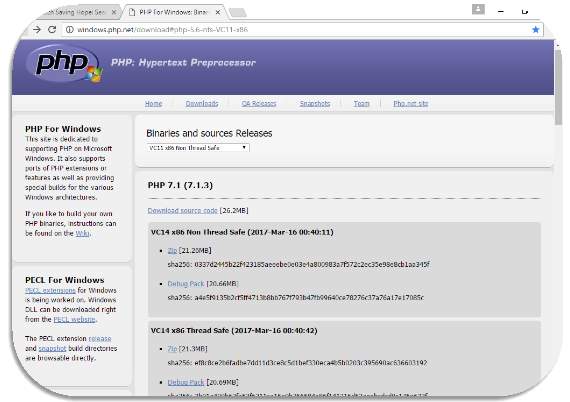

The latest HTTP server solution is described by Apache.org (2016) as the Apache HTTP Server 2.4. It comprises core and module enhancements such as run-time loadable MPMs, the Event MPM, asynchronous support, per-module and per-directory configuration, per-request configuration, override configuration and reduced memory usage. According to Upguard.com (2016), Apache is freely distributed under an open source license, with the benefit that its performance can be tweaked. Rouse (2015) notes that Apache can be implemented on many operating systems: on UNIX systems (such as Linux, Solaris, Digital UNIX and AIX), on other UNIX/POSIX-derived systems (such as Rhapsody, BeOS and BS2000/OSD), on AmigaOS and on Windows 2000, although it is mainly used on Linux. Apache can be combined with Linux, MySQL and the PHP scripting language in the solution known as a LAMP web server (Appendix 2).

IIS 10.0 Express

Microsoft.com (2016) recommends a newer version of IIS, known as IIS 10.0 Express, a free, self-contained solution for testing and developing websites. It has additional benefits; for example, the same web server that runs in production can be used on the development computer. IIS Express works with VS 2010 and Visual Web Developer 2010 Express, runs on Windows XP and higher systems, does not require an administrator account, and does not require any code changes to use. It can be used with all types of ASP.NET applications, and it enables you to develop using a full IIS 7.x feature-set. IIS Express runs on Windows Service Pack 1 and later versions.

IIS.net (2016) has confirmed that Microsoft released an updated File Transfer Protocol (FTP) service, available as a separate download for Windows Server 2008 and many other server clients, which provides a robust, secure solution in a Windows environment. This FTP service was written specifically for Windows Server 2008. It enables Web authors to publish content more easily and securely than before, and offers Web administrators better integration, management, authentication and logging features.

Schaefer (2016) declares that the most current release of IIS is IIS 7.0, which manages configurations more efficiently based on three common factors: modularity, granularity and interoperability. These factors are built on the HTTP server, which also offers the added benefit of internet services such as Gopher and WAIS. Schaefer (2016) describes IIS as a configurable application platform that performs tasks within the operating system.

Gopher

Techtarget.com (2016) explains that Gopher has a client-server structure that lets users search for and retrieve text information from Gopher servers through a menu interface. The servers can store documents, articles and programs, and the menus on the interface link to other documents.

WAIS

TechTarget (2016) describes Wide Area Information Servers (WAIS) as an Internet service built around specialised databases held at multiple locations and tracked by a directory of servers kept in one location, from which users can reach them. The user submits a query to a selected database and can then connect to all servers on the distributed network.

Introduction

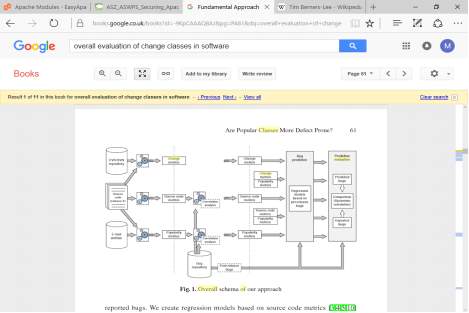

This section describes the systematic prediction approach of Taentzer (2016) for locating software defects, which gives project managers the ability to address problematic components. Performing these defect predictions can be challenging; however, with the right approach, researchers have used software code metrics to tackle the problem.

Systematic Approach

Rosenblum et al. (2010) researched this aspect and decided to inspect software defects by predicting bugs from correlated metrics. In this approach, the data is extracted from emails associated with the source code and then evaluated to give the change in metrics. Rosenblum et al. (2010) extracted defect data from known issue repositories to quantify the popularity of the metrics, using a baseline correlation between source code metrics and software defects. They also use independent variables to evaluate post-release defects with Spearman’s correlation; a schema of the results is given in Appendix 3. Once the bugs are reported, a regression stage determines the source code (34510) metrics based on that model to code (78), which can enrich and improve the measurement of popularity; across all the code, the changes in metrics, called process metrics, were extracted. Rosenblum et al. (2010) also state that the versioning system log files (CVS and SVN) show how the code was developed within a subset, by pathname and class path.

Introduction

Web-based applications commonly represent the most public interface of an enterprise network. Whether they began as offline or internal network applications, they are very likely to come into contact with the users for whom the application was intended. A security breach can result in bad publicity for an organisation, potentially damaging its image in the eyes of the public. Security must be a priority, because a failure to enforce it can be both very serious and very public.

Security

Saarinen (2017) has noticed that many machines have recently been infected with the NSA’s Double Pulsar malware. Double Pulsar is a backdoor that is installed after an exposed and vulnerable Windows box is compromised using the Eternalblue exploit. Another program spreading across our systems is called ‘Hajime’, a new vigilante worm aimed at defending against Mirai. Curiously, Walker (2017) believes that Hajime originates from white-hat hackers. The Mirai botnet, by contrast, coordinates attacks using IoT devices: it uses the masses of devices it has accumulated to overwhelm web servers with artificial traffic.

Pettit (2017) also reports on Hajime. Hajime was discovered in October 2016 and, like Mirai, travels the Internet in search of vulnerable IoT products. Most notably, Hajime uses a peer-to-peer network to communicate with its controller. Compared with Mirai’s hardcoded command and control server, Hajime’s peer-to-peer system makes it harder to shut down. Hajime’s creator pushes command signals into the peer network, and they are then incrementally pushed to connected devices.

Dede (2014) states that the list of most common vulnerabilities starts with injection, which tops the ten known security issues published by the Open Web Application Security Project (OWASP) and continues to be a major source of concern. Because of the environment in which these applications run, an attacker can manipulate an input string using specific Structured Query Language (SQL) commands. These strings can be entered in places like search boxes, login forms, and even directly in a URL, bypassing any client-side checks on the page itself. Baker (2016) notes that OWASP reports that injection occurs when “untrusted data is sent to an interpreter as part of a command or query.” It allows an attacker to gain unauthorized access to data within a database through a web application, or to alter pre-existing data. This happens when the web application inserts user input directly into a database query without any type of sanitization.
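The mechanism described above, user input inserted directly into a query, can be reproduced in a few lines. The following Python sketch uses the built-in `sqlite3` module with an in-memory database; the `users` table, the function names and the credentials are hypothetical and exist only for illustration, and passwords are stored in plain text purely to keep the example short.

```python
import sqlite3


def make_db():
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, password TEXT)")
    conn.execute("INSERT INTO users VALUES ('alice', 's3cret')")
    return conn


def login_vulnerable(conn, name, password):
    # User input is pasted straight into the SQL text: this is the flaw.
    query = (
        "SELECT COUNT(*) FROM users "
        f"WHERE name = '{name}' AND password = '{password}'"
    )
    return conn.execute(query).fetchone()[0] > 0


def login_parameterised(conn, name, password):
    # Placeholders keep the input as data, never as part of the SQL command.
    query = "SELECT COUNT(*) FROM users WHERE name = ? AND password = ?"
    return conn.execute(query, (name, password)).fetchone()[0] > 0


if __name__ == "__main__":
    db = make_db()
    payload = "' OR '1'='1"
    print(login_vulnerable(db, "alice", payload))
    print(login_parameterised(db, "alice", payload))
```

With the payload shown, the vulnerable query's WHERE clause ends in `OR '1'='1'`, which is true for every row, so the login succeeds without the password; the parameterised version treats the same string as an ordinary (wrong) password and rejects it.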

Symantec (2016) believes that, through media coverage, society is finally becoming aware of how seriously ineffective security within software can be. Appendix 3 gives a report based upon Symantec’s latest “Internet Security Report” (Symantec 2016), covering a six month period from July 2016 to December 2016 (Appendix 7).

According to Ernst (2015), German computer security expert Ralph Langner regards another serious threat, “Stuxnet as a weapon, but it’s not ‘like’ a weapon”. Langner was the first person to identify how the virus worked. “It is a weapon because it was designed to cause havoc”. The Dailymail.co.uk (2016) likewise reported that online security experts at Kaspersky Labs know of three hi-tech cyber weapons that damaged Iran’s Bushehr nuclear plant.

Web Applications

Shiflett (2004) suggests that there are known routine attacks on web applications, such as spoofing, cross-site scripting, SQL injection, session fixation and hijacking. Howard and LeBlanc [2003, pp: 366-4] also state that the severity of such attacks can range from the violation of private information and data, or identity theft, through the manipulation of data (particularly with SQL injection), to the hijacking of a host machine. This is because web applications are deployed in the public domain, where attacks can proceed publicly, and are subject to vulnerabilities within an enterprise network. Donaldson et al. (2015) suggest that security is a must for protecting an enterprise network from intrusion, because it examines the data travelling over the network to any users connected to it. The architecture therefore uses its defences to channel attacker activity towards its sensors and defensive mechanisms, which effectively reduces weaknesses and vulnerabilities.

Web Application Scanner

A web application vulnerability scanner has the added benefit of recording how the tool goes about targeting web-based attacks. Unfortunately, such scanners can be less effective than hoped and require improvement.

The web application vulnerability tool used to generate reports for this dissertation will be Nessus, because assessments need to be repeated as improvements are made. Using this tool should indicate the best ways to reduce security threats and vulnerabilities.

Description of the Problem Domain

Kerner (2015) highlights an Apache-related flaw that is a cause for concern: the Apache Struts vulnerability CVE-2017-5638, for which a patch was issued. The flaw, a remote code execution vulnerability in the web development framework, affects 25 components. Another issue in particular is an SQL injection flaw in Oracle E-Business Suite identified as CVE-2017-3549: “The code comprises an SQL statement containing strings that can be altered by an attacker.” A further flaw has also been reported, CVE-2017-3547, which is a Carriage Return Line Feed (CRLF) vulnerability in Oracle PeopleSoft. Alexander Polyakov, CTO at ERPScan, said that this vulnerability lets an attacker carry out a variety of attacks, such as cross-site scripting, hijacking of web pages, and defacement. IIS on the other hand can

Methodology

IIS

A careful approach to IIS is to organise the security precautions into categories: patches and updates, IIS lockdown, services and protocols.

The IIS Lockdown tool helps you automate certain security steps. It greatly reduces the vulnerability of a Windows 2000 web server because it allows you to pick a specific type of server role and then use custom templates to improve security for that particular server.

Apache

Results Analysis

The findings show that web applications and perimeter servers are exposed to high and medium security vulnerabilities. The fact that web applications and perimeter network devices remain vulnerable to attack can have serious consequences. Almost half of the web applications scanned contained a high security vulnerability such as XSS or SQL injection. The chart shows that administrators are better geared to protect against network vulnerabilities; however, the statistics are not reassuring at all. There was a 10% rise when the Apache and IIS servers were scanned. These two web applications were vulnerable to high security risks, with 50% having a medium security vulnerability.
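Percentages of this kind are typically derived by tallying scanner findings by severity. The Python sketch below shows one way to do that under stated assumptions: the `summarise` function, the severity labels and the sample findings are hypothetical placeholders invented for illustration and are not the results of this dissertation's scans.

```python
from collections import Counter

# Assumed severity labels, ordered from most to least serious.
SEVERITY_ORDER = ["High", "Medium", "Low", "Info"]


def summarise(findings):
    """Return (severity, count, percentage) tuples, most serious first."""
    counts = Counter(finding["severity"] for finding in findings)
    total = len(findings)
    return [
        (level, counts[level], round(100 * counts[level] / total, 1))
        for level in SEVERITY_ORDER
        if counts[level]
    ]


# Placeholder findings, written by hand; NOT real scan output.
example_findings = [
    {"target": "server-a", "name": "Cross-site scripting", "severity": "High"},
    {"target": "server-a", "name": "Version disclosed in banner", "severity": "Low"},
    {"target": "server-b", "name": "SQL injection", "severity": "High"},
    {"target": "server-b", "name": "Directory listing enabled", "severity": "Medium"},
]

for level, count, percent in summarise(example_findings):
    print(f"{level}: {count} ({percent}%)")
```

Keeping the tally in a fixed severity order makes reports from different scanners easier to compare, provided each tool's own labels are first mapped onto the same scale.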

Sampling and Data Collection

Data would be collated in the form of reports generated by each of the scanning tools mentioned above. The reports will show the value of the research by giving solutions to the problems posed by the vulnerabilities in the Apache and IIS web servers. All reports gathered would be generated by penetration testing software, e.g. Acunetix, Metasploit, Burp and Nessus.
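As a rough illustration of collating reports from several scanners, the sketch below merges findings into one data set and records which tools reported each issue. The `(host, issue)` record format and the sample data are assumptions made for the example; the real tools export their own report formats, which would need parsing first.

```python
from collections import defaultdict


def collate(reports):
    """Merge per-tool findings into {(host, issue): set of tools that reported it}.

    `reports` maps a tool name to a list of (host, issue) pairs (hypothetical format).
    """
    merged = defaultdict(set)
    for tool, findings in reports.items():
        for host, issue in findings:
            # Normalise the issue name so the same finding from two tools lines up.
            merged[(host, issue.strip().lower())].add(tool)
    return dict(merged)


if __name__ == "__main__":
    sample = {
        "Nessus": [("10.0.0.5", "SQL Injection")],
        "Acunetix": [("10.0.0.5", "sql injection "), ("10.0.0.6", "XSS")],
    }
    for key, tools in sorted(collate(sample).items()):
        print(key, sorted(tools))
```

A finding confirmed by more than one tool is generally a stronger candidate for the final report than one seen by a single scanner.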

Further steps are required to arrive at the most suitable form of penetration testing: Exploitation, Intelligence Gathering, Reporting and Threat Modelling.

Exploitation & Post-Exploitation

Exploitation is the stage of the penetration testing methodology in which a computer or system is tested for an exploitable vulnerability. Once a vulnerability allows access to the web server systems, the tester works continually to avoid detection and may also use “privilege escalation” to reach further assets on the system.

As the penetration testing methodology progresses to post-exploitation, the tester goes through the compromised machine or entry point and determines whether it could be further exploited for later use.

Intelligence Gathering

This phase of the penetration testing methodology covers the preliminary planning of the attack. In a properly planned pen-test, the provider will have a clear idea of what is off limits and what is fair game.

Your provider is not doing their job unless they uncover critical information about your business, its employees, its assets and its liabilities. As such, the time spent on this step of the penetration testing methodology can be quite extensive.

Establishing ground rules is important in your penetration testing methodology, because testers will otherwise discover information however they can, even if that means searching through the company’s personal data.
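The ground rules on what is off limits and what is fair game can be encoded and checked before any scan is launched. The following is a minimal sketch using Python's standard `ipaddress` module; the `IN_SCOPE` and `OFF_LIMITS` ranges are hypothetical (they are reserved documentation addresses), as is the `is_fair_game` helper.

```python
import ipaddress

# Hypothetical ground rules agreed with the client (documentation address ranges).
IN_SCOPE = [ipaddress.ip_network("192.0.2.0/24")]
OFF_LIMITS = [ipaddress.ip_network("192.0.2.128/25")]


def is_fair_game(target):
    """Return True only if target is in scope and not explicitly excluded."""
    addr = ipaddress.ip_address(target)
    # Exclusions are checked first so that they always win over the scope list.
    if any(addr in net for net in OFF_LIMITS):
        return False
    return any(addr in net for net in IN_SCOPE)


if __name__ == "__main__":
    for host in ("192.0.2.10", "192.0.2.200", "198.51.100.1"):
        print(host, is_fair_game(host))
```

Checking exclusions before inclusions means a host that appears in both lists is treated as off limits, which is the safer default.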

Reporting

A thorough penetration testing methodology involves a great deal of work in data collection, analysis and exploitation, all of which must be reported. Here are some considerations:

- Get Specifics: High-level recommendations may provide a basic context for the problems with your web applications, but they aren’t always very helpful to the people charged with implementation.

- Walk-Through: A walk-through of the relevant attack scenarios provides vital knowledge, showing how each was accomplished and how difficult it was.

- Risk Level: The report should detail the risk level of the vulnerabilities encountered, as well as assess the potential business impact if they are exploited.

Threat Modelling

Once the relevant documentation has been gathered, the next step of the penetration test is to determine the primary and secondary target assets and scrutinise them further.

Assets could entail a variety of different elements, including organizational data (e.g., policies, procedures), employee and customer data, and “human assets”: high-level employees who could be exploited in a number of ways.

Qualitative Approach

The case study report would take a qualitative approach, giving a subjective view based on other technicians’ work, such as the many ways of exploiting web servers, and drawing a new conclusion on how security can be better controlled within the Blackpool & Fylde College environment. It involves the use of client software and downloaded installations of the Apache and IIS web servers.

All collated information would be stored in Dropbox for safe retrieval afterwards.

Design

- NESSA: Topology diagrams using Cisco symbols with keys, annotations and descriptions

Development / Implementation

- NESSA: Snippets of raw data output from monitoring software / configuration information

Testing

Pylot is a free, open source tool for testing the performance and scalability of web services. It runs HTTP load tests, which are useful for capacity planning, benchmarking, analysis, and system tuning. Pylot generates concurrent load (HTTP requests), verifies server responses against regular expressions, and produces reports with metrics. Test suites are executed and monitored from a GUI or a shell/console. It supports HTTP and HTTPS, is multi-threaded, and generates real-time statistics. The environment can be tested in the following ways:

Stress testing

The purpose of web server stress testing is to find the target application’s crash point. The crash point is not always an error message or access violation; it can be a perceptible slowdown in request processing. Stress testing probes the performance limits of an application, determining its robustness, availability, and error handling under a heavy load. It is an important issue because of the different types of software available. Stress tests commonly put a greater emphasis on robustness.
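The search for a crash point can be sketched as a ramp over increasing load levels that stops at the first hard error or at the first response time above a chosen threshold. The sketch below is an assumption-laden illustration: `find_crash_point` is a hypothetical helper, and `simulated_server` is an invented response-time model standing in for measurements against a real Apache or IIS instance.

```python
def find_crash_point(measure, levels, max_seconds):
    """Return the first load level that fails, or None if every level passes.

    A level fails if `measure(level)` raises an error or reports a response
    time above `max_seconds`.
    """
    for level in levels:
        try:
            elapsed = measure(level)
        except Exception:
            return level  # a hard failure counts as the crash point
        if elapsed > max_seconds:
            return level  # a perceptible slowdown counts as well
    return None


def simulated_server(concurrent_users):
    """Hypothetical model: flat response time up to 100 users, then linear decay."""
    if concurrent_users > 400:
        raise ConnectionError("server stopped responding")
    overload = max(0, concurrent_users - 100)
    return 0.05 + 0.01 * overload


if __name__ == "__main__":
    levels = [50, 100, 200, 300, 500]
    print(find_crash_point(simulated_server, levels, max_seconds=1.0))
    print(find_crash_point(simulated_server, levels, max_seconds=5.0))
```

With a strict threshold the slowdown is reported as the crash point; with a lenient one the ramp continues until the simulated server stops responding altogether.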

Scalability testing

Scalability testing focuses on the performance of websites, software, hardware and applications at all stages from minimum to maximum load. Scalability is the ability of a network, system or process to continue to function well when the size or volume of the system changes to meet growing need. Scalability testing is a type of non-functional testing.

Load testing

- Load testing focuses on testing an application under heavy loads, to determine at what point the system response time degrades or fails.

- It would be appropriate to include a completed test log in appendices with reference to it in this section and a summary of key aspects. The test log should include specificity in inputs, expected outputs, actual outputs and any amendments / evidence.
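The kind of HTTP load test described in this section (concurrent requests, responses verified with a regular expression, summary metrics) can be sketched with the Python standard library alone. This is not Pylot's own interface; `DemoHandler`, `fetch`, `run_load_test` and `demo` are hypothetical names, and a throwaway local server stands in for Apache or IIS so that the sketch is self-contained.

```python
import re
import threading
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Bypass any system proxy so that requests to the local demo server go direct.
OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


class DemoHandler(BaseHTTPRequestHandler):
    """Stand-in target so the sketch runs without an external web server."""

    def do_GET(self):
        body = b"<html><title>demo</title></html>"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the console quiet


def fetch(url, pattern):
    """Issue one GET request; return (verified, elapsed_seconds)."""
    start = time.perf_counter()
    try:
        with OPENER.open(url, timeout=5) as resp:
            text = resp.read().decode("utf-8", "replace")
            ok = resp.status == 200 and re.search(pattern, text) is not None
    except Exception:
        ok = False  # any failure to fetch counts as an error
    return ok, time.perf_counter() - start


def run_load_test(url, pattern, agents=5, requests_per_agent=4):
    """Send agents * requests_per_agent requests using `agents` worker threads."""
    total = agents * requests_per_agent
    with ThreadPoolExecutor(max_workers=agents) as pool:
        results = list(pool.map(lambda _: fetch(url, pattern), range(total)))
    times = [elapsed for _, elapsed in results]
    return {
        "requests": total,
        "errors": sum(1 for ok, _ in results if not ok),
        "avg_seconds": sum(times) / total,
        "max_seconds": max(times),
    }


def demo():
    """Start the local stand-in server, run the load test, and shut down."""
    server = ThreadingHTTPServer(("127.0.0.1", 0), DemoHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        url = "http://127.0.0.1:%d/" % server.server_address[1]
        return run_load_test(url, r"<title>demo</title>")
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(demo())
```

Pointing `run_load_test` at a test installation of Apache or IIS, and raising `agents` step by step, would give the inputs and actual outputs that a test log needs to record.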

Results by level of severity

The severity criteria applied to web server technology are based on the standards of severity defined by CVSS v2.

Figure 4. Representation of categories of vulnerabilities by level of severity

| Type of Vulnerability | Configuration Errors | Information Leakage on Metadata | Information on Management Errors | Improper Validation Errors | Cryptographic Issues | Permission, Privileges & Access Controls |
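Grouping findings by level of severity can be sketched as follows, assuming the qualitative ranges the National Vulnerability Database applies to CVSS v2 base scores (Low 0.0 to 3.9, Medium 4.0 to 6.9, High 7.0 to 10.0). The helper names and the sample scores are hypothetical and are not results from this study.

```python
from collections import Counter


def cvss_v2_severity(score):
    """Map a CVSS v2 base score to the NVD qualitative severity level."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS v2 base scores range from 0.0 to 10.0")
    if score >= 7.0:
        return "High"
    if score >= 4.0:
        return "Medium"
    return "Low"


def count_by_severity(findings):
    """Count findings per severity; `findings` is a list of (category, score) pairs."""
    return Counter(cvss_v2_severity(score) for _, score in findings)


if __name__ == "__main__":
    # Illustrative scores only, not measured data.
    sample = [
        ("Configuration Errors", 5.0),
        ("Cryptographic Issues", 4.3),
        ("Improper Validation Errors", 7.5),
        ("Information Leakage on Metadata", 2.6),
    ]
    print(count_by_severity(sample))
```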

Conclusions

Apache was developed to allow web server operation on computers with the Unix operating system. Hence, it began as a highly technical program, not even available for Windows. This is still reflected, for example, in the absence of default web-based administration tools. In contrast, IIS grew from the Windows operating system. As such, it had limited functionality in early versions, but all the accessibility and ease of use implied by its basis in the Windows OS. Since its inception, additional functionality has become available, to the extent that Microsoft now supplies a cut-down Personal Web Server as a way to share information at a peer-to-peer level in organisations, with IIS as its flagship web server software.

www.Acunetix.com (2015) has further suggested that web application vulnerabilities have increased more over time than network vulnerabilities. Vulnerabilities exposed to cyber attacks have risen dramatically, which confirms that many organizations are still failing to develop secure software and patch vulnerabilities, some of which have a low barrier to entry, and so remain vulnerable to common attacks. Cross-site Scripting and SQL Injection, the two most well-known methods of web app attack, remain two of the most common vulnerabilities, detected in a large percentage of the scans. Most concerning is that many scans found that the main superbugs of 2014, especially POODLE, have not been patched.

Conclusion

Mitchell (2016) recognizes that, generally speaking, Apache is the most preferred web (HTTP) server within a Linux environment. It supports the plug-in modules mentioned previously, with features that include a full range of CGI, SSL, and virtual domains. Microsoft (2016), by contrast, states that IIS is still a free, self-contained solution for testing and developing websites, with additional benefits such as the server being able to work with your production server on the development computer.

In addition to web servers, Dr. Miller (2015) reports that he found countless vulnerabilities in a number of Apple products, including laptops and phones. Perhaps the most notorious of these issues was the ability to remotely compromise an iPhone by merely sending it a malicious text message. He also has the distinction of having been the first to remotely exploit the iPhone when it was released, as well as the first Android phone (on the day it came out). After that, he began focusing on embedded security and has done research in the fields of laptop battery security and near field communications (NFC) on mobile phones.

“The scale and breadth of hacks we’ve seen in 2016 show that governments and companies must do more to safeguard these essential digital rights. 2017 must be the year to change things”. Guardian (2016)

In order to provide effective advice for companies on the appropriate web server technology to use, the following central questions must be answered:

- What are the issues regarding the deployment of an Apache or IIS web server in an enterprise environment?

- Does the difference in cost between free and paid-for software have a bearing on performance and security?

- What other types of implementations are there?

- What other potential threats can organisations expect to see in 2017?

Bibliography

Paquet, C. (2017) Network Security Concepts and Policies > Building Blocks of Information Security. Available at: http://www.ciscopress.com/articles/article.asp?p=1998559 (Accessed: 20 April 2017).

Helmke, M. (2015). Ubuntu Unleashed 2016 Edition. 1st ed. [Place of publication not identified]: Sams.

A. rights (no date) Apache vs. IIS – difference and comparison. Available at: http://www.diffen.com/difference/apache_vs_iis (Accessed: 31 January 2017).

Ernst, A. (2015) Is this the future of cyber warfare? Available at: http://america.aljazeera.com/watch/shows/america-tonight/articles/2015/2/5/blackenergy-malware-cyberwarfare.html (Accessed: 31 January 2017).

Baker (2016) 14 years of SQL injection and still the most dangerous vulnerability. Available at: https://www.netsparker.com/blog/web-security/sql-injection-vulnerability-history/ (Accessed: 31 January 2017).

Bell, B. (2001) Security strengths and weaknesses of two popular web servers. Available at: https://www.sans.org/reading-room/whitepapers/webservers/security-strengths-weaknesses-popular-web-servers-293 (Accessed: 31 January 2017).

Bologna, S. and Bucci, G. (2013) Achieving quality in software: Proceedings of the third international… Available at: https://books.google.co.uk/books?id=fcPUBwAAQBAJ&pg=PA126&dq=why+does+evaluation+give+the+source+code+a+change+in+metrics&hl=en&sa=X&redir_esc=y#v=onepage&q=whydoesevaluationgivethesourc code=false (Accessed: 23 February 2017).

Khoshbin, A. (2017) Educational Information Security Laboratories. Available at: http://ltu.diva-portal.org/smash/get/diva2:1046184/FULLTEXT03.pdf (Accessed: 20 April 2017).

Consulting, A. (2017) Penetration testing. Available at: https://www.appsecconsulting.com/security-testing/penetration-testing/web-services-penetration-testing (Accessed: 31 January 2017).

David Charles (2016) Zero days – security leaks for sale (2015) | top documentary film HD. Available at: https://www.youtube.com/watch?v=XpYTE8-PlZA (Accessed: 31 January 2017).

Dede, D. (2014) Are you under attack? Available at: https://blog.sucuri.net/2014/11/most-common-attacks-affecting-todays-websites.html (Accessed: 31 January 2017).

Grimes, R.A. (2011) Lesson from Apache flaw: Test everything. Available at: http://www.infoworld.com/article/2620721/application-security/lesson-from-apache-flaw–test-everything.html (Accessed: 31 January 2017).

Lee, R. (2016) Software engineering, artificial intelligence, networking and parallel… Available at: https://books.google.co.uk/books?id=TvggDAAAQBAJ&pg=PA19&dq=computing+codes+change+in+metrics&hl=en&sa=X&redir_esc=y#v=onepage&q=computing%20codes%20change%20in%20metrics&f=false (Accessed: 23 February 2017).

Lindros, K. and Tittel, E. (2014) How to choose the best vulnerability scanning tool for your business. Available at: http://www.cio.com/article/2683235/security0/how-to-choose-the-best-vulnerability-scanning-tool-for-your-business.html (Accessed: 31 January 2017).

Marketing, H. (2010) Which web server: IIS vs. Apache. Available at: http://www.hostway.com/blog/which-web-server-iis-vs-apache/ (Accessed: 31 January 2017).

Net-informations.com (2017) What is IIS – Internet Information Server. Available at: http://www.net-informations.com/faq/asp/iis.htm (Accessed: 18 April 2017).

Rouse, M. (2007) What is stress testing? – definition from WhatIs.Com. Available at: http://searchsoftwarequality.techtarget.com/definition/stress-testing (Accessed: 31 January 2017).

Saarinen, J. (2017) iTnews – For Australian Business. Available at: https://www.itnews.com.au/author/juha-saarinen-224495 (Accessed: 24 April 2017).

TED (2011) Ralph Langner: Cracking Stuxnet, a 21st-century cyber weapon. Available at: https://www.youtube.com/watch?v=CS01Hmjv1pQ (Accessed: 31 January 2017).

Up Guard (2017) IIS vs. Apache. Available at: https://www.upguard.com/articles/iis-apache (Accessed: 31 January 2017).

Walker, J. (2017) ‘Vigilante’ worm fights Mirai botnet for control of your devices. Available at: http://www.digitaljournal.com/tech-and-science/technology/vigilante-worm-fights-mirai-botnet-for-control-of-your-devices/article/490756#ixzz4f7e17Iho (Accessed: 24 April 2017).

Waugh, R. (2011b) Lethal Stuxnet cyber weapon is ‘just one of five’ engineered in same lab – and three have not been released yet. Available at: http://www.dailymail.co.uk/sciencetech/article-2079725/Lethal-Stuxnet-cyber-weapon-just-engineered-lab.html#ixzz4WEeblUuM (Accessed: 31 January 2017).

References

Performance, W. (2017b) The fastest Web server? Available at: http://www.webperformance.com/load-testing/blog/2011/11/what-is-the-fastest-webserver/ (Accessed: 31 January 2017).

Inc, S. (2017) Web server. Available at: https://www.scribd.com/presentation/2321136/Webserver (Accessed: 31 January 2017).

Stuttard, D. and Pinto, M. (2014) Home. Available at: https://danielmiessler.com/projects/webappsec_testing_resources/#gs.83bBPYY (Accessed: 31 January 2017).

http://www.hostway.com/blog/which-web-server-iis-vs-apache/

http://www.webperformance.com/load-testing/blog/2011/11/what-is-the-fastest-webserver/

http://www.diffen.com/difference/apache_vs_iis

http://news.netcraft.com/archives/2014/02/03/february-2014-web-server-survey.html

https://www.upguard.com/articles/iis-apache

https://news.netcraft.com/archives/2016/02/22/february-2016-web-server-survey.html

https://news.netcraft.com/archives/category/performance/

http://searchsoftwarequality.techtarget.com/definition/stress-testing

http://researchpedia.info/difference-between-linux-unix-and-windows/

http://researchpedia.info/difference-between-apache-and-iis/

https://www.youtube.com/watch?v=CS01Hmjv1pQ

http://www.dailymail.co.uk/sciencetech/article-2079725/Lethal-Stuxnet-cyber-weapon-just-engineered-lab.html#ixzz4WEeblUuM

https://www.youtube.com/watch?v=XpYTE8-PlZA

[1] Mehmed Aksi (2012). Software Architectures and Component Technology. London: Springer. 385.

[2] Scott Donaldson. (2015). Network Security. In: Stanley Siegel, Chris K. Williams, Abdul Aslam Enterprise Cyber security: How to Build a Successful Cyber defence Program. London: Apress. 536.

Peter Guttmann (2007). Cryptographic Security Architecture: Design and Verification. London: Springer. 320

Alexander Pons. (2003). EVALUATION OF SERVER-SIDE TECHNOLOGY. N/A. 1 (1), 5.

Up guard. (2016). IIS vs. Apache. Available: https://www.upguard.com/articles/iis-apache. Last accessed 06/12/2016.

Timothy John Berners-Lee. (2016). Who is TimBL? Available: http://www.famousscientists.org/timothy-john-berners-lee/. Last accessed 06/12/2016

Appendices

Appendix 1 – Evaluation of composition data when adding synchronisation

Appendix 2. The middle and backend tiers can contain more than one Web Server and Data Server respectively, expanding to an n-tier design.

Appendix 3 – Structure of a LAMP Website.

| Module name | Apache 2.2 | Apache 2.4 | Basic profile | Mod Ruid2 profile | MPM ITK profile | Description |
|---|---|---|---|---|---|---|
| MPM Event | | | | | | An experimental variant of the standard worker MPM |
| MPM Prefork | | | | | | Implements a non-threaded, pre-forking web server |
| MPM Worker | | | | | | Implements a hybrid multi-threaded multi-process web server |
| MPM ITK | | | | | | Implements a non-threaded web server that allows each Virtual Host to run under a separate UID and GID. We introduced the MPM ITK option to servers that use Apache version 2.2 in the following versions: |

Appendix 4 – Multi-processing modules (MPMs)

Appendix 5 – Overall schema of the approach

Appendix 6 – Internet Security Threat Report, Volume 21, April 2016

Symantec Statistic Report

Attackers profit from flaws in browsers and website plug-ins:

In 2015, the number of zero-day vulnerabilities discovered more than doubled to 54, a 125 percent increase from the year 2014.

Companies aren’t reporting the full extent of breaches:

In 2015, we saw a record-setting total of nine mega-breaches, and the reported number of exposed identities jumped to 429 million.

Web administrators still struggle to stay current on patches:

There were over one million web attacks against people each day in 2015. Cybercriminals continue to take advantage of vulnerabilities in legitimate websites to infect users, because website administrators fail to secure their websites.

Cyber attackers are playing the long game:

In 2015, a large business that was targeted for attack once was most likely to be targeted again at least three more times throughout the year. Businesses of all sizes are potentially vulnerable to targeted attacks.

Appendix 7 – (Symantec 2016) covering a six month period from July to December.

Appendix 8

Appendix 9

Appendix 10

Appendix 11

Appendix 12 – PHP Hypertext Pre-processor

Microsoft. (2008). What's New for Microsoft and FTP in IIS 7. Available: https://www.iis.net/learn/get-started/whats-new-in-iis-7/what39s-new-for-microsoft-and-ftp-in-iis-7. Last accessed 06/12/2016.

Difference Between IIS and Apache. Available: http://www.differencebetween.net/technology/difference-between-iis-and-apache/#ixzz4O0j4gHcN

Kenneth Schaefer, Jeff Cochran, Scott Forsyth, Rob Baugh, Mike Everest, Dennis Glendenning. Professional IIS 7.

https://www.iis.net/learn/get-started/whats-new-in-iis-7/what39s-new-for-microsoft-and-ftp-in-iis-7

http://home.cern/topics/birth-web

Personal File Backup in Cloud

J. Perry1 and D. Yoon2 1,2CIS Department, University of Michigan, Dearborn, MI, USA
