Are Quantum Computers the next Computing Revolution?

Info: 8781 words (35 pages) Dissertation

Published: 16th Dec 2019

Tagged: Computing

Quantum Computers – The next computing revolution?

Introduction

Computers are an integral and indispensable part of everyday life. Millennials and subsequent generations will know no way of living other than with the help of a computer. Things that were impossible to imagine 50 years ago (on-demand TV, online shopping, virtual reality, video calling and automated banking) are now commonplace, connecting us all and making life easier, more comfortable and more enjoyable than ever before. Indeed, the computers which have simplified our lives are incredibly sophisticated, and the benefits they deliver have been so impressive and consistent that it is hard to imagine a limit to what can be achieved.

Almost 50 years ago, Moore’s law predicted that the number of transistors on a chip, and with it computer processing power, would double every two years. So far, this prediction has been borne out, mainly because it has been possible to significantly reduce the size of transistors (semiconductor devices which control electronic signals in computers), with current generation CPUs featuring transistors smaller than the HIV virus.1 However, the industry is approaching the physical limits of miniaturisation: transistors are becoming so minute that electrons can simply pass through them, undermining their key function as switches. Reaching this point could signal the end of the consistent growth in computing power which we have enjoyed for the past 40 years and the breaking point for Moore’s law, bringing the computer industry to a crossroads: stagnation or revolution.

The physical limitation of microchips opens the door to a whole new generation of computers. Perhaps we could see the creation of a new type of transistor utilising innovative new materials, or maybe we could see a fundamentally different type of computer system. The latter is arguably the more exciting possibility, as it leaves room for a massive amount of innovation. One of these new systems is the quantum computer, a computer which even at base level operates completely differently to its ‘classical’ counterpart, and which promises to solve some of the problems that even humanity’s fastest computers cannot.

This new computer is the core of my essay, in which I investigate how the machine functions and the potential impact it could have across our society. When discussing these subjects, I will draw constant comparisons to the classical computer, explaining the differences between the two and concluding whether quantum computers will be as revolutionary as the classical computer was.

Part I – Classical and Quantum Computers:

Beginning of the Classical Computer

To understand the significance of the quantum computer, it is important first to discuss the origins and basic building blocks of the computer we know and use today, referred to herein as the ‘classical computer’. The definition of a ‘computer’ is broad, and the origins of the machine can be traced back to various points in history. The first computers were nothing more than mathematical instruments serving as giant mechanical calculators. Charles Babbage’s Difference Engine, for example, credited as the first automatic computing machine, was conceptualised in 1822 2. Computing technology continued to develop slowly throughout the 19th Century, with most designs operating mechanically. It was more than 100 years later, in the 1950s, that more modern computing machines started to emerge. Although they bore little resemblance to the computers we have today, the premise behind how they worked was similar. These early computers used vacuum tubes as switches to control the flow of electrons 3 and were some of the first digital programmable computers to reach the commercial market. Vacuum tubes were horribly energy inefficient and often broke, not to mention the massive amount of physical space they required. Innovation was needed, and it soon followed: in 1971 a new revolution in computing began when Intel created the first commercially available microprocessor, the Intel 4004. It was announced as “A New Era in Integrated Electronics” 4, which it truly was. This consumer-ready technology paved the way to where we are now, allowing for increasingly fast calculations on increasingly small and energy-efficient microchips.

A computer system is generally made up of the following parts: the central processing unit (‘CPU’, of which the Intel 4004 is an example), random access memory (known as RAM), a secondary storage device and a motherboard to allow the components to communicate with each other. At a base level, all ‘communications’ between the components of a classical computer are made in binary, a language which consists, quite simply and remarkably, of two digits: 1 and 0. Binary is used by all classical computers because its two-valued nature matches how the electronics inside the computer function. The central processing unit in a modern computer contains literally billions of transistors on microchips manufactured from silicon. A transistor regulates the flow of electrons and, in a classical computer, serves as an on/off switch depending on whether a high or low voltage is applied to it 5. This explains why computers use binary: transistors are used in only two states, on or off, just as binary has only two values, 0 and 1. In the industry, a single binary digit is referred to as a bit; 8 bits are a byte, 1000 bytes a kilobyte, 1 million bytes a megabyte and so on.
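The link between numbers, text and bits can be made concrete with a minimal Python sketch. The function names `to_bits` and `text_to_bits` are illustrative choices rather than part of any standard, and the sketch assumes plain ASCII text with the decimal definition of a kilobyte used above.

```python
BITS_PER_BYTE = 8
BYTES_PER_KILOBYTE = 1000  # decimal convention, as used in the text


def to_bits(value: int, width: int = BITS_PER_BYTE) -> str:
    """Return the binary digits of a non-negative integer, zero-padded to `width`."""
    return format(value, f"0{width}b")


def text_to_bits(text: str) -> list[str]:
    """Encode ASCII text as one 8-bit pattern per character."""
    return [to_bits(byte) for byte in text.encode("ascii")]


if __name__ == "__main__":
    print(to_bits(5))            # 00000101
    print(text_to_bits("Hi"))    # ['01001000', '01101001']
    print(BITS_PER_BYTE * BYTES_PER_KILOBYTE, "bits in a kilobyte")
```

Each character of the text ends up as a pattern of eight on/off values, which is exactly what a row of eight transistor switches can hold.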

Transistors do more than act as isolated switches: they are combined to create logic gates, which implement a form of algebraic logic known as Boolean algebra in which all values are reduced to either TRUE or FALSE. Logic gates represent Boolean relationships between values, in this case binary’s 0s and 1s. An example is the AND gate, which requires two high voltage inputs (two 1s in binary) to output a high voltage response (a single 1 in binary). Another example is the OR gate, which requires only one high voltage input to give a high voltage output, while the NOT gate outputs the opposite of the input it receives. These gates use exceptionally simple logic, but when combined they can perform mathematical functions such as addition or subtraction, which can be combined further to perform the even more complicated functions needed to operate every modern computer.
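How simple gates combine into arithmetic can be sketched in a few lines of Python. This is an illustrative model only: the gates are ordinary functions on the integers 0 and 1, the adder layout is the textbook half-adder/full-adder construction, and the helper name `add_bits` is a hypothetical choice.

```python
def AND(a: int, b: int) -> int:
    return a & b


def OR(a: int, b: int) -> int:
    return a | b


def NOT(a: int) -> int:
    return 1 - a


def XOR(a: int, b: int) -> int:
    # Built only from AND, OR and NOT: true when exactly one input is 1.
    return OR(AND(a, NOT(b)), AND(NOT(a), b))


def half_adder(a: int, b: int) -> tuple[int, int]:
    """Add two bits; return (sum bit, carry bit)."""
    return XOR(a, b), AND(a, b)


def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Add two bits plus an incoming carry; return (sum bit, carry out)."""
    partial_sum, carry_1 = half_adder(a, b)
    total, carry_2 = half_adder(partial_sum, carry_in)
    return total, OR(carry_1, carry_2)


def add_bits(x_bits: list[int], y_bits: list[int]) -> list[int]:
    """Add two equal-length numbers given as bit lists, least significant bit first."""
    carry = 0
    result = []
    for a, b in zip(x_bits, y_bits):
        total, carry = full_adder(a, b, carry)
        result.append(total)
    result.append(carry)  # final carry becomes the most significant bit
    return result


if __name__ == "__main__":
    five = [1, 0, 1, 0]   # 5, least significant bit first
    three = [1, 1, 0, 0]  # 3
    print(add_bits(five, three))  # [0, 0, 0, 1, 0], i.e. 8
```

Chaining one full adder per bit position is the simplest way to add whole numbers; real processors use faster arrangements, but they are built from the same gates.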

An important part of the commercial computer industry over the last half century has been Moore’s Law. Gordon Moore, a co-founder of Intel, first stated his law in 1965 and revised it in 1975, predicting that the number of transistors on a computer chip, and along with it processing power, would double every two years.32 Moore’s prediction has turned out to be true, and it has almost been a goal of manufacturers to adhere to his law. If we compare the first commercial microprocessor (the Intel 4004) to a modern-day microprocessor (the AMD Ryzen 7 1800X), the difference is astonishing. In 1971, the Intel 4004 was released, featuring a clock speed of 740 kHz 7 (740,000 cycles per second) and using a 10,000-nanometre manufacturing process for its 2300 transistors 8. Fast-forward to the present day: Advanced Micro Devices’ (AMD’s) high-end consumer CPU, the Ryzen 7 1800X, features a maximum clock speed of 4.0 GHz 9 (4,000,000,000 cycles a second) and uses a 14-nanometre manufacturing process for its 4,200,000,000 transistors 10. In just under 50 years, there has been a 1.8-million-fold increase in the number of transistors on a microchip, with a manufacturing process roughly 710 times smaller.
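The comparison can be checked with some rough arithmetic. The sketch below uses the transistor counts and process sizes quoted above; the 2017 release year for the Ryzen 7 1800X and the function name `moores_law_projection` are assumptions added for illustration.

```python
import math

# Figures quoted in the text; the Ryzen release year (2017) is an assumption.
INTEL_4004 = {"year": 1971, "transistors": 2_300, "process_nm": 10_000}
RYZEN_1800X = {"year": 2017, "transistors": 4_200_000_000, "process_nm": 14}


def moores_law_projection(start_count: float, start_year: int, end_year: int,
                          doubling_period_years: float = 2.0) -> float:
    """Project a transistor count forward, doubling every `doubling_period_years`."""
    doublings = (end_year - start_year) / doubling_period_years
    return start_count * 2 ** doublings


transistor_ratio = RYZEN_1800X["transistors"] / INTEL_4004["transistors"]
process_ratio = INTEL_4004["process_nm"] / RYZEN_1800X["process_nm"]
projected = moores_law_projection(
    INTEL_4004["transistors"], INTEL_4004["year"], RYZEN_1800X["year"]
)

# Doubling period that would exactly reproduce the observed growth.
elapsed_years = RYZEN_1800X["year"] - INTEL_4004["year"]
implied_doubling_years = elapsed_years / math.log2(transistor_ratio)

if __name__ == "__main__":
    print(f"Transistor count ratio: {transistor_ratio:,.0f}")
    print(f"Process size ratio: {process_ratio:.0f}")
    print(f"Strict two-year doubling would predict: {projected:,.0f} transistors")
    print(f"Implied doubling period: {implied_doubling_years:.2f} years")
```

On these figures the observed growth corresponds to a doubling roughly every 2.2 years, slightly slower than a strict two-year rule but close to it over almost five decades.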

The race for miniaturisation is still underway, driven by an ever-increasing demand for more powerful computers. There is an inverse relationship between computer power and transistor size: the more powerful a computer is, the smaller the transistors crammed into its CPU. The 14 nanometre (nm) transistors inside our computers are unimaginably small. A human red blood cell is gigantic in comparison at around 7000 nm, while the HIV virus is 90 nm and Porcine circovirus, one of the smallest viruses, is 17 nm 11. The next leap in transistor size is expected this year (2018), when Intel’s next microchip architecture, Cannonlake, is expected to go into production with the smallest commercially available process at 10 nm 12. The industry standard transistor, the MOSFET or Metal-Oxide-Semiconductor Field-Effect Transistor, is expected to continue to be miniaturised until 2026, when it will be 5.9 nanometres long 14. Exciting stuff, but is there a point where transistors simply can’t get any smaller?

Unsurprisingly, yes: there is a limit and, despite the continued work in transistor reduction technology, the end is in sight. The reason is not a manufacturing limitation, since the technology and tools are available; rather, it lies in the physical limits of an operational transistor at this scale. There is a point with our current transistor technology where transistors will be so small and so close together that the electrons which are meant to be controlled by them will experience quantum tunnelling, a leakage of electrons. The electrons will simply pass through the gates even when they are not meant to, undermining the intended function of transistors as there would be no ‘off’ state 13.

Current transistor reduction models assume we will find replacement materials as part of the transistor formula to patch any ‘leaks’ which might appear 15. Even if we do keep the MOSFET running at 5.9 nm, we will eventually need to find a whole new replacement. The continued shrinking of the MOSFET is not sustainable, and relying on this technology alone will mark the end of Moore’s Law. If we want to continue the growth in computing power which we have experienced, a solution to this problem is needed. A radical but exciting alternative is the quantum computer.

The Beginning of the Quantum Computer

Although still in its very early days and considered by many to be futuristic technology, the quantum computer operates differently to a modern computer. The idea of creating a quantum computer is credited to the world-renowned American theoretical physicist Richard Feynman almost 40 years ago. Feynman, a Nobel Prize winner, came up with the idea of adapting a computer to use quantum mechanics (a field of physics which describes the nature of particles at the smallest scale) to solve otherwise unsolvable problems 16. As discussed above, classical computers use transistors, tiny switches which output either a high or a low voltage signal: a strictly two-valued language. Quantum computers operate differently. Instead of using bits (a 1 or a 0) to store and process information, quantum computers use qubits, or quantum bits. The difference between these lies in the value which they hold: unlike a bit, a qubit can be a 0, a 1 or a combination of both at once. This is known as a superposition, and it is a radical departure from the two-valued language of classical computing.

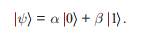

In quantum physics, a superposition is when a particle can be in two different states at the same time 18:

(19)

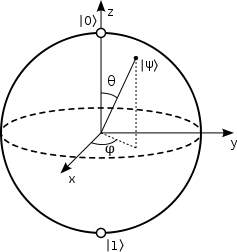
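For reference, the standard single-qubit superposition can be written out in Dirac notation. This is the usual textbook form, assumed here to match the pictured equation; the normalisation condition on the second line is a standard addition and ensures that the two measurement probabilities sum to one.

```latex
\begin{align}
  |\psi\rangle &= \alpha\,|0\rangle + \beta\,|1\rangle, \qquad \alpha, \beta \in \mathbb{C} \\
  |\alpha|^{2} + |\beta|^{2} &= 1
\end{align}
```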

The ‘simple’ equation above shows α and β as complex numbers (the amplitudes of the superposition) and ψ as the overall quantum state 20. We cannot, however, observe the exact quantum state which the qubit is in; instead, when we measure the qubit it gives a result of 0 or 1 21. The probability of each outcome is found by taking the squared magnitudes of α and β: there is a |α|² chance of measuring 0 and a |β|² chance of measuring 1. To represent a single qubit geometrically, a Bloch sphere is used 22:

(23)

With this, any state of a single qubit can be shown as a point on the surface of the sphere. Any superposition of |0⟩ and |1⟩ corresponds to a vector between the two poles, with θ ranging from 0° to 180°. A change in the angle ϕ (rotation around the Z axis) results in the qubit changing its phase 23.

Adding another qubit to the system makes entanglement between the components of the system possible 25. Entanglement is a principle of quantum physics which says that some particles are linked together, so that measuring the quantum state of one particle determines the state of the other 26. The particles could be trillions of miles apart, yet measuring one would instantly fix the outcome for the other 27. This strange concept was described as “spooky action at a distance” by Albert Einstein 28. It can be imagined in quantum computing as a two-qubit entangled system: if we measure one of the qubits, we get a result of either 0 or 1. If the qubits are entangled in an anti-correlated state, we know with absolute certainty that the other is going to be the opposite of the one we measured. If we obtain the result 0 first, then we are guaranteed to get a 1 on the next one 29. The amount of information needed to describe the system grows as we add more qubits, scaling as 2ⁿ with n being the number of qubits 30. This means a 1000-qubit system could be in a superposition of roughly 10³⁰¹ states, a number so large that it could not be written down one state per atom in our universe, which contains around 10⁸² atoms 30. This shows the gigantic scale of information which can be contained in a quantum system, and that it would leave any classical system in the dust.
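The ideas above (Bloch sphere angles, measurement probabilities, anti-correlated entanglement and the 2ⁿ scaling) can be illustrated with a small classical simulation. This is a sketch under stated assumptions: it uses the standard parametrisation α = cos(θ/2), β = e^(iϕ) sin(θ/2), it models the entangled pair as the state (|01⟩ + |10⟩)/√2, and all function names are hypothetical. It only mimics measurement statistics and is not a quantum computation.

```python
import cmath
import math
import random


def bloch_to_amplitudes(theta: float, phi: float) -> tuple[complex, complex]:
    """Convert Bloch sphere angles (radians) to the amplitudes (alpha, beta)."""
    alpha = complex(math.cos(theta / 2))
    beta = cmath.exp(1j * phi) * math.sin(theta / 2)
    return alpha, beta


def measurement_probabilities(alpha: complex, beta: complex) -> tuple[float, float]:
    """Probability of measuring 0 and of measuring 1."""
    return abs(alpha) ** 2, abs(beta) ** 2


def sample_anticorrelated_pair(rng: random.Random) -> str:
    """Sample one joint measurement of the state (|01> + |10>) / sqrt(2)."""
    amplitudes = {"01": 1 / math.sqrt(2), "10": 1 / math.sqrt(2)}
    outcomes = list(amplitudes)
    weights = [abs(a) ** 2 for a in amplitudes.values()]
    return rng.choices(outcomes, weights=weights, k=1)[0]


def amplitudes_needed(n_qubits: int) -> int:
    """Number of complex amplitudes describing a general n-qubit state."""
    return 2 ** n_qubits


if __name__ == "__main__":
    # A qubit on the equator of the Bloch sphere: equal chance of 0 and 1.
    p0, p1 = measurement_probabilities(*bloch_to_amplitudes(math.pi / 2, 0.0))
    print(f"P(0) = {p0:.3f}, P(1) = {p1:.3f}")

    rng = random.Random(0)
    print([sample_anticorrelated_pair(rng) for _ in range(5)])

    print(len(str(amplitudes_needed(1000))), "decimal digits in 2**1000")
```

Every sampled pair has opposite bits, which is the "guaranteed opposite" behaviour described above, and 2**1000 has 302 decimal digits, in line with the figure of roughly 10³⁰¹.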

The term ‘qubit’ is used to represent a mathematical unit of quantum information in the same way that a bit represents the units 1 or 0 in binary. As described above, binary works with transistor technology because transistors themselves have only two states: on or off. Whilst binary can be thought of as very practical and evident, qubits are very abstract. We think of binary as representing a simple switch in an electronic circuit, but what would a qubit represent? Qubits can be thought of as representing some sort of subatomic particle, one so small that it is subject to the strange effects of quantum mechanics. As you can imagine, dealing with a particle at this scale is an extreme challenge for scientists and engineers, with near-perfect conditions being required to operate the machine. One example of how a quantum computer can work is the ion trap, which involves holding ions in a trap and changing their state using various laser pulses 31, very different from the simple task of controlling the flow of electrons on a microchip. Other ways of creating and controlling quantum states boil down to controlling and manipulating single electron spins 32. This is no easy task and is the reason current quantum computers are huge, hulking machines. In an interesting mirroring of history, today’s quantum computers are comparable in size to the mid-20th century classical computers that used vacuum tubes. In the 1950s transistor technology was in its infancy, and it took only a few decades for silicon microchips to replace vacuum tube technology. The field of quantum computers is likely to see the same rate of advancement.

Part II – Impact of Computers:

As mentioned in the introduction, computers have touched almost everything in our lives. It is almost impossible to find a field that hasn’t been changed, if not revolutionised, by the introduction of the modern computer. With quantum computers offering a completely different and potentially more powerful way of functioning than classical computers, the possibility of affordable, easy-to-use quantum computers is exciting and perhaps, like the classical computers before them, they will be revolutionary. This part of the essay will explore various areas in society which could be affected by quantum computers, looking at how each area has been modernised by the classical computer, what quantum computers will do to change it and whether that change will be meaningful.

Medicine and Drugs:

There is no doubt that the invention of the computer has forever changed the field of medicine. Simple things, such as our medical files being easily accessible to the medical profession or seamless communication between doctors and patients, have had a massive impact, not to mention more complex things such as robotic surgery or simulating new drugs instead of testing on animals. Computers play a vital role in modern medicine and have changed the field for the better, leading us to healthier lives. But the medical world is not perfect, and there are still problems which remain unsolvable by classical computers. An example of this is optimisation: trying to solve a problem in the most effective way by balancing several variables to obtain the most efficient solution. This is a problem often found in radiotherapy, a common treatment for cancer. Before a patient undergoes radiotherapy, a doctor must carefully consider several factors, such as the dosage of radiation, the level of intensity and the exact point where the dosage will be given, bearing in mind the need to minimise the patient’s exposure to radiation. Advanced computer software is available to calculate the appropriate dosage, but with so many parameters the software often falls short of calculating the ideal dosage.

This could be a problem that quantum computers can solve, using an algorithm to find the optimal radiation dose to destroy a patient’s cancerous cells whilst minimising the radiation they receive. The Roswell Park Cancer Institute (a leader in cutting-edge radiotherapy treatments based in Buffalo, New York in the US) has carried out a successful study with D-Wave, a quantum computer company, into the use of quantum computers in its radiotherapy treatments 33. The findings suggest that quantum computers have the potential to make treating patients far more efficient and safer, a result that has huge personal consequences for the cancer patient and ultimately means cost savings for the National Health Service and the economy.

When it comes to developing new medicines, simulation is becoming increasingly important. It allows scientists to conduct experiments at a reduced cost and in a fraction of the time. Simulating molecules requires an extreme amount of computing power, even for the world’s most powerful supercomputers, which can only simulate molecules with a few hundred atoms. Quantum computers are already being put to the task, and as of 13th September 2017 beryllium hydride (BeH₂) became the largest molecule ever simulated on a quantum computer. Whilst this is no more than what a classical computer has been able to do, it shows promise for the use of quantum computing in the development of new chemicals and drugs 34. Marco De Vivo, a theoretical chemist at the Italian Institute of Technology in Genoa, described it as ‘like the first day we see a plane flying, and we want to go to the moon’.

The impact which classical computers have had on modern medicine and drug research is immeasurable. Almost every area of these two fields has been changed for the better; however, there are still gaps, still things which cannot be done by even the most powerful computers. My research has led me to believe that quantum computers are going to be able to fill these holes, with them already proving to be useful in optimising radiotherapy treatment. I do not believe, however, that quantum computers are going to be as revolutionary as classical computers in the medical field. Classical computers have changed the medical field mostly by providing easily accessible information, a job which quantum computers cannot do much better than their counterparts. I do believe that in drug research quantum computers are going to be revolutionary, allowing us to discover cures for diseases that were thought to be incurable by running extremely accurate and complex simulations of new drugs, reducing the time it takes to find new cures and vaccines.

Encryption:

There is a sinister side to quantum computers that requires serious thought: the threat to encryption. Encryption is often something that we take for granted, blindly assuming that our personal information stored on our computers and with companies we trust is safe against would-be hackers. More of our information is stored online than ever before. Websites which we have been using for years, such as Facebook, Amazon and Google, have built up an in-depth profile of us, information that can be used against us to our detriment. Recently, major institutions such as the NHS, Yahoo, Lloyds Bank and the US Democratic Party all experienced cyber-attacks, highlighting the critical importance of safeguarding personal information stored on computers.

Both governments and companies are focused on finding more secure methods to protect information and are now dealing with the spectre of the quantum computer. The power of the quantum computer may enable hackers to use advanced algorithms to break our modern encryption infrastructure.

One of the most common ways of encrypting data is by using the RSA cryptosystem. First described in 1977 by Ron Rivest, Adi Shamir and Leonard Adleman of the Massachusetts Institute of Technology, the cryptosystem involves using a private and a public key. The use of two different keys in this way is known as ‘asymmetrical encryption’, where the two keys are different yet mathematically linked. The public key (which can be used by anyone) is used to encrypt data and the private key is used to decrypt it. It can be thought of as a chest with two parts: one part can only be opened with the public key, allowing things to be placed into it, and the other part can only be opened with the private key, allowing the contents to be retrieved. RSA is also used with digital certificates, which can verify the owner of a public key. What makes RSA so strong is the extreme difficulty of factoring (finding the numbers which multiply together to make another number) a number which has been made by multiplying two large prime numbers. The longer the key, the stronger the encryption. 1024 and 2048-bit keys are commonly used, but there is a push to have 2048 as the standard to achieve an exponential increase in security 35. Digicert.com did the maths on how long it would take to crack a 2048-bit RSA key 36 using a 2.2 GHz AMD Opteron processor with 2GB RAM, and according to their calculations “it would take 4,294,967,296 x 1.5 million years to break a DigiCert 2048-bit SSL certificate. Or, in other words, a little over 6.4 quadrillion years”. Granted, this is last-generation hardware, but even using top-of-the-line consumer hardware today the cracking time would not drastically change.
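The mechanics of RSA can be seen in miniature with deliberately tiny primes. The following is a toy sketch only: the primes 61 and 53, the exponent 17 and all function names are illustrative choices, there is no padding, and keys this small offer no security. It assumes Python 3.8 or later for the modular inverse via `pow`.

```python
from math import gcd


def make_toy_keys(p: int, q: int, e: int) -> tuple[tuple[int, int], tuple[int, int]]:
    """Build a (public, private) RSA key pair from two primes and a public exponent."""
    n = p * q
    phi = (p - 1) * (q - 1)
    if gcd(e, phi) != 1:
        raise ValueError("e must share no factor with (p - 1) * (q - 1)")
    d = pow(e, -1, phi)  # modular inverse of e (Python 3.8+)
    return (n, e), (n, d)


def encrypt(message: int, public_key: tuple[int, int]) -> int:
    n, e = public_key
    return pow(message, e, n)


def decrypt(ciphertext: int, private_key: tuple[int, int]) -> int:
    n, d = private_key
    return pow(ciphertext, d, n)


def factor_by_trial_division(n: int) -> tuple[int, int]:
    """Recover the two prime factors of n by brute force (feasible only for tiny n)."""
    candidate = 2
    while candidate * candidate <= n:
        if n % candidate == 0:
            return candidate, n // candidate
        candidate += 1
    raise ValueError("n has no non-trivial factors")


if __name__ == "__main__":
    public, private = make_toy_keys(p=61, q=53, e=17)
    secret = 65
    ciphertext = encrypt(secret, public)
    print("public key:", public, "ciphertext:", ciphertext)
    print("decrypted:", decrypt(ciphertext, private))
    # An attacker who can factor n can rebuild the private key.
    print("factors of n:", factor_by_trial_division(public[0]))
```

With a four-digit modulus the factoring step is instant; the security of real RSA rests on this step becoming infeasible when the modulus is 2048 bits long.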

Whereas RSA was described as asymmetrical encryption, there is another form of encryption known as ‘symmetric encryption’. This encryption system uses only one ‘key’, which can be anything from a number to a string of random letters. This single key is used by both parties exchanging data to encrypt and decrypt it 37. Advanced Encryption Standard (AES) is a very popular type of symmetric encryption based on the Rijndael algorithm, created by two Belgian cryptographers, Joan Daemen and Vincent Rijmen.38 As of today, these two encryption systems are generally secure and it is highly unlikely that someone would be able to crack them but, given the pace of development in quantum computing, one must ask how these encryption systems would stand up against a quantum computer.

The two different types of encryption each have their own weakness against quantum computers. First, asymmetric encryption. This type of encryption is extremely weak against a quantum algorithm known as Shor’s Algorithm. This algorithm is used to find the prime factors of an integer at incredible speeds 39, perfect for breaking down an RSA key which, as stated earlier, is virtually impervious to attempts by classical computers because it is virtually impossible for a classical computer to factorise the product of two huge prime numbers. Symmetric encryption is also weakened by a quantum algorithm, known as Grover’s Algorithm. This algorithm can provide a quadratic speed-up in terms of how many searches are needed to find the correct solution. It was considered against a simple 64-bit key and, according to betanews.com (assuming the classical computer and quantum computer take the same time to test each key), if it would take “a classical computer one year to crack 64-bit encryption, it would take a quantum computer 7.3 millisecond”. 40
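The quoted figure follows from the quadratic speed-up alone, as a rough calculation shows. The sketch below assumes that a classical search tries all 2⁶⁴ keys in one year, that a Grover-style search needs about the square root of that many steps (constant factors ignored), and that each step takes the same time on both machines. The function names are hypothetical.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600


def classical_trials(key_bits: int) -> int:
    """Worst-case number of keys a brute-force search must test."""
    return 2 ** key_bits


def grover_steps(key_bits: int) -> int:
    """Approximate number of Grover iterations: the square root of the key space."""
    return math.isqrt(2 ** key_bits)


def effective_security_bits(key_bits: int) -> int:
    """Key length that gives equivalent classical effort under a quadratic speed-up."""
    return key_bits // 2


# Assume the classical machine needs exactly one year for a 64-bit key.
seconds_per_trial = SECONDS_PER_YEAR / classical_trials(64)
quantum_seconds = grover_steps(64) * seconds_per_trial

if __name__ == "__main__":
    print(f"Grover steps for a 64-bit key: {grover_steps(64):,}")
    print(f"Quantum search time: {quantum_seconds * 1000:.2f} ms")
    for bits in (128, 256):
        print(f"{bits}-bit key behaves like a {effective_security_bits(bits)}-bit key")
```

Under these assumptions the one-year search shrinks to a little over 7 milliseconds, consistent with the quoted figure. The same square-root effect means a 128-bit key offers only the protection of a 64-bit key, which is why doubling the key length is the usual countermeasure for symmetric ciphers.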

With advanced algorithms, quantum computers could in principle break our most secure cryptosystems. However, quantum computers aren’t at that stage yet, and until there is a major quantum computing breakthrough our encryption systems will thankfully not be broken anytime soon. This at least gives us some time to ‘upgrade’ the encryption algorithms. For the AES algorithm, one way of doing this is simply to increase the key size. AES keys are commonly 128 bits long, and these are effectively impossible to crack even with our most powerful supercomputers. Upgrading to 256-bit keys would provide an exponential increase in the number of possible keys. 41 To make RSA keys ‘quantum proof’, the solution, again, would be to make the keys longer. A 1.25×10¹³-bit key (on the order of a terabyte) is estimated to be resistant to a quantum computer running Shor’s Algorithm, according to a paper on the Cryptography ePrint Archive. Whilst a terabyte-sized key would save RSA from being stamped out by quantum computers, it would by no means be easy to implement: it would take days to encrypt and decrypt a message using a terabyte key. Even this drastically large key is “extremely precarious”, according to Scott Aaronson, the director of the Quantum Information Centre at the University of Texas, and the new sized keys would be “vulnerable to even a modest improvement in algorithms or hardware, or a determined and well-funded-enough adversary.” 42

Wired magazine described quantum computing as ‘the next big security risk’ 43 for good reason. Quantum computers are finally moving out of laboratories, and nations around the world are beginning to invest in research and development of quantum computers for their own use. If a rogue state were able to create an early quantum computer, it would have the capability of easily hacking into and obtaining state secrets. Just as the Y2K bug was expected to cause havoc on computing systems across the world, experts are calling the countdown to quantum ‘Y2Q’ 44.

The introduction of quantum computers will without doubt force a change in the way we encrypt our files. Quantum computers have the potential to be a serious threat to the security systems of the world, and it is only a matter of time before governments around the world create working versions. The huge impact which quantum computers will have on encryption will require a response from cryptographers to defend our current systems before it is too late. The problem discussed above of very long RSA keys being slow to use would likely not trouble quantum computers, as they will have a significant speed boost. While the threat remains remote, industry and governments should remain vigilant and strengthen encryption systems while there is still time. Quantum computers will not only cause a revolution in cryptography, they will force it.

Space Travel:

There is no doubt that computers are, and have been, an essential part of space travel and space exploration. Whilst the computers which sent man to the moon in the 1960s and 1970s were less powerful than today’s smartphones, in their day those computers facilitated and drove NASA’s space program. The Apollo Guidance Computer was one of the first embedded computer systems and allowed the astronauts inside the spacecraft to control it using basic commands built from ‘nouns’ and ‘verbs’ 45. Without the computer, space travel would be a fantasy and the world would be a very different place. Returning to the present day, space exploration is now being pursued in the private sector, with Elon Musk’s SpaceX successfully launching its Falcon Heavy rocket in February 2018, a rocket which ‘can lift more than twice the payload of the next closest operational vehicle, at one-third the cost’ 46.

Computing and space exploration go side by side, and with a revolutionary new rocket it would only make sense for a revolutionary new computing system to accompany it. For space travel, quantum computers would likely be used to solve optimisation problems, and NASA wants to use them to help with automated planning and scheduling 47. This involves using artificial intelligence to aid in engineering project management, something NASA would likely use when planning missions to other planets such as Mars. SpaceX already has missions to Mars scheduled to begin in 2022, and with public interest in these missions piqued there can be little doubt that private space travel could play a huge part in driving the computer industry, let alone tourism!

Quantum computers would also likely be used for optimisation tasks such as sending robots to another planet. This type of endeavour would require the careful balancing of many variables, including the lack of real-time communication between mission control on Earth and robots millions of miles away. Quantum computers would be used before the mission to forecast what could happen during it and to plan how the autonomous robots would deal with it. 48

It is looking more likely that a new, more privatised space race is about to erupt. In the previous one, a new emerging technology (the computer) was pivotal to the success of all space operations. Given a few years, the quantum computer is going to be a major asset for space agencies competing in this modern race, allowing more optimised and carefully calculated space travel and a safer journey. The quantum computer is perhaps going to be as revolutionary and as essential to this space race as the classical one was back in the 1960s.

Finance:

The financial industry across the world has been forever transformed by the invention of the classical computer. Almost every financial operation is run by computers, from simple communication links between financial offices to trading on global stock exchanges, financial modelling of economies, programmed transactions to monitor a company’s financial operations and programs that track complex financial operations and transactions easily.15 Although the financial sector is already dominated and run fairly well by computers, there is room for the introduction of quantum computers. Optimisation is what quantum computers are particularly good at, and many financial operations demand that facility. Andrew Fursman is the CEO and cofounder of 1QBit, a company which specialises in building software for quantum computers. According to Singularity Hub, Fursman considered the possibility of creating a perfectly optimised portfolio, saying: “Consider building a portfolio out of all the stocks in the S&P 500. Given expected risk and return at various points in time, your choice is to include a stock, or not. The sheer number of possible portfolio combinations over time is mind boggling. In fact, the possibilities dwarf the number of atoms in the observable universe. To date, portfolio theory has necessarily cut corners and depended on approximations. But what if you could, in fact, get precise? Quantum computers will be able to solve problems like this in a finite amount of time, whereas traditional computers would take pretty much forever. The work is already underway to make this possible” 49.
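The scale Fursman describes can be checked with a rough count. If each of 500 stocks is either included or excluded, there are 2⁵⁰⁰ possible portfolios at a single point in time. The sketch below compares that with the estimate of 10⁸² atoms used earlier in this essay and shows the brute-force approach on a tiny example; the three-stock returns, risks and risk limit are invented purely for illustration.

```python
from itertools import product

ATOMS_IN_UNIVERSE = 10 ** 82  # estimate quoted earlier in the essay


def portfolio_count(n_stocks: int) -> int:
    """Number of include/exclude combinations for n stocks."""
    return 2 ** n_stocks


def best_portfolio(returns: list[float], risks: list[float],
                   risk_limit: float) -> tuple[tuple[int, ...], float]:
    """Exhaustively search every include/exclude choice within a total risk limit."""
    best_choice, best_return = tuple([0] * len(returns)), 0.0
    for choice in product((0, 1), repeat=len(returns)):
        total_risk = sum(r for r, picked in zip(risks, choice) if picked)
        total_return = sum(r for r, picked in zip(returns, choice) if picked)
        if total_risk <= risk_limit and total_return > best_return:
            best_choice, best_return = choice, total_return
    return best_choice, best_return


if __name__ == "__main__":
    count = portfolio_count(500)
    print(f"2**500 has {len(str(count))} decimal digits")
    print("More portfolios than atoms:", count > ATOMS_IN_UNIVERSE)
    # Hypothetical three-stock example: expected returns, risks and a risk limit.
    print(best_portfolio([5.0, 3.0, 4.0], [4.0, 1.0, 3.0], risk_limit=4.0))
```

Exhaustive search works for three stocks because there are only eight combinations; each additional stock doubles the work, which is why classical portfolio methods rely on approximations.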

Singularity Hub also highlighted the potential use of quantum computers to make finance ‘more scientific’ 50. The problem with our modern global markets is how unpredictable they are. It is impossible to predict incoming highs and lows with any great certainty, and therefore impossible to see where the best place is to invest your savings. The article cites Marcos Lopez de Prado, a senior managing director at Guggenheim Partners and a research fellow at Lawrence Berkeley National Laboratory Computational Research Division, who described two big problems with financial research at the Exponential Finance Summit 51. Both problems stem from how we model finance. The first is that we lack controlled settings where experiments can be run; instead we must observe real-world events and build models on them, even though these events are not reproducible. The second is that the financial models we do create are often far too simple to be realistic: the millions of variables which need to be tracked are extremely challenging even for a supercomputer. This shouldn’t be a problem for a quantum computer, which would be able to simulate a financial model accurately while considering millions of variables simultaneously. Simulating these models with almost lifelike accuracy would allow investors to run a potential portfolio through millions of different financial scenarios to see whether the risk they are about to take will ultimately be worth it. It could also lead to a reduction in economic peaks and troughs, giving governments and individuals a better chance of planning for and protecting themselves against swings in the economy.

It would likely be impossible for quantum computers to be more revolutionary than classical computers in finance. Computers already dominate this field and can handle most tasks given to them easily. As in medicine, there are some gaps which need to be filled and, again as in medicine, these gaps involve simulation and optimisation. Filling them will no doubt have a positive impact, hopefully resulting in a more stable economy with few or no market crashes. Quantum computers are going to play an essential role in refining the financial sector, bringing massive improvement but not quite causing the massive change that the introduction of the classical computer did.

Part III – Conclusion: Are quantum computers revolutionary?

The classical computer is arguably one of the greatest things to come out of the 20th Century and it has forever changed humanity. Almost every corner of modern society is touched by the computer, making all our lives easier, healthier, and more enjoyable. It can be used as a tool for learning and discovering, as a canvas for creating or as a toy for playing. The classical computer has not slowed down but has continued to grow with us, getting smaller yet ever more powerful and efficient. The pace of our current microchip technology is simply not sustainable, and we may be looking at the last 20 years of exponential computer growth. And then what? Quantum computing could be the answer, or at least part of the solution. Our classical computer infrastructure is embedded in our way of life, and one would think that a new way of computing would need to work alongside the old, at least initially, until a transition plan can be worked out.

Will quantum computers become the next logical step in computing and replace their inferior classical counterparts? This final part of the essay will analyse what has been discussed above and draw a conclusion as to whether quantum computers will be a truly revolutionary system.

What has been established from the start of this essay is that quantum computers work in a fundamentally different way to classical computers: no more bits, now qubits. Qubits are computational units built on atoms or subatomic particles, where they can take advantage of quantum mechanics. This allows a qubit to be in a superposition, meaning it can hold a combination of the values 0 and 1 at the same time 52. The ability to act in a superposition allows a quantum computer to work on a vast number of possibilities at once, whereas a classical computer works through them one at a time. Tasks which were once unsolvable by classical computers should, theoretically, be solved easily by quantum computers, and herein lies the potential revolution in the computer industry. The examples listed in Part II, such as optimising medical treatments for patients and creating advanced financial simulations, are out of reach of even our most powerful supercomputers but should be manageable for a quantum system. Quantum computers could herald a seismic change in many areas of life, bringing massive benefits and very real risk reduction and further enhancing human life.
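The idea of superposition can be made a little more concrete with the standard textbook state-vector picture. The following is a minimal sketch rather than a description of how any real machine is programmed: a single qubit is written as a pair of amplitudes, a Hadamard gate places it in an equal superposition, and the measurement probabilities are the squared magnitudes of the amplitudes. The function names are hypothetical and chosen only for this illustration.

```python
import math


def hadamard(state):
    """Apply the Hadamard gate to a one-qubit state (amp_0, amp_1)."""
    amp_0, amp_1 = state
    scale = 1 / math.sqrt(2)
    return (scale * (amp_0 + amp_1), scale * (amp_0 - amp_1))


def probabilities(state):
    """Chance of measuring 0 and 1: squared magnitude of each amplitude."""
    return tuple(abs(amplitude) ** 2 for amplitude in state)


def amplitudes_needed(num_qubits):
    """A classical description of n qubits needs 2**n amplitudes."""
    return 2 ** num_qubits


zero = (1.0, 0.0)             # a qubit that is definitely 0
superposed = hadamard(zero)   # an equal mix of 0 and 1

print("Probabilities of reading 0 and 1:", probabilities(superposed))
print("Amplitudes to describe 50 qubits:", amplitudes_needed(50))
```

The second function hints at why simulating quantum systems classically becomes so expensive: every added qubit doubles the number of amplitudes a classical machine would have to track.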

Quantum computing is only in its infancy, and the examples above are ones which have been tested using early quantum computers or theorised by experts. If quantum computers were as readily available as classical computers, the only limit to what they could achieve would be the imagination. They could help cure diseases, create true artificial intelligence, discover the origins of the universe, predict the weather and create a more automated future.

Whilst some of the concepts of what quantum computers could do are theoretical, the machines themselves are real and available for purchase. D-Wave is the first manufacturer of quantum computers and has sold them to organisations such as Lockheed Martin, Oak Ridge National Laboratory and The Quantum Artificial Intelligence Lab, a collaboration between Google, NASA and the Universities Space Research Association 53. What is extremely impressive about D-Wave is that it continues to release new, more powerful models and has managed to double the number of qubits in its quantum computers every two years 54, perhaps starting a new era of Moore’s Law for quantum computers. Its most recent quantum computer, the D-Wave 2000Q, features 2000 qubits and was sold to the cybersecurity firm Temporal Defence Systems for approximately $15 million. The 2000Q is claimed to outperform a classical server by a factor of between 1,000 and 10,000 55. With a footprint of 3 x 2 metres and a height of 3 metres, the D-Wave requires an ‘extreme, isolated environment’ with a ‘refrigerator and layers of shielding to create an internal vacuum with a temperature close to absolute zero (-273°C or 0K) isolated from external magnetic fields, vibration, and RF signals of any form’ 56. Quite a precise piece of equipment and clearly not meant for the everyday user! The 2000Q is cooled with liquid helium refrigerant in a closed-loop system, allowing it to operate at a temperature 180 times colder than interstellar space 57. Even more impressive is how little power the machine draws: only 25kW from the wall 58. Most of this power goes to the cooling system and the front-end servers which control the computer. Considering what this computer aims to accomplish, its power consumption is remarkably low compared with classical supercomputers; D-Wave says that a traditional supercomputer draws over 2500kW.
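Two of the figures quoted above lend themselves to a quick calculation. The sketch below uses only the numbers given in this essay (25kW, 2500kW, 2000 qubits and a two-year doubling period). The projection function is a purely hypothetical extrapolation that assumes the doubling trend simply continues, which nothing guarantees.

```python
DWAVE_POWER_KW = 25            # quoted draw of the D-Wave 2000Q
SUPERCOMPUTER_POWER_KW = 2500  # D-Wave's lower bound for a supercomputer


def power_ratio(supercomputer_kw, quantum_kw):
    """How many times more power the supercomputer draws."""
    return supercomputer_kw / quantum_kw


def projected_qubits(start_qubits, years, doubling_period_years=2):
    """Hypothetical qubit count if doubling every period continued."""
    completed_doublings = years // doubling_period_years
    return start_qubits * 2 ** completed_doublings


ratio = power_ratio(SUPERCOMPUTER_POWER_KW, DWAVE_POWER_KW)
print("Supercomputer draws at least", ratio, "times more power")

# Assumption: the trend holds unchanged for a further ten years.
print("Qubits after ten more years:", projected_qubits(2000, 10))
```

Because D-Wave quotes "over 2500kW", the ratio is a lower bound on the gap in power draw, and it says nothing about how much useful work each machine does per kilowatt.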

D-Wave is an industry leader in quantum computers and is creating some of the most advanced systems we have ever seen. There are, however, still some problems with its machines. The first is price: $15 million may not be much for a giant organisation like Google or NASA, but a small independent developer is very unlikely to be able to obtain a quantum computer and experiment with it. Being commercially available is something which greatly aided the development of the classical computer. Even the internet has grown because of its accessibility and affordable entry price. The second is how they are used: the quantum computers currently available are predominantly used to solve optimisation problems. This is clearly shown by the research done for this essay, as the areas investigated in Part II mostly suggested that quantum computers will make their impact by solving optimisation problems such as optimising financial portfolios and cancer treatment. Optimisation is by no means a small thing and will certainly have an impact by improving efficiency, but it is not something that will flip the industry on its head.

As said previously, quantum computers in their current state are extremely expensive and only the highest-earning tech companies can afford to own one. Programmers and scientists have therefore had only limited exposure to these machines, so much of what is said about what quantum computers can do is theory and guesswork. The organisations which can afford these machines and have become early adopters of this exciting technology have all said promising things about the future which quantum computers could bring. Hartmut Neven, Director of Engineering at Google, said ‘We believe quantum computing may help solve some of the most challenging computer science problems, particularly in machine learning’. Lockheed Martin’s chief technical officer Ray Johnson said ‘this is a revolution not unlike the early days of computing. It is a transformation in the way computers are thought about.’ Mark Anderson of the Laboratory’s Weapons Physics Directorate said ‘As conventional computers reach their limits in terms of scaling and performance per watt, we need to investigate new technologies to support our mission. Researching and evaluating quantum annealing as the basis for new approaches to address intractable problems is an essential and powerful step and will enable a new generation of forward thinkers to influence its evolution in a direction most beneficial to the nation’.

I personally believe that quantum computers will without a doubt cause a computing revolution, but not in the way the classical computer did. In 25 years’ time I highly doubt that a quantum computer will be found in every modern home and every place of work in the same way that the classical computer is now. Instead, I imagine that the quantum computer will be used more as a tool for researchers, physicists, biologists, scientists and engineers to solve problems that seem unsolvable in this day and age. This is no small step, as some of humankind’s greatest mysteries are currently confined to the laboratory, and understandably so as they are extremely complex: space, black holes, the origins of the universe, quantum mechanics or even the human body. These mysteries, whilst fascinating to people generally, require a scientific mind and, now more than ever, scientific equipment to solve. Quantum computers can help answer these questions, and in doing so they will not only be revolutionary devices but will also create a new age of prosperity and scientific understanding.

Bibliography:

2: https://www.computerhope.com/issues/ch000984.htm

3: https://www.pcmag.com/encyclopedia/term/53649/vacuum-tube

4: https://www.intel.co.uk/content/www/uk/en/history/museum-story-of-intel-4004.html

5: https://www.youtube.com/watch?v=WhNyURBiJcU

7: https://en.wikipedia.org/wiki/Intel_4004

8: https://en.wikipedia.org/wiki/Transistor_count

9: https://www.amd.com/en/products/cpu/amd-ryzen-7-1800x

11: ibid 1

12: https://www.anandtech.com/show/12271/intel-mentions-10nm-briefly

14: https://spectrum.ieee.org/semiconductors/devices/the-tunneling-transistor

15: ibid 14

16: https://www.doc.ic.ac.uk/~nd/surprise_97/journal/vol4/spb3/

17: http://www.physics.org/article-questions.asp?id=124

18: Quantum Computation and Quantum Information by Michael A. Nielsen & Isaac L. Chuang

19: ibid 18

20: ibid 18

21: ibid 18

22: ibid 18

23: ibid 18

25: https://plus.maths.org/content/how-does-quantum-commuting-work

26: ibid 25

27: https://www.thoughtco.com/what-is-quantum-entanglement-2699355

28: https://www.cnet.com/news/physicists-prove-einsteins-spooky-quantum-entanglement/

29: ibid 25

30: ibid 25

31: https://www.universetoday.com/36302/atoms-in-the-universe/

32: http://www.explainthatstuff.com/quantum-computing.html#

33: http://semiengineering.com/introduction-to-quantum-computing/

34: https://atelier.bnpparibas/en/health/article/quantum-computing-set-revolutionise-health-sector

35: http://www.sciencemag.org/news/2017/09/quantum-computer-simulates-largest-molecule-yet-sparking-hope-future-drug-discoveries

36: http://searchsecurity.techtarget.com/definition/RSA

37: https://www.digicert.com/TimeTravel/math.htm

38: https://support.microsoft.com/en-us/help/246071/description-of-symmetric-and-asymmetric-encryption

39: http://searchsecurity.techtarget.com/definition/Advanced-Encryption-Standard

40: https://en.wikipedia.org/wiki/Shor%27s_algorithm

41: https://betanews.com/2017/10/13/current-encryption-vs-quantum-computers/

42: ibid 40

43: https://www.quantamagazine.org/why-quantum-computers-might-not-break-cryptography-20170515/

44: https://www.wired.com/story/quantum-computing-is-the-next-big-security-risk/

45: https://www.newyorker.com/tech/elements/hacking-cryptography-and-the-countdown-to-quantum-computing

46: http://www.computerweekly.com/feature/Apollo-11-The-computers-that-put-man-on-the-moon

47: http://www.spacex.com/falcon-heavy

48: https://gigaom.com/2015/02/13/how-nasa-uses-quantum-computing-for-space-travel-and-robotics/

51: https://singularityhub.com/2016/06/13/how-quantum-computing-can-make-finance-more-scientific/

52: ibid 50

53: https://computer.howstuffworks.com/quantum-computer1.htm

54: https://dwavefederal.com/customers/

55: https://en.wikipedia.org/wiki/D-Wave_Systems

56: https://www.wired.co.uk/article/d-wave-2000q-quantum-computer

57: https://dwavefederal.com/app/uploads/2016/12/D-Wave-2000Q-Tech-Collateral_0117F2.pdf

58: ibid 56

59: ibid 56
