Concurrent Direct Assessment of Foundation Skills for General Education

Info: 8300 words (33 pages) Dissertation

Published: 2nd Feb 2022

Abstract

Purpose

The foundation skills required by employers that will enable graduates to integrate and devise promising solutions for the challenges faced by knowledge societies are life skills (communication skills, teamwork and leadership skills, language skills in reading and writing, information literacy), transferable skills (such as problem-solving including critical thinking, creativity, quantitative reasoning), and technology skills (search for knowledge and build upon it). Foundation skills, however, are recognized as difficult to both teach and assess. We describe a performance assessment method to assess and measure these skills in a uniquely concurrent way – the General Education Foundation Skills Assessment (GEFSA).

Methodology

The GEFSA framework comprises a scenario/case describing an unresolved contemporary issue, prompts to engage student groups in on-line discussions, and a task-specific analytic rubric to concurrently assess the extent to which students attain the targeted foundation skills. The method was applied over three semesters during 2016 and 2017. The participants were non-native English-speaking students in a General Education program at a university in the United Arab Emirates.

Findings

Results obtained from the rubric for each foundation skill were analyzed and interpreted to 1) ensure robustness of method and tool usability and reliability, 2) provide insight into, and commentary on, the respective skill attainment levels, and 3) assist in establishing realistic target ranges for General Education student skill attainment. The results showed that the method is valid and provides valuable data for curriculum development.

Originality/Value

This is the first method in the published literature that directly and simultaneously assesses the foundation skills of General Education students. It provides educators with valuable data on students’ skill levels and also serves as a teaching tool for those skills.

Keywords: 21st century skills; measurement; performance task; rubric; transferable skills; soft skills

Introduction

Proficiency in foundation skills is critical for success in 21st century knowledge economies. The foundation skills required of fresh graduates by employers are life skills (skills that assist in developing potential solutions to relevant problems, such as communication skills, teamwork and leadership skills, language skills in reading and writing, information literacy), transferable skills (skills that facilitate application of existing knowledge to new situations, such as problem-solving, critical thinking, creativity, quantitative reasoning), and technology skills (such as the ability to search for knowledge and information, comprehend, analyze and discuss advanced use of technology and build upon it) (United Nations Development Programme (UNDP), 2014).

Employers around the world value these skills in entry-level employees as much as or more than specific disciplinary knowledge, which can rapidly change (Association of American Colleges and Universities (AAC&U), 2013; UNESCO, 2012). In addition, the majority of quality assurance organizations at the professional programmatic level and institutional accreditation bodies at the regional or national levels now require programs to show evidence of student attainment of foundation skills outcomes. Examples include, but are not limited to: ABET (formerly known as the Accreditation Board for Engineering and Technology), the Commission for Academic Accreditation in the United Arab Emirates, the Middle States Commission on Higher Education, the Qualifications Framework Emirates and the Australian Qualifications Framework.

To increase the success of fresh graduates becoming the future knowledge workers in knowledge economy workplaces, tertiary education must include ample development of foundation skills. These skills are consistent with the skillset considered essential by the Organization for Economic Cooperation and Development (OECD) across all countries for success in living and working, plus employability skills in the information age such as teamwork, problem solving, and self-management (OECD, 2008).

The challenge surrounding the foundation skills is that employers value them, students are underprepared in them, and they are considered difficult to teach and assess (National Academies Press (NAP), 2017; Shuman, Besterfield-Sacre & McGourty, 2005). Thus, there is a need for practical and accurate methods of teaching and assessing these skills. Many measurement instruments evaluate each skill separately from the others. Incongruent measurement tools not designed to complement one another are insufficient for data-driven curriculum decision making (Schoepp, Danaher & Ater Kranov, 2016). This fragmentation can be problematic for accurate and useful assessment of skill attainment because such tools do not provide direct measures of deeper learning that integrates multiple outcomes. The method described in this paper enables precise, concurrent and actionable skill development and assessment to address these challenges.

This paper summarizes the development of the General Education Foundation Skills Assessment (GEFSA) over a two-year project funded by an institutional research incentive, and describes the findings to date in establishing the GEFSA as a robust direct method to demonstrate student attainment of foundation skills for program-level assessment purposes in a General Education environment (i.e. pre-major) at a large public university in the United Arab Emirates (UAE). The paper then proposes an extension to the GEFSA framework which would cover a complete baccalaureate program. Such an extension would allow assessment of students’ skill levels at relevant checkpoints throughout their baccalaureate experience.

Regional Context

The assessment of student learning outcomes is crucial when discussing issues of pedagogy, higher education accountability, and employability of fresh graduates. In short, what have graduates learned at the end of their undergraduate education and how do institutions of higher education know that with reasonable accuracy? This learning outcomes focus is also underway in the Middle East Gulf Region as stakeholders continue to advance the priority of student learning and employability.

Regional governments have recognized the strategic role that a highly skilled and educated workforce can play (UAE, 2010; UAE, 2014). Youth unemployment is a serious issue in the Arab region. The unemployment rate among Arab youth (27%) significantly exceeds the global rate (12.6%) (UNDP, 2014). As costs for education have far outstripped inflation, and as consumers (primarily students and their families) have become more educated and demanding, there have been increasing calls for evidence that what is being promised is being delivered. Regionally, the core mission of higher education is ensuring that students become productive members of society with the requisite skills for gainful employment. However, numerous reports point to a notable misalignment between the knowledge, skills, and attitudes demonstrated by university graduates in the Middle East, and in particular the Gulf region, and those desired by employers (UNDP, 2012; UNDP, 2015; Ernst & Young (EY), 2015).

Of 100 employers surveyed for Perspectives on GCC Youth Employment 2014, only 29% thought that the public educational systems adequately prepare graduates for the workplace (EY, 2014). Yet, in striking contrast, 68% of UAE youth surveyed thought that the educational system prepared them well for entry-level positions. The set of recommendations from this report is daunting, urging governments to “reform national skills and education models… and rethink how education is provided to deliver the ultimate objective of work-ready young adults” (EY, 2014, p.11).

At our public university in the United Arab Emirates we confront the same graduate skills competency and employability issues. Admirably, the UAE government has been proactive in attempts to address future youth employment. In 2010 the UAE government charted the UAE 2021 National vision. This vision to be one of the best countries in the world is underpinned by four pillars, one of which is a Competitive Knowledge Economy (UAE, 2010). Later, the UAE 2021 vision set national performance indicators (UAE, 2014), two of which relate to the knowledge economy and employment of national youth in the private sector to alleviate citizen unemployment. Respectively, the targets for 2021 are a 5% Emiratization rate in the private sector (from 0.65% in 2013), and the share of Emirati knowledge workers in the workforce to increase from 20% in 2013 to 40%.

Our university is a UAE federal institution with campuses in Abu Dhabi and Dubai that primarily serves Emirati nationals in a gender-segregated environment, with most courses conducted in English. With a student population of approximately 9,500 (8,000 female, 1,500 male), nearly all of whom are undergraduate students aged between 18 and 22 with no work experience, the university strives to deliver programs that meet international standards to ensure graduating students are prepared to contribute to and promote the social and economic wellbeing of UAE society and the professions. Related to these ambitions, the university was established in 1998 as an outcomes-based institution with a focus on quality. This quality commitment has been manifested through attainment of a number of international accreditations. In 2008, the university first became accredited by the United States (US)-based Middle States Commission on Higher Education, and since that time has achieved accreditation through the Association to Advance Collegiate Schools of Business, National Council for Accreditation of Teacher Education, and ABET (formerly known as the Accreditation Board for Engineering and Technology). Our university’s commitment to an outcome-based approach to education and continuous improvement through assessment of student learning outcomes was recognized officially by the US-based National Institute for Learning Outcomes Assessment.

Our university’s institution-wide learning outcomes, Zayed University Learning Outcomes (ZULOs), are intended to be used by colleges to assess student attainment of associated outcomes for both continuous improvement and quality assurance reporting purposes and to guide program curriculum development. The six ZULOs are: Information Literacy (IL), Technological Literacy (TL), Critical Thinking and Quantitative Reasoning (CTQR), Global Awareness (GA), Language (LA), and Leadership (LS), as illustrated in Table 1.

Table 1. Zayed University Learning Outcomes (ZULOs)

| Critical Thinking and Quantitative Reasoning (CTQR): Graduates will be able to demonstrate competence in understanding, evaluating, and using both qualitative and quantitative information to explore issues, solve problems, and develop informed opinions. |

| Leadership (LS): Graduates will be able to undertake leadership roles and responsibilities, interacting effectively with others to accomplish shared goals. |

| Technological Literacy (TL): Graduates will be able to effectively understand, use, and evaluate technology both ethically and securely in an evolving global society. |

| Language (LA): Graduates will be able to communicate effectively in English and Modern Standard Arabic, using the academic and professional conventions of these languages appropriately. |

| Global Awareness (GA): Graduates will be able to understand and value their own and other cultures, perceiving and reacting to differences from an informed and socially responsible point of view. |

| Information Literacy (IL): Graduates will be able to find, evaluate and use appropriate information from multiple sources to respond to a variety of needs. |

The University College (UC) is responsible for teaching and assessing student attainment of the ZULOs over the course of a mandatory General Education program and all courses are taught in English by faculty across disciplines. The General Education program at Zayed University offers a foundation education experience to students and prepares them for their future majors and eventual employment. The experience is intended to instill in the students a desire for lifelong learning, foster intellectual curiosity, and engender critical thinking. The General Education program initiates the baccalaureate careers of all ZU students. Students over a duration of three semesters take courses in global studies, English writing, mathematics, information technology and science in addition to life skills and innovation/entrepreneurship courses. Because the ZULOs are institutional learning outcomes, they are necessarily broad which, in turn, makes it challenging for instructors to design learning interventions and assessments for meaningful measurement of ZULO attainment in courses. To meet this challenge, the GEFSA has been designed and implemented as a performance assessment task directly aligned with the ZULOs. A definition of each GEFSA foundation skill A through F and the ZULO alignment is outlined in Table 2.

Table 2. GEFSA Rubric Skills, Definitions and Alignment with ZULOs

| GE Foundation Skill A [ZULO CTQR] Demonstrate competence in understanding and evaluating information (qualitative and/or quantitative) to solve problems and propose solutions. Definition: Students clearly frame the problem(s) raised in the scenario with reasonable accuracy and identify approaches that could address the problem(s). Students recognize relevant stakeholders and their perspectives. |

| GE Foundation Skill B [ZULO LS] Interact within a group to accomplish shared goals. Definition: Students (guided by supplied prompts) work to understand the task, and develop a solution. Students work together to address the problems raised in the task by acknowledging, building on, critiquing and clarifying each other’s ideas to come to consensus to attain a group solution. Students encourage participation and respect of all team members. |

| GE Foundation Skill C [ZULO TL] Understand and evaluate technologies and their use ethically, and where appropriate, securely in an evolving modern society. Definition: Students can understand the use, describe the responsibilities and have an awareness of the ethical issues of technology use in modern society, which may include, but are not limited to: the social and security considerations. |

| GE Foundation Skill D [ZULO LA] Comprehend and communicate using academic and professional language conventions. Definition: Students adopt appropriate reading and writing strategies to communicate effectively. Students communicate clearly, coherently and concisely, with appropriate level of professional diction and tone. Students are able to develop main ideas with sufficient detail and explanation, drawing upon accurate comprehension of information. Students demonstrate accuracy of grammar and mechanics. |

| GE Foundation Skill E [ZULO GA] Examine a global issue, propose solutions, and assess impact locally on individuals, organizations, and society. Definition: Students analyze the local implications of both the problem and possible solutions on individuals, organizations, and society within the UAE. |

| GE Foundation Skill F [ZULO IL] Locate, evaluate and use relevant information to respond to a variety of situational needs. Definition: Students refer to and examine the information and reliability of sources. Students identify what they know and do not know and show an ability to provide additional sources (primary and secondary) to support the discussion and extend their knowledge. |

The GEFSA method

The GEFSA is a performance assessment. Performance assessments are designed to elicit and measure complex thought processes necessary for deep learning by asking participants to solve realistic or authentic problems. Participants in a performance assessment demonstrate evidence of their knowledge and application of it by developing a process or a product. A performance assessment typically has three components: (a) a task that elicits the performance; (b) the performance itself (which is the event or artifact to be assessed); and (c) a criterion-referenced instrument, such as a rubric, to measure the quality of the performance (Johnson, Penny & Gordon, 2009). Accordingly, the GEFSA comprises: (a) a scenario and prompts as an on-line discussion performance task; (b) the student team discussion transcripts as responses; and (c) the GEFSA analytic rubric as the criterion-referenced instrument to measure the quality of the student team performance of foundation skills. To ensure the robustness of the performance assessment, four support instruments were developed: 1) scenario development guidelines, 2) scenario prompts, 3) a student survey tool, and 4) a faculty survey tool.

A scenario describes a current real-world issue that is complex, has many facets and has no clear solution. Credible news sources and academic articles are used as sources. The scenarios are written in accordance with the GEFSA scenario guidelines (Table 3) in order to ensure that each individual scenario elicits the GEFSA skills equally. The scenarios are checked against the guidelines by at least three reviewers and are amended if necessary before approval for use. Sample scenario topics include: obesity and the economy; conflict minerals; clean energy; cybersecurity; energy critical elements; water resources; e-waste; control of the web; privacy online. An example scenario on the topic of Clean Energy is provided in Appendix A.

The GEFSA evolved as an adaptation of an existing performance task assessment, the Computing Professional Skills Assessment (CPSA) (Danaher, Schoepp & Ater Kranov, 2017), which was designed to evaluate professional skills for both course and program assessment purposes in the field of computing. The GEFSA design steps and associated support instruments are outlined in Rhodes, Danaher, Ater Kranov & Isaacson (2016), where survey feedback from students and faculty is also presented. The surveys showed that both students and faculty were convinced that the method contributes to the improvement of foundation skills.

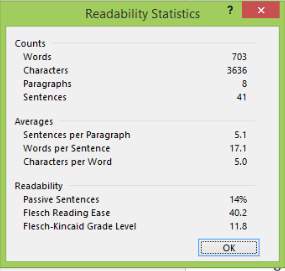

The GEFSA features four aspects that, together, make it unique among existing foundation skills assessments: (1) it is the only direct method in the literature that can be used to both teach and measure the targeted learning outcomes for General Education students; (2) the scenario content has a readability level at or below 12 on the Flesch-Kincaid scale, so that it can be used with students entering tertiary education at varying levels and with varying abilities and backgrounds; (3) it asks students to connect global issues to the local country context (in this case the UAE); and (4) it assists students in moving from the rote learning approach traditionally taught in secondary school in the UAE and many other countries to a more active, problem-solving approach that will enable greater success in increasingly global university settings. In addition, discipline-specific colleges and/or programs can use GEFSA data to inform curricula and facilitate student transition from General Education programs to individual majors.

Table 3. Scenario creation guidelines

| Not focused or dependent on one discipline | The scenario involves more than one discipline. The issue/problem in the scenario should be appropriate to be tackled by an interdisciplinary group at any level in the program. |

| Complexity | The complex and multifaceted scenario has multiple stakeholders including public, private and global groups, and individual constituents. The diversity of stakeholders is representative of a problem with ethical, societal/cultural, economic, environmental, and global concerns. Any solution requires all critical stakeholders to be on board with the solution(s). |

| Real and relevant problem | The scenario has some kind of unresolved problem, tension, disagreement, or competing perspectives on how to address the problem. The problem is not written in a way that intentionally biases readers or plays on their emotions. The problem will be relevant for five to ten years. |

| Context | The scenario must be both local and global. The goal is to show students that the problem presented is a local/regional problem and also an international problem. Local/regional examples and international examples must be given. |

| Quantitative Data | The scenario includes some quantitative data for students to interpret as they tackle the problem. |

| Elicits engagement | The scenario draws in the reader and engages the student group in deep discussions because the problem is complex and multifaceted without an obvious, quick fix solution. |

| References | The scenario has references (around 5) from varied sources such as refereed journal articles, solid news sources, and publications from professional societies. The selection of references is objective and balanced. |

| Appropriate for course use | The scenario is suitably complex for a 14-day online discussion. There should be no pictures or tables. Lists are acceptable. The written text must be no more than 1.5 pages in 12-point font with 1.5 line spacing (600 to 700 words in total, excluding references), with an F-K level not exceeding 12. |
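The length and readability limits in the last guideline lend themselves to a quick automated check. The sketch below is illustrative only: it uses the standard published Flesch-Kincaid grade-level formula, and it assumes the word, sentence and syllable counts have already been obtained from a word processor or readability tool. The function names and the sample counts are hypothetical and are not part of the GEFSA instruments.

```python
def fk_grade(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid grade level computed from raw text counts."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


def meets_course_use_limits(words: int, sentences: int, syllables: int) -> bool:
    """Check the 600 to 700 word range and the F-K ceiling of 12."""
    return 600 <= words <= 700 and fk_grade(words, sentences, syllables) <= 12


# Hypothetical counts for a draft scenario (not taken from the study).
draft = {"words": 650, "sentences": 36, "syllables": 1040}
print(round(fk_grade(**draft), 2))
print(meets_course_use_limits(**draft))
```

A draft that fails the check would be shortened or simplified before being passed to the reviewers; the remaining guidelines in Table 3 still require human judgment.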

The GEFSA task-specific analytic rubric was designed to be used by raters in evaluating the extent to which student groups attained the targeted foundation skills. As an example, one skill from the rubric is shown in Table 4 in a slightly condensed form where definitions of the outcomes and rater comment fields are omitted.

Table 4. GEFSA Rubric for Skill B – Interact within a group to accomplish shared goals

Spring 2016 Pilot Study Process

During Spring semester 2016 the GEFSA was implemented twice for 14-day periods in two General Education courses totaling 47 3rd semester students using scenarios on Clean Energy, and Obesity and the Economy. To ensure participation the activity was a mandatory course requirement and was graded. Students were placed randomly in groups of 5 to 6. The first run of the activity was primarily to familiarize the students with the process because they had not previously experienced discussion boards or problem-solving in teams in a similar manner. This practice intervention, often referred to as scaffolding, is considered essential and is an excellent learning activity as the students get to practice the foundation skills. Students were given instruction and prompts to guide their discussion (see Table 5 below).

Following completion of the task the student transcripts were distributed to each member of the rating team. The rating team participated in a calibration session where the goal was to come to a common understanding of the GEFSA rubric’s individual dimension descriptors and agree on what constitutes evidence of a given descriptor and scale level as represented in the student work. Calibration of rating teams is a critical aspect of ensuring both the reliability of the raters’ scores and accuracy of the rubric to differentiate between outcomes, as the process of calibration typically leads to refinement of the rubric (Holmes & Oakleaf, 2013; University of Hawaii Manoa, 2013). Because the rubric was in its developmental stages, complete rater consensus was not expected. During the calibration process, scores from each of the raters were recorded and the mean was calculated (rounding up was applied if necessary) to generate the overall score for each of the outcomes.

Experts in rubric development and use tend to agree that the level of agreement between raters should be 70% or greater, so this was the target for both the complete instrument and the individual outcomes (Stemler, 2004). Inter-rater reliability was calculated by the simplest of methods: a count of the cases receiving the same ratings divided by the total number of cases.
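The agreement calculation described above can be sketched in a few lines. This is a minimal illustration for two raters, assuming each rater assigns one level per case; the function name and the sample ratings are hypothetical, and the paper does not state how agreement was counted when more than two raters scored a case.

```python
from typing import Sequence


def percent_agreement(rater_a: Sequence[int], rater_b: Sequence[int]) -> float:
    """Share of cases on which two raters assigned the identical level."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of cases")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


# Hypothetical ratings for ten cases (illustrative only).
rater_1 = [2, 1, 2, 3, 0, 2, 1, 2, 2, 1]
rater_2 = [2, 1, 1, 3, 0, 2, 2, 2, 2, 1]

agreement = percent_agreement(rater_1, rater_2)
meets_target = agreement >= 0.70  # the 70% threshold cited from Stemler (2004)
print(f"{agreement:.0%}", meets_target)
```

Simple percent agreement does not correct for agreement that would occur by chance, which is one reason it is described as the simplest of the available methods.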

The discussion board transcripts were analyzed for both student performance and refinement of the rubric. The rating process was conducted as follows: each team member scored the discussions of the groups on the skills making notes on the transcript and the rubric page; then the team compared scores and discussed all differences at length to reach consensus; during the discussion, notes were made on areas for rubric improvement.

Spring 2016 Pilot Findings

The attainment levels on the rubric are 0 – Missing, 1 – Emerging, 2 – Developing and 3 – Practicing. Our rubric was designed with the expectation that students by the end of their General Education studies (3rd semester) would score around 2 or be in the lower end of the range 2 to 3. The results obtained in Spring 2016 reveal lower levels of skill achievement than expected (the ZULO counterpart is given in brackets after each skill letter): A (Critical Thinking and Quantitative Reasoning) – 1.7; B (Leadership) – 1.5; C (Technological Literacy) – 1.1; D (Language) – 2.0; E (Global Awareness) – 1.5; F (Information Literacy) – 1.0.

These results were not necessarily surprising as this was the first time the GEFSA had been used by students and faculty. We see the results as a baseline measure and the scores revealed areas for improvement in student learning, curriculum and teaching practice that need to be addressed. For example, in information literacy the students scored particularly low, so the reasons for this should be investigated and interventions planned and enacted.

The rating team observed that constraints imposed by the first drafts of both the scenario prompts provided to the students and the rubric used by the raters could also be factors contributing to the lower ratings. To determine the necessary interventions, we first investigated the scenario prompts. When a scenario is given to students, prompts are needed to guide them in their work. The prompts are designed to follow typical steps in problem solving and, in particular, to elicit responses that are indicative of performance on all six skills. We noticed a number of misalignments between the prompts and the rubric. The rubric prescribes that students work towards reaching a consensus to identify possible solutions; identify, where possible, any social and security issues; consider possible disadvantages of proposed solutions; and identify the local impacts of the scenario problem on various constituencies. These aspects were not clear in the scenario prompts given to the students in Spring 2016, so the prompts were revised to those shown in Table 5.

Table 5. Discussion Board Prompts

Next, we investigated the rubric. When using the rubric we found that it was difficult for the raters to apply it consistently to students’ posts with confidence. This was due to two factors: (1) attainment levels 1 and 2 shared a common criterion for the performance indicators, making it difficult at times for a rater to decide whether to assign a 1 or a 2, and (2) the wording of the criteria at any or all attainment levels for the respective skills and their performance indicators was sometimes ambiguous, sometimes vague and sometimes insufficient for a rater to confidently assign a rating. To address these issues, specific criteria were written for each of the levels and were reviewed to ensure vertical alignment across rubric outcomes and levels, as well as horizontal alignment as articulated across the levels 0, 1, 2 and 3 for each outcome and performance indicator. This was done for each of the six foundation skills outcomes of the rubric. One skill from the revised rubric is shown in Table 4 above.

Fall 2016 Findings

In Fall 2016, the GEFSA was conducted twice over 14-day periods in two classes totaling 45 3rd semester General Education students with scenarios on E-Waste and Cybersecurity using the same methodology previously described for the Spring 2016 pilot process. As before, the discussion board student transcripts were analyzed for student performance, and also to determine whether the modifications made to student discussion board prompts and to the rubric as described above were effective and/or whether further refinements were needed. We expected results to be around 2 or in the lower end of the range 2 to 3 – essentially most students to be at the developing stage (2) of each of the foundation skills, with some perhaps at the practicing stage (3). The results from the revised GEFSA rubric, presented below in Table 6, reveal higher levels of skill achievement compared to Spring 2016.

The attainment levels are in the target range for skills A, B and D (problem solving, leadership and language). Attainment levels for skills E and F remain below the target range, indicating areas for improvement in global awareness and information literacy. This suggests that the curriculum should be examined for a possible underlying gap so that an appropriate pedagogical intervention can be planned. Skill C, technological literacy, at an attainment level of 2, is at the low end of the target range. This could indicate that students are using technology to acquire information, but not necessarily then building upon it.

Since students in the Spring 2016 and Fall 2016 groups were all from semester 3, we believe the higher levels of attainment are a positive reflection of the interventions applied to previously identified constraints with the task prompts supplied to the students, and the refinements/improvements made to the rubric. These revisions facilitated a more consistent application of the rubric by the raters.

Table 6. Scores on the GEFSA for 1st semester (Spring 2017) and 3rd semester (Fall 2016) students.

| Skill (with ZULO counterpart in brackets) | 1st Semester | 3rd Semester |

| A. (Critical Thinking and Quantitative Reasoning) | 0.5 | 2.22 |

| B. (Leadership) | 0.6 | 2.17 |

| C. (Technological Literacy) | 0.8 | 2.00 |

| D. (Language) | 1.7 | 2.44 |

| E. (Global Awareness) | 0.73 | 1.56 |

| F. (Information Literacy) | 0.8 | 1.94 |

Spring 2017 Findings

The method was implemented with 1st semester students in Spring 2017. It was expected that there would be noticeable differences in attainment levels of 1st semester students compared to the 3rd semester students. We expected average ratings for foundation skills of 1st semester students to be around 1 – emerging. The activity was conducted twice over 14-day periods in two classes totaling 30 students using scenarios on Obesity and the Economy, and Cybersecurity. As before, the student transcripts for the second discussion were analyzed using the same version of the rubric as for Fall 2016. The results from our rubric are given in Table 6 and reveal, as expected, lower levels of skill achievement compared to 3rd semester students.
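The comparison drawn from Table 6 can be reproduced with a short script. The sketch below copies the scores from Table 6 and uses the 3rd semester target range of 2 to 3 stated in the text; the variable names are hypothetical. Because the two columns come from different cohorts, the difference computed here is a cross-sectional comparison rather than a measured gain for the same students.

```python
# Scores copied from Table 6: skill -> (1st semester, 3rd semester).
TABLE_6 = {
    "A": (0.5, 2.22),
    "B": (0.6, 2.17),
    "C": (0.8, 2.00),
    "D": (1.7, 2.44),
    "E": (0.73, 1.56),
    "F": (0.8, 1.94),
}

# Expected 3rd semester range stated in the text (around 2, up to 3).
TARGET_LOW, TARGET_HIGH = 2.0, 3.0

for skill, (first, third) in TABLE_6.items():
    difference = round(third - first, 2)
    in_target = TARGET_LOW <= third <= TARGET_HIGH
    print(f"Skill {skill}: difference {difference:+.2f}, in target range: {in_target}")

# Skills whose 3rd semester score falls short of the target range.
below_target = [s for s, (_, third) in TABLE_6.items() if third < TARGET_LOW]
print(below_target)
```

The skills flagged as below target are E and F, matching the areas identified for improvement in the Fall 2016 findings.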

Future Work

The method will be implemented in coming semesters both to assess and teach the skills and to continue establishing the validity and reliability of the GEFSA rubric for program assessment in the General Education program. Future work will form part of a newly funded research project to develop a University Foundation Skills Assessment (UFSA) framework by extending the core analytic rubric of the GEFSA, building upon the experience of the past years. As previously mentioned, the GEFSA is an adaptation of the CPSA, which is used for students in their majors in the College of Technological Innovation. The CPSA rubric has a continuum of six levels (0 – 5) to indicate student attainment of the professional skills within the context of IT majors.

The GEFSA rubric has a shorter continuum of four levels (0 – 3) to indicate student attainment of foundation skills within a General Education program context. The skills on the CPSA rubric are based on the skills required by ABET (the accreditation body for engineering and technology) and are somewhat similar to the GEFSA skills, which are aligned with the ZULOs. Our research will examine each level and will include adding two attainment levels – maturing and mastering – as in the CPSA. The maturing level is where students are generally expected to be at graduation. The mastering level is the expectation for postgraduate students. Additionally, the research aims to identify minimum foundation skill attainment levels for all students at relevant checkpoints during their baccalaureate program. Suggested levels are shown in Table 7 with comments.

Table 7. Baccalaureate skill level expectation points

| Measurement point in Baccalaureate | Skill level expectation | Comment |
|---|---|---|
| Year 1 – beginning of General Education (sem. 1) | 1.0- | Students mostly in the lower end of the emerging band, with some weaker students in the missing band. |
| Year 2 – end of General Education (sem. 3) | 2.0- | Students mostly in the lower end of the developing band, with some weaker students in the upper end of the emerging band, as they complete their General Education requirements. |
| Year 3 – semester 2 (sem. 6) | 2.5+ | Students mostly in the upper end of the developing band and some in practicing as they complete the 3rd semester in their major. |
| Year 4 – semester 2 (sem. 8) | 3.5+ | Students mostly in the upper end of the practicing band and some in maturing as they graduate from their major. |

The proposed attainment levels will provide additional data points as input for college-wide curricular development and pedagogical practices. The vision is that these levels can be realized across the institution as the proposed UFSA framework is migrated to the various colleges in the university.
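To make the relationship between numeric ratings and band names concrete, the sketch below maps a mean rating to a band on the proposed six-level (0 – 5) continuum. It is an illustration under stated assumptions rather than the authors' scoring procedure: the band order is inferred from the comments in Table 7 and the CPSA description, each band is assumed to span one whole rating point starting at its level number, the function name `band_for` is hypothetical, and the "+" and "-" suffixes on the Table 7 expectations are dropped.

```python
# Band names for rubric levels 0-5. The order is inferred from the Table 7
# comments (missing, emerging, developing, practicing) plus the two proposed
# levels (maturing, mastering); it is an assumption, not a published key.
BANDS = ["missing", "emerging", "developing", "practicing", "maturing", "mastering"]


def band_for(rating):
    """Map a mean rating to a band name, assuming band k covers [k, k + 1)."""
    if not 0 <= rating <= len(BANDS) - 1:
        raise ValueError(f"rating {rating} is outside the 0-5 continuum")
    # int() truncates, which equals the floor for non-negative ratings;
    # a rating of exactly 5 stays in the top band.
    return BANDS[min(int(rating), len(BANDS) - 1)]


# Expectation points from Table 7, with the +/- suffixes omitted.
CHECKPOINTS = {
    "Year 1 (sem. 1)": 1.0,
    "Year 2 (sem. 3)": 2.0,
    "Year 3 (sem. 6)": 2.5,
    "Year 4 (sem. 8)": 3.5,
}

for point, expected in CHECKPOINTS.items():
    print(f"{point}: expected rating {expected} falls in the {band_for(expected)} band")
```

Under this assumed mapping, the four expectation points fall in the emerging, developing, developing, and practicing bands respectively, which agrees with the comments in Table 7. Any other choice of band boundaries would need to be checked against the rubric descriptors.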

Conclusion

Enabling young people to develop solid foundation skills through focused educational interventions and assessments plays a significant role in increasing their societal impact and individual satisfaction. If we associate knowledge with economic progress, then building a knowledge society and a knowledge economy in the UAE, and the broader Arab region, is a necessity for prosperity and international competitiveness in the current age of globalization. With the establishment of a knowledge society and knowledge economy, it becomes essential to provide youth with the foundation skills and competences required for their active integration as knowledge workers in 21st century workplaces (UNDP, 2014; EY, 2015).

This paper outlined how the GEFSA can provide the opportunity for a concurrent direct assessment of student attainment of foundation skills in a General Education program. The results from the most recent GEFSA implementations have demonstrated that the method can measure the skills with confidence. The method will be further refined to inform recommended skill attainment levels at entry and exit points in the university’s General Education program, indicating the expected progression in skill attainment over time. This will enable General Education courses to begin to address shortcomings in foundation skills identified through application of the GEFSA. Further work is planned to develop an institutional foundation skill assessment framework by extending the GEFSA rubric. This would be used to identify and measure attainment of foundation skill levels for students at various points during their baccalaureate program.

Acknowledgement: This research work is funded by the Zayed University Research Incentive Fund – R15128.

References

Association of American Colleges & Universities (2013). It takes more than a major: Employer priorities for college learning and student success. Washington, DC: Association of American Colleges and Universities.

Danaher, M., Schoepp, K. & Ater Kranov, A. (2016). A new approach for assessing ABET’s professional skills in computing. World Transactions on Engineering and Technology Education, 14(4).

Ernst & Young (2015). How will the GCC close the skills gap? Retrieved May 22, 2017 at: http://www.ey.com/Publication/vwLUAssets/EY-gcc-education-report-how-will-the-gcc-close-the-skills-gap/$FILE/GCC%20Education%20report%20FINAL%20AU3093.pdf

Ernst & Young (2014). Perspectives on GCC youth employment. Retrieved May 22, 2017 at: http://www.jef.org.sa/files/EY%20GCC%20Youth%20Perspectives%20on%20Employment%20JEF%20Special%20Edition%202014.pdf

Johnson, R. L., Penny, A. J. & Gordon, B. (2009). Assessing performance: Designing, scoring, and validating performance tasks. New York: The Guilford Press.

Holmes, C., & Oakleaf, M. (2013). The official (and unofficial) rules for norming rubrics successfully. Journal of Academic Librarianship, 39(6), 599-602.

Organization for Economic Cooperation and Development. (2008). Overview of policies and programmes for adult language, literacy and numeracy (LLN) learners, in Teaching, Learning and Assessment for Adults: Improving Foundation Skills, OECD Publishing, Paris, France. Retrieved May 22, 2017 at: http://dx.doi.org/10.1787/172281885164

Rhodes, A., Danaher, M., Ater Kranov, A. & Isaacson, L. (2016). Measuring attainment of foundation skills in general education at a public university in the United Arab Emirates. World Transactions on Engineering and Technology Education, 14(4).

Schoepp, K., Danaher, M. & Ater Kranov, A. (2016). The computing professional skills assessment: An innovative method for assessing ABET’s student outcomes. Proceedings from the 2016 IEEE Educon, Abu Dhabi, United Arab Emirates.

Shuman, L. J., Besterfield-Sacre, M. & McGourty, J. (2005). The ABET “professional skills”: Can they be taught? Can they be assessed? Journal of Engineering Education, 94, pp. 41–55.

Stemler, S. E. (2004). A comparison of consensus, consistency, and measurement approaches to estimating interrater reliability. Practical Assessment, Research & Evaluation, 9(4).

UAE. (2010). United Arab Emirates Vision 2021. Retrieved May 22 at: http://www.vision2021.ae/en

UAE. (2014). National key performance indicators: UAE 2021 Vision. https://www.vision2021.ae/en/national-priority-areas/nkpi-export-pdf

UNESCO. (2012). Skills Gaps Throughout the World. Retrieved May 22 at: http://unesdoc.unesco.org/images/0021/002178/217874e.pdf

United Nations Development Programme. (2012). Arab knowledge report 2010/2011: Preparing future generations for the knowledge society. Retrieved May 22 at: http://www.undp.org/content/dam/rbas/report/ahdr/AKR2010-2011-Eng-Full-Report.pdf

United Nations Development Programme. (2015). Arab knowledge report 2014: Youth and localisation of knowledge. Retrieved May 22, 2017 at: http://www.arabstates.undp.org/content/rbas/en/home/library/huma_development/arab-knowledge-report-20140.html

University of Hawaii Manoa. (2013). Creating and using rubrics. Retrieved May 22, 2017 at: http://manoa.hawaii.edu/assessment/howto/rubrics.htm.

Appendix A: Complete Scenario – Clean Energy

Experts generally agree that the large amounts of carbon dioxide (CO2) and other greenhouse gasses which are produced when coal and oil are burned are a direct cause of global warming. Worryingly, CO2 levels are now increasing at record rates. According to the National Oceanic and Atmospheric Administration (NOAA), 2015 saw the biggest jump in CO2 levels in 56 years. It should be no surprise that 2015 was also the hottest year on record.

One of the major sources of CO2 around the globe is transport, accounting for 37% of all CO2 production in the US. Moves to reduce transport-related CO2 emissions generally involve electricity. Some projections estimate that approximately 1.8 and 1.2 million electric vehicles will be sold in China and the US respectively in the year 2020. However, electric cars are not a solution to our growing CO2 problem if enormous amounts of CO2 are produced when we generate electricity.

Some of the world’s smaller and less industrialized countries have made great progress towards the goal of completely clean energy production. In Uruguay, 94.5% of all electricity is produced cleanly using a combination of technologies. Hydropower plants produce approximately 40% of Uruguay’s power needs. The country also uses wind power, biomass and solar power generation. Wind and solar generators produce no CO2 or other greenhouse gasses. Biomass power generation, which involves the burning of plant material, does produce CO2 but is considered a clean source of energy because these plants have removed CO2 from the air during their lifetime.

In more industrialized countries, the move to clean electricity production may be more complicated. The US target to generate 20% of its electricity using renewable sources by 2030 is seen as ambitious by some. The UAE’s 2030 goal is for 30% clean electricity.

Clean energy solutions will vary in the MENA region. Morocco, for example, uses both hydropower and wind power. However, hydropower is not possible in the UAE for obvious reasons, and assessments of wind speeds in coastal regions show that wind power generation is not an option. Solar power, on the other hand, is well-suited to the region. The UAE already runs photovoltaic (PV) power generation plants, using PV panels which change the sun’s rays directly into electricity. Concentrated solar power (CSP) systems, using mirrors to heat water which then generates electricity, are also popular in the MENA zone. Masdar’s Shams 1 CSP power plant, producing 100MW of power, was the largest in the region until February 2016, when Morocco’s Noor 1 CSP plant opened. Noor 1 currently produces 160MW and there are plans to expand this capacity before 2018.

Another interesting approach to solar power in this region involves private PV generators. A Dubai-based company, Emirates Insolaire, has developed the idea to build PV glass panels into the outer walls of larger new buildings. The company is currently installing this technology in a revolutionary new building in Copenhagen. The building will generate half of its own electricity. Emirates Insolaire hope to bring this technology to new buildings in the UAE very shortly.

However, both PV and CSP have some negatives. The harsh and dusty local climate can shorten the life of PV panels and can also reduce productivity, as has been shown with the Shams 1 plant. Moreover, dependence on one power generation source can be unwise. For example, Bhutan, which relies on hydropower, generates more power than it needs for much of the year, but has to import electricity from neighbouring India during the dry season.

A final option may be oil itself. Environmentalists argue that we should “keep it in the ground”; that if we use all the available oil, the effect on CO2 emissions and on our climate would be disastrous. However, for some years now it has been possible to “capture” the CO2 which is produced in oil-fired power plants. This carbon is then buried deep underground. With further research, this option, known as carbon capture and storage (CCS), may be an attractive alternative for GCC countries. According to Ali Al-Naimi, the Saudi oil minister, CCS technology would allow oil producers to become a “force for good”. CCS also has obvious economic advantages, as it would allow oil producers to profit from their greatest natural resource.

Sources

Ash, K. (2015, July 9). President Obama announces new renewable energy targets, but we can and must do more. Greenpeace International. Retrieved from: http://www.greenpeace.org/international/en/news/Blogs/makingwaves/President-Obama-renewable-energy-climate-legacy/blog/53473/

Flamos, A. (2015). The Timing is Ripe for an EU-GCC “Clean Energy” Network. Energy Sources, Part B: Economics, Planning and Policy, 10:3, pp. 314-321.

Howard, E. & Carrington, (2015, March 15). Everything you wanted to ask about the Guardian’s climate change campaign. The Guardian. Retrieved from: http://www.theguardian.com/environment/2015/mar/16/everything-you-wanted-to-ask-about-the-guardians-climate-change-campaign

McAuley, A. (2016, January 20). UAE eyes new clean energy generation target by 2030. The National. Retrieved from: http://www.thenational.ae/business/energy/uae-eyes-new-clean-energy-generation-target-by-2030

Pashley, A. (2016, March 11). CO2 levels make largest annual leap in 56 years – NOAA. Climate Home. Retrieved from: http://www.climatechangenews.com/2016/03/10/co2-levels-make-largest-annual-leap-in-56-years-noaa/
