Issues and Challenges in Component-testing in Component-based Software Development?

Info: 9991 words (40 pages) Dissertation

Published: 16th Dec 2019

Tagged: Computer Science

Chapter 4 Result

This chapter presents the results of the systematic literature review conducted on the list of 51 papers. The chapter is divided into three main sections, each discussing in detail the results obtained for one of the three research questions.

4.1 Research Question 1 – What are the issues and challenges in Component-Testing in Component-Based Software Development?

The answer to this research question was retrieved mainly by reading papers that included the keywords issues, challenges and problems. Moreover, many of the papers that presented a particular component testing technique for component-based software development discussed why component testing is challenging before turning to their main study. To answer this question, it helps to first understand the different groups of components. A component may belong to one of two main groups, which are as follows-

- Commercial Components– These are components developed by other vendors. The developers who make these components are known as ‘Component Providers’ and the organizations that make use of their components are called ‘Component Consumers’.

- In-House Components– These are components made by the same organization that uses them, so the component provider and the component consumer are the same. This is done to reduce cost and speed up development by reusing certain components from one project to another.

Since the component-based software paradigm builds software from reusable components, the quality of the software depends on the quality of those components and on the effectiveness of their unit, integration and system tests. According to [insert-citation] there are six essential issues in the testing of software components. These are as follows-

- Test Suites for Software Components- Current commercial component makers do not provide their customers with any test suite or test information for the software components they sell, not even acceptance tests or quality reports. To check the quality of a component and understand its behaviour, such as how it works and what its input and output characteristics are, customers must spend considerable effort studying the provided component documents and then create a test suite and test driver to perform acceptance testing. In-house component makers do create test suites for the components they build, but these test suites use inconsistent formats and a variety of tools and repositories. This is because different teams in an organization handle different software projects, and each team has its own tools and specifications based on the project it is working on. The teams therefore cater to the needs of their own project rather than building a universally reusable component with proper support for component testing. This makes it complicated to reuse the test suites provided by these diverse engineering teams across multiple projects.

- Adequate Testing of Components- There are two primary issues related to adequate testing of components, which are as follows-

- Most components that are developed in-house do not have adequate test sets because they are designed around specific project requirements and functions. Reusing a component and its test sets in other projects without proper verification may cause unanticipated problems.

- Current component vendors do not provide any information about the test criteria and test metrics for their components. It therefore becomes very complicated for the customers of these components to understand test coverage and test quality.

- Poor testability of Reusable Components- [insert-citation] describes the testability of a software component as twofold-

- Observability- This indicates how easy it is to observe a program (or component) in terms of its operational behaviours and the outputs relating to its inputs.

- Controllability- This indicates how easy it is to control a program (or component) with respect to its inputs, operations, behaviours and outputs.

From a customer’s perspective the testability of software components is poor because there are no external tracking mechanisms or tracking interfaces in software components that let a client monitor and observe external behaviours. There is no support for controllable interfaces that facilitate executing unit tests, checking the test results and reporting errors. Finally, there are no built-in tests or controllable test suites for software components to support black-box testing at the unit level.

- Expensive cost of constructing component test drivers and stubs- The component-based software paradigm makes use of reusable software components for building software products. To support component testing, engineers have to write test drivers and test stubs. In a traditional software development paradigm engineers focused on one specific project and could therefore construct the test drivers and test stubs for that project based on its needs and specification. When reusability is required, as is the case with component-based software development, this becomes costly and ineffective, mainly due to the evolution of reusable components and their diverse functional customizations. In-house component makers usually take an ad-hoc approach to developing simple test drivers. Ad-hoc here means inconsistent test formats and test specifications that may work well with one project but not with others. In other words, the drivers are project or product specific and do not have well-defined interfaces between components and test suites.

- Issues of Component Integration- It is not easy to build integration test suites from the component test suites. The reasons are as follows-

- Test suites are constructed in a manner that leads to inconsistent test information formats. Moreover, they have diverse access mechanisms and use different data storage technologies. These differences hinder the reuse of test suites when integrating components.

- No proper test selection methods are available for building integration test suites based on component test suites.

- Ad-Hoc Component Testing process and Certification criteria- Most software workshops have some in-house quality control process for their software products. When shifting from the traditional software development paradigm to the component-based paradigm, there is a lack of confidence in applying the quality control process that was used in the traditional model to the component model. As a result, there is no standard component quality control process.

These six issues describe the main problems associated with component testing in the context of component-based software development. They highlight the fact that although component-based software development has its fair share of advantages, such as a shorter development cycle and faster time-to-market, creating proper reusable components that are well tested is a complicated task. [insert-citation] describes the challenges for the software developers and testers who work within the component-based software paradigm. These challenges are as follows-

- How to provide reusability support for Component Tests?

Component-based software development is the construction of software products from reusable software components, so the developers’ first challenge is to write component tests in a way that makes them reusable across a multitude of different projects. This is easier said than done, as most projects have different requirements and needs, which makes a universal testing plan complicated. Projects differ in their technologies, database schemas and design patterns, so it becomes difficult for engineers to come up with a reusable test plan.

- How to develop testable components?

A testable software component is not only executable and deployable but also testable with the support of standardized component test facilities. Unlike normal components, testable components have the following features-

- Testable components must be traceable. [insert-citation] defines traceable components as those constructed with a built-in tracking mechanism for monitoring the behaviour of the component in a systematic manner.

- Testable components need to have built-in interfaces to interact with the testing facilities. The interfaces need to include the following-

- A test set-up interface, which interacts with a component test suite that selects and configures black-box tests.

- A test execution interface that will interact with the component test driver or the execution tool.

- Some sort of test reporting functionality that will check and record test results.

- Testable components need to have a proper test architecture model and built-in test interfaces to ensure proper interactions with component test suites and the component test-bed.
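As a rough illustration of the features listed above, the following Python sketch shows a component that carries its own tracking mechanism together with set-up, execution and reporting interfaces. The `Counter` component, the `CheckResult` record and all method names are hypothetical and chosen only for this example; they are not taken from the cited work.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    """One entry of the built-in test report."""
    name: str
    passed: bool
    detail: str = ""


class Counter:
    """Hypothetical component: a counter that never exceeds `limit`."""

    def __init__(self, limit):
        self.limit = limit
        self.value = 0
        self.trace = []    # built-in tracking: one entry per operation
        self._checks = {}  # black-box checks registered through set-up
        self.report = []   # results recorded by the reporting facility

    # Functional interface used by ordinary clients.
    def increment(self):
        if self.value < self.limit:
            self.value += 1
        self.trace.append(f"increment -> {self.value}")
        return self.value

    # Test set-up interface: select and configure black-box tests.
    def register_check(self, name, check):
        self._checks[name] = check

    # Test execution interface: run every check on a fresh instance
    # and record the outcome in the report.
    def run_checks(self):
        self.report = []
        for name, check in self._checks.items():
            fresh = Counter(self.limit)
            try:
                self.report.append(CheckResult(name, bool(check(fresh))))
            except Exception as exc:  # a crashing check counts as a failure
                self.report.append(CheckResult(name, False, repr(exc)))
        return self.report


component = Counter(limit=2)
component.register_check(
    "stops at limit", lambda c: [c.increment() for _ in range(5)][-1] == 2
)
component.register_check("wrong expectation", lambda c: c.increment() == 5)
for result in component.run_checks():
    print(result.name, "passed" if result.passed else "failed")
```

The point of the sketch is that a client can observe behaviour through `trace` and drive tests through `register_check` and `run_checks` without access to the component's internals.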

- How to develop component test drivers and test stubs in a well-defined manner?

The traditional software development paradigm makes use of test drivers and test stubs that are based on the requirements of the design specification. The major problem with this approach is that these test drivers and test stubs are only useful for that specific project. In the component-based software paradigm, components have customization functions that allow engineers to modify them according to a set of requirements. This suggests that the traditional way of building component test drivers and test stubs will be ineffective, which poses the challenge of developing reusable test drivers and test stubs for a component.
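To make the idea of reusable drivers and stubs more concrete, here is a minimal Python sketch, assuming a table-driven driver and a stub configured with canned answers. The names `Stub`, `run_driver` and `PriceCalculator` are hypothetical and serve only as an illustration; the sketch is not a design taken from the reviewed papers.

```python
class Stub:
    """Configurable stand-in for a dependency that is not available.

    `canned` maps a method name to the value that method should return.
    """

    def __init__(self, canned):
        self.canned = dict(canned)
        self.calls = []  # record of (method name, arguments) for later checks

    def __getattr__(self, name):
        # Only reached for attributes that are not defined normally.
        if name not in self.canned:
            raise AttributeError(name)

        def method(*args):
            self.calls.append((name, args))
            return self.canned[name]

        return method


def run_driver(make_component, cases):
    """Generic driver: each case is (method name, arguments, expected value)."""
    results = []
    for method, args, expected in cases:
        component = make_component()  # fresh instance per test case
        actual = getattr(component, method)(*args)
        results.append((method, args, actual == expected))
    return results


class PriceCalculator:
    """Hypothetical component under test; it needs an external tax service."""

    def __init__(self, tax_service):
        self.tax_service = tax_service

    def total(self, net):
        return net + net * self.tax_service.rate_percent() // 100


tax_stub = Stub({"rate_percent": 20})
results = run_driver(
    lambda: PriceCalculator(tax_stub),
    [("total", (100,), 120), ("total", (50,), 60)],
)
print(results)
```

Because neither the driver nor the stub mentions `PriceCalculator` directly, the same two helpers could be reused for another component by supplying a different factory, case table and canned answers.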

- How to construct a generic and reusable component test-bed?

In software engineering, test-bedding is a method of testing a particular module, which could be a function, a class or even a library, in an isolated environment. The challenge here is to come up with a component test-bed technology that is applicable to components written in different programming languages and tech stacks.

According to [insert-citation] the challenges associated with component testing in the context of component-based software development arise from two different perspectives: that of the component consumer and that of the component provider. The challenges are summarised as follows-

- Code Inaccessibility- As is evident from the earlier issues and challenges, component providers do not provide the source code of the components that they sell. This makes white-box testing really difficult, because white-box techniques such as data-flow based criteria and mutation testing rely on the code and its implementation details to determine the test requirements.

- Different Programming Languages- In the context of component-based software, even if the source code is available, the individual components and the component-based system may have been developed using different languages. For example, one project might use a component written in Java while another project uses a component written in Python. If a third project needs to use the components from both projects, developers will need different tools to analyse and test them because they were developed using two different programming languages with different technology stacks, and for a large-scale project this can become complicated.

- Understanding Component Functionality- Since component vendors do not provide source code for the components that they sell, a component may have a diverse range of functionality that has not been fully disclosed in the documentation. Hence there is a need to identify the functionality that is actually needed by the user. Without this identification a testing tool will only provide useless reports.

- Lack of Information on Externally Provided Components- Component providers have to provide information about the components that they sell. This is difficult because different projects have different needs and there is no standardized way to describe a component, so some information may be missing, which can be a real problem for the component consumer.

Hence the challenges associated with component testing in component-based software development can be summarised in the following table.

| Area in component testing | Challenges |

| Component Testability | |

| Component Test Suite | |

| Test Stubs and Drivers | |

| Certification and Quality Control | |

| Component Integration | |

| Test Environment | |

| Code and Programming Language | |

| Component Information | |

4.2 Research Question 2 – What are the testing strategies employed in component-based software development?

Component-based software development has gained a lot of attention from researchers around the world and has been used in organizations with different needs and requirements to convert existing monolithic projects into something more manageable. The component-based software paradigm provides significant benefits for developers. First, it reduces the cost of a project, as using pre-built components is economically more viable than starting from scratch. Second, the time-to-market is shorter. Software has penetrated every industry and the demand for more and better software is ever increasing. To cope with this demand and to deliver high-quality products to customers without delays, component-based software development is an enticing option. Testing, however, is one area in component-based software development where a lot of active research is still required. Many different strategies have been proposed over the years, and the goal of this research question is to summarize these existing strategies. As the strategies are diverse, it was more logical to group them into categories. For example, some strategies are based on a test framework or a test model, so we placed these strategies under Frameworks and Test Models and then provided a description of each. The strategies used for component testing in component-based software development can be categorised into the following:

- Test Adequacy Criteria: According to [insert-citation], “A test adequacy criterion is a systematic criterion that is used to determine whether a test suite provides an adequate amount of testing for a component under test”. In other words, a test adequacy criterion is a systematic way to determine whether the test cases for a given component under test are sufficient to test it thoroughly. This is useful because, if a test suite fails to satisfy some criterion, the failure may provide insight into how to improve the test suite. We can consider an adequacy criterion as a set of test obligations. A given test suite satisfies an adequacy criterion if-

- All the tests pass

- Each test obligation in the criterion is satisfied by at least one of the test cases in the test suite.
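The two conditions above can be read as a simple check over a set of obligations. The following Python sketch is only an illustration under that reading: the function name `check_adequacy`, the statement labels and the representation of a test case as a `(passed, covered)` pair are assumptions made for this example.

```python
def check_adequacy(obligations, suite):
    """Return (adequate, missing) for a suite of (passed, covered) pairs.

    `obligations` is the set of test obligations of the criterion, and
    `covered` is the set of obligations that one test case satisfies.
    """
    all_passed = all(passed for passed, _ in suite)
    covered = set()
    for _, obligations_met in suite:
        covered |= set(obligations_met)
    missing = set(obligations) - covered
    return all_passed and not missing, missing


# Statement coverage: every executable statement is one obligation.
statements = {"s1", "s2", "s3"}
suite = [(True, {"s1", "s2"}), (True, {"s2"})]

adequate, missing = check_adequacy(statements, suite)
print(adequate, missing)   # statement s3 is never executed

suite.append((True, {"s3"}))
adequate_after, missing_after = check_adequacy(statements, suite)
print(adequate_after, missing_after)
```

The `missing` set is what makes an unsatisfied criterion useful in practice, since it points at the obligations that a new test case should target.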

To give an example, the statement coverage adequacy criterion is satisfied by a test suite S for a program P if each executable statement in P is executed by at least one of the test cases in S and the outcome of each test execution is a ‘pass’. In the list of 51 papers used in this study, four primary studies delve into the topic of test adequacy, so a category for test adequacy was warranted. These papers mainly propose strategies for achieving better test adequacy and are presented in the following table-

| Name of Paper | Author/Authors | Published in |

| Adequate Testing of Component Based Software | David S. Rosenblum | Department of Information and Computer Science, University of California, Irvine, Irvine, CA, Technical Report |

| Testing coverage analysis for software component validation | J. Gao, R. Espinoza and Jingsha He | 29th Annual International Computer Software and Applications Conference (COMPSAC’05) |

| Techniques for Testing Component Based Software | Ye Wu, Dai Pan and Mei-Hwa Chen | Proceedings Seventh IEEE International Conference on Engineering of Complex Computer Systems |

| A proposal for new software testing technique for component based software system | Bayu Hendradjaya | International Journal on Electrical Engineering & Informatics |

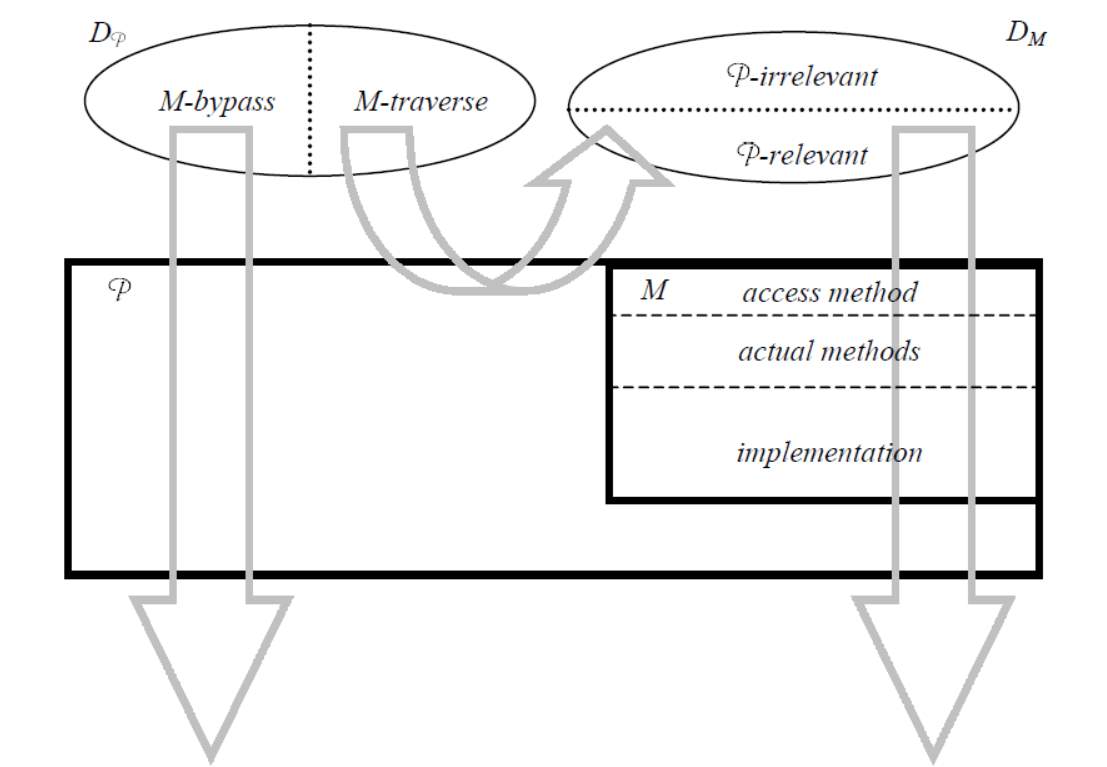

[insert-citation] describes a formal definition of concept ‘C-adequate-for-P’ for adequate unit testing of a component and the concept ‘C-adequate-on-M’ for adequate integration testing of a component-based system. The paper makes use of these concepts to discuss practical considerations in adequate testing of component-based software. The model defined in this paper with respect to program say P that contains a component M. The model can be generalized to be used for multiple components as well. This model can be applied in two different ways for the problem of testing in component-based software. These two different ways basically correspond to the unit testing and integration testing of components. The model defined in the paper is illustrated in the following figure:

Figure 1- Formal Model of Component Based Software [insert-citation]

The notion of a component used in this study is an object-oriented one. Component M encapsulates some state and provides a well-defined interface that governs access to this state by the other components in the system. M declares an ‘access method’ in its interface, which is responsible for invoking the actual methods. Each parameter of the actual methods of M has a corresponding parameter of the same mode and type in the access method. In addition, the access method has one more parameter that identifies the actual method to be invoked. Hence the input domain of M is the input domain of M’s access method. [figure-caption] represents the model used in the study. DP is the input domain of P and DM is the input domain of M’s access method. The figure shows certain subsets of these input domains, which are defined as-

M-Traverse(DP) = { d ∈ DP | execution of P on input d traverses M }

M-Bypass(DP) = DP – M-Traverse(DP)

P-relevant(DM) = { d ∈ DM | there exists d′ ∈ DP such that execution of P on input d′ traverses M with input d }

P-irrelevant(DM) = DM – P-relevant(DM)

“Execution of P traverses M” means that the execution of program P includes at least one invocation of M’s access method.
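The four subsets can be computed mechanically for a toy example. The sketch below is an illustration only: the program, the access method and both input domains are invented for this example, and P-relevant is read as the set of inputs to M that some execution of P actually passes to the access method.

```python
calls = []  # inputs with which the access method was invoked


def access_method(selector, value):
    """Single entry point of hypothetical component M."""
    calls.append((selector, value))
    return value * 2 if selector == "double" else -value


def program(x):
    """Hypothetical program P: it only uses M for positive inputs."""
    if x > 0:
        return access_method("double", x)
    return 0


DP = set(range(-2, 3))  # input domain of P: {-2, -1, 0, 1, 2}
DM = {(s, v) for s in ("double", "negate") for v in range(4)}


def partition(program_inputs, component_inputs):
    m_traverse, p_relevant = set(), set()
    for d in program_inputs:
        calls.clear()
        program(d)
        if calls:  # P invoked M's access method at least once
            m_traverse.add(d)
            p_relevant.update(c for c in calls if c in component_inputs)
    m_bypass = program_inputs - m_traverse
    p_irrelevant = component_inputs - p_relevant
    return m_traverse, m_bypass, p_relevant, p_irrelevant


m_traverse, m_bypass, p_relevant, p_irrelevant = partition(DP, DM)
print("M-Traverse:", sorted(m_traverse))
print("M-Bypass:", sorted(m_bypass))
print("P-relevant:", sorted(p_relevant))
print("P-irrelevant has", len(p_irrelevant), "elements")
```

In this toy case most of DM is P-irrelevant, which illustrates why testing M in isolation and testing M as used by P can call for different test sets.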

According to the study, a test set TP is said to be C-adequate if TP satisfies the test requirements of a test adequacy criterion C on program P. Similarly, a test set TM is C-adequate if TM satisfies the test requirements of criterion C on component M.

To conclude, the unit-testing viewpoint requires the developer of M to test M against criterion C with a test set that is C-adequate-for-P. The integration-testing viewpoint requires the developer to test with a test set that is C-adequate-on-M.

[insert-citation] proposes adequate test models and test coverage criteria for component validation. The paper argues that validating component quality requires adequate test models and test coverage criteria. The study takes a dynamic approach to analysing component test coverage based on the test model and test coverage criteria that it proposes. It proposes two test models, CFAG (Component Function Access Graph) and D-CFAG (Dynamic Function Access Graph), which represent a component’s API-based function access patterns in static and dynamic views respectively. According to the study a test model serves three purposes:

- Help engineers define various test criteria.

- Facilitate automatic test generation.

- Aid test coverage analysis.

The study proposes three types of test coverage criteria for a component C with test models (CFAG and D-CFAG). They are:

Node coverage criteria: Component C has achieved node test coverage for node F if and only if the adequate test set for F has been used during the component’s test process.

Link coverage criteria: Two components have achieved link coverage if they satisfy the test sets between the two components.

Path coverage criteria: Components have achieved path coverage if they satisfy the test sets in their data-flow.
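A simplified way to picture node and link coverage is to compare recorded call sequences against a function access graph. The Python sketch below rests on assumptions that go beyond the criteria as stated above: a node counts as covered once its API function appears in any recorded trace, a link counts as covered once its two functions are called consecutively, and the graph and traces are invented for the example.

```python
# Hypothetical function access graph for a file-like component.
nodes = {"open", "read", "write", "close"}
links = {
    ("open", "read"),
    ("open", "write"),
    ("read", "read"),
    ("read", "close"),
    ("write", "close"),
}


def uncovered(traces):
    """Return the nodes and links that no recorded trace exercised."""
    seen_nodes = {function for trace in traces for function in trace}
    seen_links = {
        pair for trace in traces for pair in zip(trace, trace[1:])
    }
    return nodes - seen_nodes, links - seen_links


# One recorded test run: open, then read, then close.
traces = [["open", "read", "close"]]
missing_nodes, missing_links = uncovered(traces)
print("uncovered nodes:", sorted(missing_nodes))
print("uncovered links:", sorted(missing_links))
```

Reporting what is still uncovered, rather than a single percentage, shows directly which access patterns the next test cases would need to exercise.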

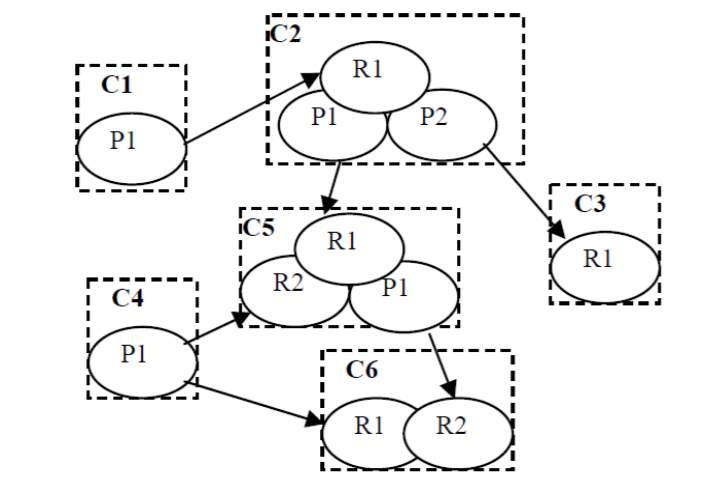

[insert-citation] uses a component-interaction graph where interactions and dependence relationships among the components are illustrated. By making use of the CIG, the paper proposes a family of test-adequacy criteria which allows the optimization of balancing the budget, schedule and quality requirements which are some of the important requirements in software development. The component interaction graph models the interaction scenarios of the different components. Direct interactions are made through a single event whereas indirect interactions are made through multiple events. An example of a component-interaction graph has been given by [insert-citation]

Figure 2Component Interaction Graph [insert-citation]

An edge from C1.P1 to C2.R1, for example, means that the required service R1 of C2 is satisfied by the provided service P1 of C1.

[insert-citation] proposes a technique for determining test adequacy criteria based on a set of complexity and criticality metrics of a component-based system. According to the study, these test adequacy criteria help reduce the number of test cases. The metrics require the component-based system to be represented as a graph in which each node represents a component and each link represents the connectivity between components. Interactions occur mainly through interfaces and incoming events. The testing technique starts from the software functional specification, which can be obtained from a design document. From the functional specification a test specification is made, using test adequacy criteria based on the complexity and criticality metrics. The test specification is then used to generate test scenarios, which are executed and finally analysed.

- Frameworks and Test Models: Of the 51 papers extracted for this study, 9 propose a testing strategy based on a test framework or test model. The studies are shown in the following table-

| Name of Paper | Author/Authors | Published in |

| Model Based Software Component Testing: A UML Based Approach | Weiqun Zheng, Gary Bundell | 6th IEEE/ACIS International Conference on Computer and Information Science (ICIS 2007) |

| A framework for practical, automated black-box testing of component-based software | Stephen H. Edwards | Software Testing, Verification and Reliability |

| Framework for Testing Object-Oriented Component | Ugo Buy, Carlo Ghezzi, Alessandro Orso, Mauro Pezzè, Matteo Valsasna | Proceedings of the 1st International Workshop on Testing Distributed Component-Based Systems |

| Framework for third party testing of component software | Yu-Seung Ma, Seung-Uk Oh, Doo-Hwan Bae | Proceedings Eighth Asia-Pacific Software Engineering Conference |

| Test-Driven Component Integration with UML 2.0 Testing and Monitoring Profile | Donglin Liang, Kai Xu | Seventh International Conference on Quality Software (QSIC 2007) |

| A Framework for testing distributed software components | Yizheng Yao, Yingxu Wang | Canadian Conference on Electrical and Computer Engineering, 2005. |

| Proactive Model Based Testing and evaluation for component-based systems | A. Surendar, M. Kavitha, V. Saravanakumar | International Journal of Engineering and Technology (2018) |

| A formal approach to specifying and testing the interoperation between components | Il-Hyung Cho, John D. McGregor | Proceedings of the 38th annual on Southeast regional conference |

| Towards Self-Testing of Component-Based Software | F. Belli, C.J. Budnik | 29th Annual International Computer Software and Applications Conference (COMPSAC’05) |

[insert-citation] follows a different paradigm, developing constructive and effective component test cases with UML (Unified Modelling Language) to test software components. The paper notes that some research has already been conducted on the use of UML for software component testing, but most of the resulting techniques provide only general test requirements or criteria and lack detailed, operational descriptions of how to apply them to produce actual test cases. The main research goal of the study was to bridge the gap between software component development and software component testing with UML modelling. The paper focuses mainly on component integration testing, which bridges component unit testing and component system testing. The process that the study follows is parallel in nature: it constructs the UML models along with the test models. For example, when it develops the use case model for the system, it checks that model by means of a use case test model, and both models are generated in parallel. The process is iterative: if problems arise in either testing or design, it goes back and repeats the model and test generation steps.

[insert-citation] outlines a general strategy for automated black-box testing of software components, which includes the automatic generation of test drivers, the automatic generation of black-box test data and the automatic or semi-automatic generation of component wrappers that are used as test oracles. The cornerstone of the automated framework presented in this paper is a micro-architecture for providing built-in test support in software components. The paper proposes that each software component provider provide a hook interface through which built-in testing capabilities can be attached. The built-in testing code remains transparent to client and component code and can be inserted and removed without affecting the client code. Both the internal and external assertions about a component’s behaviour can be checked.
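The idea of a wrapper that acts as a test oracle can be sketched in a few lines of Python. This is an illustration of the general concept only, not the micro-architecture of the cited paper: the `Stack` component, the `CheckedStack` wrapper, the deliberately faulty `LossyStack` and the `ContractViolation` exception are all hypothetical.

```python
class ContractViolation(AssertionError):
    """Raised by the wrapper when observed behaviour breaks the contract."""


class Stack:
    """Hypothetical component under test."""

    def __init__(self):
        self._items = []

    def push(self, item):
        self._items.append(item)

    def pop(self):
        return self._items.pop()

    def size(self):
        return len(self._items)


class CheckedStack:
    """Wrapper with the same interface that checks each call's effect."""

    def __init__(self, inner):
        self._inner = inner

    def push(self, item):
        before = self._inner.size()
        self._inner.push(item)
        if self._inner.size() != before + 1:
            raise ContractViolation("push must grow the stack by one")

    def pop(self):
        before = self._inner.size()
        if before == 0:
            raise ContractViolation("pop requires a non-empty stack")
        item = self._inner.pop()
        if self._inner.size() != before - 1:
            raise ContractViolation("pop must shrink the stack by one")
        return item

    def size(self):
        return self._inner.size()


class LossyStack(Stack):
    """Deliberately faulty variant used to show the oracle reacting."""

    def push(self, item):
        pass  # bug: the item is silently dropped


good = CheckedStack(Stack())
good.push("a")
print(good.pop())

try:
    CheckedStack(LossyStack()).push("a")
except ContractViolation as violation:
    print("oracle reported:", violation)
```

Client code calls `CheckedStack` exactly as it would call `Stack`, so the checks can be added or removed by changing only the line that constructs the object.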

[insert-citation] proposes a framework based on two phases of analysis. The first phase considers each component in isolation and produces a set of method sequences for each component under test. The second phase considers a given set of interacting components by combining the method sequences of each of the interacting components. In the first phase, data-flow analysis is applied to the methods contained in the component under test. Next, symbolic execution is applied to each of these methods so that a formal specification can be derived. Finally, automated deduction is applied to derive series of method invocations from the data produced in the previous steps. The second phase integrates components incrementally: it first identifies the order of integration that minimizes the scaffolding needed by analysing the dependencies among the components, and then performs pair-wise integration of the components based on that order.
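The ordering step of the second phase can be illustrated with a topological sort. The sketch below assumes an acyclic dependency graph, in which case integrating each component after everything it depends on requires no stubs at all. The component names and the dependency map are hypothetical, and the sketch covers only the ordering and pairing, not the data-flow analysis or symbolic execution of the first phase.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical dependency map: component -> components it depends on.
depends_on = {
    "ui": {"orders", "auth"},
    "orders": {"storage"},
    "auth": {"storage"},
    "storage": set(),
}


def integration_order(dependencies):
    """Order components so that each one follows all of its dependencies.

    TopologicalSorter raises graphlib.CycleError if the graph has a cycle.
    """
    return list(TopologicalSorter(dependencies).static_order())


def pairwise_steps(order, dependencies):
    """List the (dependency, component) pairs to integrate, in order."""
    steps = []
    for component in order:
        for dependency in sorted(dependencies[component]):
            steps.append((dependency, component))
    return steps


order = integration_order(depends_on)
steps = pairwise_steps(order, depends_on)
print(order)
for dependency, component in steps:
    print(f"integrate {component} with {dependency}")
```

When the dependency graph contains cycles, no such stub-free order exists and some scaffolding becomes unavoidable, which is where minimizing it turns into an optimization problem.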

[insert-citation] identifies the metadata, that is, the information about a component itself, that a component developer should provide to the third-party tester, and defines the process for third-party testing on the basis of this metadata. The approach addresses the constraint of evaluating a large number of components within a short period of time in a cost-effective way. The metadata falls into three categories: a) information to deploy the component, b) information to test the component and c) information to audit the test suite. With the aid of this metadata, testers can perform functional testing of components without needing the implementation details of the component and without generating test cases from scratch.

[insert-citation] proposes applying a test-driven approach to component integration in order to improve the reliability of the glue code that is written to integrate software components in the component-based software paradigm. As with test-driven development, the core idea is to write test cases first and then design the product so that it passes all of them. This is done to catch bugs and errors during the earlier stages of software production.

[insert-citation] makes use of the UML 2.0 testing profile. The UML 2.0 testing profile extends the notations of UML 2.0 for specifying testing artefacts such as the test behaviours, test architecture and test data that are required to test the components. These artefacts can then be converted into test programs.

[insert-citation] provides a framework for distributed testing of software components which is based on the CORBA architecture and Java technology. The paper provides an environment that allows client-side software components to define test cases for a black-box component that has been published on the server side. The framework is incremental in nature and hence cost-effective. It allows developers to build their own tests to fit the context of the component at all stages of its life-cycle.

The primary goal of [insert-citation] is to improve testing methods by proposing an optimization approach based on simulated annealing. The paper optimizes the integration order, which is the order in which the components are integrated. Deriving an optimal integration order is an NP-hard problem, hence the paper takes a heuristic approach. Simulated annealing is widely applied to optimization problems because of its fast convergence and ease of implementation for real-world problems. It searches for the global minimum of a cost function by occasionally accepting worse solutions so that the search can escape local minima.
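A minimal sketch of simulated annealing applied to an integration order is given below. It is not the algorithm of the cited paper: the cost function (the number of stubs an order requires), the swap move, the cooling schedule and all parameter values are assumptions chosen to keep the example short, and the dependency map with a deliberate cycle is hypothetical.

```python
import math
import random


def stub_count(order, deps):
    """Cost: dependencies that are not yet integrated when they are needed."""
    integrated, stubs = set(), 0
    for component in order:
        stubs += sum(1 for d in deps.get(component, ()) if d not in integrated)
        integrated.add(component)
    return stubs


def anneal_order(components, deps, steps=2000, start_temp=2.0,
                 cooling=0.995, seed=0):
    rng = random.Random(seed)  # fixed seed keeps the run repeatable
    current = list(components)
    current_cost = stub_count(current, deps)
    best, best_cost = current[:], current_cost
    temp = start_temp
    for _ in range(steps):
        # Neighbour: swap two positions in the current order.
        i, j = rng.sample(range(len(current)), 2)
        candidate = current[:]
        candidate[i], candidate[j] = candidate[j], candidate[i]
        cost = stub_count(candidate, deps)
        delta = cost - current_cost
        # Always accept improvements; accept worse orders with a
        # probability that shrinks as the temperature drops.
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            current, current_cost = candidate, cost
            if cost < best_cost:
                best, best_cost = candidate[:], cost
        temp = max(temp * cooling, 1e-6)
    return best, best_cost


# Hypothetical system with a dependency cycle A -> B -> C -> A.
components = ["A", "B", "C", "D"]
deps = {"A": {"B"}, "B": {"C"}, "C": {"A"}, "D": {"A"}}

best_order, best_cost = anneal_order(components, deps)
print(best_order, "needs", best_cost, "stub(s)")
```

Because the best order seen so far is tracked separately from the current one, the returned result is never worse than the starting order, even though the search itself is allowed to move uphill.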

[insert-citation] enhances the interoperable component model by adding formalism and providing a testing framework based on the formal specification of a component. Each component has an interface that describes the component’s behaviour and type, and the interactions of the component are achieved through message protocols. The study strives for plug-and-play components, which requires proper techniques for understanding the functionality of components and how they interoperate.

[insert-citation] introduces a framework that automates user-oriented component testing. It is a black-box testing technique that utilizes the common features of commercial capture/replay test tools to help reduce test costs. The paper reduces the cost of component testing by identifying and analysing the manual activities in the test process so that they can be automated. Its aim is to automate time-consuming and error-prone manual testing activities, such as writing adequate, user-oriented test scripts based on special test cases. It does so by first identifying the objects of the graphical user interface of the component under test and creating a behavioural model in an intermediate format from which a component model can be generated. Based on the component model, test cases can be generated and then converted into test scripts in the system’s programming language. Finally, these scripts can be executed and analysed.

- Automated component testing: Some studies focus primarily on automating certain aspects of component testing, whether the generation of test scripts or the generation of results by an algorithm. Automation is the key aspect of these studies. Of the 51 papers in the systematic literature review, the following belong to this category:

| Name of Paper | Author/Authors | Published in |
| --- | --- | --- |
| Automatic generation algorithm of expected results for testing of component-based software system | Jeong Seok Kang, Hong Seong Park | Information and Software Technology (2015) |
| Automated unit and integration testing for component-based software systems | F. Saglietti, F. Pinte | Proceedings of the International Workshop on Security and Dependability for Resource Constrained Embedded Systems |
| Results from introducing component-level test automation and Test-Driven Development | Lars-Ola Damm, Lars Lundberg | Journal of Systems and Software (2006) |
| Deployed software component testing using dynamic validation agents | John Grundy, Guoliang Ding, John Hosking | Journal of Systems and Software (2005) |

[insert-citation] uses an algorithm that analyses the input/output relationships of a component-based software system to identify the inputs that influence each output. The algorithm then uses the test cases of the atomic components for each input and automatically generates the expected results. The aim is to generate the expected results as efficiently as possible, because they must be compared with the actual results obtained from testing the system. Generating expected results manually is time consuming and error prone, and the study reduces this effort through automation. The approach has two steps. The first step generates a complete set and a reduced set of input test data, where the reduced set is a subset of the complete set: the study first generates the complete set and then automatically builds an I/O relationship directed graph to derive the reduced set. The second step generates the expected results for the reduced set using the test cases of the atomic components in the system.
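A minimal sketch of the reduction step follows. It assumes the I/O relationship graph is a plain adjacency mapping and that the reduced set contains only combinations of inputs that can reach the output under test. The function names and the graph encoding are hypothetical and are not taken from the cited paper.

```python
from itertools import product


def influencing_inputs(graph, output):
    """Return the system inputs from which `output` is reachable."""
    reverse = {}
    for source, targets in graph.items():
        for target in targets:
            reverse.setdefault(target, set()).add(source)
    seen, stack = set(), [output]
    while stack:
        node = stack.pop()
        for pred in reverse.get(node, ()):
            if pred not in seen:
                seen.add(pred)
                stack.append(pred)
    # A system input is assumed to be a node without incoming edges.
    return {node for node in seen if node not in reverse}


def reduced_input_set(graph, output, domains):
    """Combine values only for the inputs that influence `output`."""
    relevant = sorted(influencing_inputs(graph, output))
    value_lists = [domains[name] for name in relevant]
    return [dict(zip(relevant, values)) for values in product(*value_lists)]
```

For a system with three binary inputs in which only two of them feed the component that produces `out1`, the complete set has eight combinations while the reduced set for `out1` has four.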

[insert-citation] uses automated techniques to support the verification and validation of component-based software systems. It does so by optimizing test case generation for both unit and integration testing. The unit testing approach uses static analysis of the source code to determine the entities that potentially need to be covered. Based on the static analysis, a dynamic analysis is performed to automate the execution of each generated test case. For the integration coverage criteria, state-based transition systems are used, with each component represented by a set of states. Component interactions are represented by pairs of transitions (t, t’) connected by a causal relation, such that traversing t in the first component results in traversing t’ in the other component. On this basis, a tool was developed that automatically generates optimized integration test suites.
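As a rough illustration of coverage over coupled transition pairs, the sketch below measures how many pairs (t, t’) a set of execution traces exercises. It assumes a pair counts as covered when t’ immediately follows t in a trace. That assumption, and the function name `coupling_coverage`, are illustrative and do not come from the cited tool.

```python
def coupling_coverage(coupled_pairs, traces):
    """Return the covered fraction of coupled pairs and the uncovered pairs."""
    pairs = set(coupled_pairs)
    covered = set()
    for trace in traces:
        # Look at each transition together with its direct successor.
        for first, second in zip(trace, trace[1:]):
            if (first, second) in pairs:
                covered.add((first, second))
    ratio = len(covered) / len(pairs) if pairs else 1.0
    return ratio, pairs - covered
```

The uncovered pairs returned by the function indicate which interactions still need an integration test.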

[insert-citation] presents the results of implementing early fault detection in industry, i.e. automated component testing and test-driven development. The paper provides case studies comprising four projects. It explains the concept of early fault detection and how it was applied in the first two projects, which were compared against their respective predecessors. The paper argues that applying fault-injection criteria in the early stages of development helps to discover faults early, which is beneficial in the long run.

[insert-citation] describes validation agents that can be used to automate the testing of software components. These agents use component aspects, which describe the functional and non-functional cross-cutting concerns that affect software components. The agents query the component aspect information and then construct and run automated tests on the components. In essence, a validation agent is a program that queries the XML-encoded aspects of a component to formulate and run tests and to check that the results meet the component's requirements.
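The following sketch shows the general shape of such an agent. The XML vocabulary (`aspect`, `kind`, `operation`, `input`, `expected`, `maxMillis`) and the class `ValidationAgent` are invented for illustration. The cited paper's actual aspect encoding is not reproduced here.

```python
import time
import xml.etree.ElementTree as ET

# Hypothetical aspect description for a component called "Cache".
ASPECTS_XML = """
<component name="Cache">
  <aspect kind="functional" operation="lookup" input="k1" expected="v1"/>
  <aspect kind="performance" operation="lookup" input="k1" maxMillis="1000"/>
</component>
"""


class ValidationAgent:
    """Reads aspect descriptions and turns each one into an executable check."""

    def __init__(self, aspects_xml):
        self.root = ET.fromstring(aspects_xml.strip())

    def run(self, component):
        results = []
        for aspect in self.root.findall("aspect"):
            operation = getattr(component, aspect.get("operation"))
            kind = aspect.get("kind")
            if kind == "functional":
                # Functional aspect: compare the result with the expected value.
                passed = operation(aspect.get("input")) == aspect.get("expected")
            elif kind == "performance":
                # Non-functional aspect: check the elapsed time against a bound.
                start = time.perf_counter()
                operation(aspect.get("input"))
                elapsed_ms = (time.perf_counter() - start) * 1000
                passed = elapsed_ms <= float(aspect.get("maxMillis"))
            else:
                passed = False  # Unknown aspect kinds are reported as failures.
            results.append((kind, aspect.get("operation"), passed))
        return results
```

A deployed component only needs to expose the operations named in its aspect description. The agent derives the checks from the XML rather than from hand-written test code.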

- Regression Testing: Regression testing ensures that functionality that worked in the previous version of a program still works in the newer version. Component-based software development builds software products from components that are either procured from a component vendor or made in-house. When a software system has to change to meet user requirements, regression testing is needed to ensure that the components that worked previously still work after the changes have been made. The studies that address this type of testing are given in the following table:

| Name of Paper | Author/Authors | Published in |
| --- | --- | --- |
| Using component metacontent to support the regression testing of component-based software | A. Orso, M.J. Harrold, D. Rosenblum | Proceedings IEEE International Conference on Software Maintenance. ICSM 2001 |
| Regression testing for component-based software via built-in test design | Chengying Mao, Yangsheng Lu, Jinlong Zhang | SAC ’07 Proceedings of the 2007 ACM symposium on Applied computing |
| A systematic state-based approach to regression testing of component software | Chuanqi Tao, Bixin Li, Jerry Gao | Journal of Software Volume 8, No-3 (2013) |

[insert-citation] provides two metacontent techniques that address the problem of regression test selection for component-based software. One is a code-based approach and the other is a specification-based approach. The code-based approach selects test cases according to a coverage goal for certain entities in the code. These entities include statements, edges, methods or classes, and paths. Coverage serves as an adequacy criterion for a test suite: the higher the coverage, the more adequate the test suite. The code-based approach represents the program as a control-flow graph and records the coverage achieved by the original test suite. When the program is modified, the control-flow graph is generated again, and the algorithm compares the two representations to determine which tests need to be executed again.

The specification-based technique creates test cases from a functional description of the system and has four phases. In the first phase, the testing team analyses the specification to identify the functional units of the system, including parameters such as the inputs to the units and the environment factors. In the second phase, the parameters and environment entities are partitioned into choices. The third phase finds constraints among the choices based on their mutual interactions. In the fourth phase, the testing team develops test frames from the cross product of the choices.
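Both ideas can be sketched in a few lines. The sketch simplifies the code-based technique to a set intersection over recorded coverage, without a control-flow graph comparison, and builds test frames as a filtered cross product. The function names and data layout are assumptions for illustration, not the cited paper's algorithms.

```python
from itertools import product


def select_regression_tests(coverage, changed_entities):
    """Select the tests whose recorded coverage touches a changed entity."""
    changed = set(changed_entities)
    return sorted(
        test for test, covered in coverage.items() if changed & set(covered)
    )


def build_test_frames(choices, constraints=()):
    """Build test frames as the cross product of choices, minus invalid ones."""
    names = list(choices)
    frames = (dict(zip(names, combo)) for combo in product(*choices.values()))
    # Each constraint is a predicate that returns False for an invalid frame.
    return [frame for frame in frames if all(ok(frame) for ok in constraints)]
```

Given coverage data such as `{"t1": {"m1", "m2"}, "t2": {"m3"}}` and a change to `m2`, only `t1` is selected for re-execution. Tests that never reach the modified code are skipped.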

[insert-citation] provides an improved regression testing method for component-based systems based on built-in test design. The approach requires the involvement of both component developers and component users. The component developers analyse the methods of a component and create test interfaces for them. With the help of these test interfaces, the component users can select a subset of the test cases for regression testing.

[insert-citation] proposes a systematic regression testing technique for component-based software that spans the component level to the system level. It constructs several retest models to support regression testing. The method first identifies the changes made to the components and the system based on these models. It then performs a change impact analysis that details the major changes in the given component. Finally, it refreshes the regression test suite using a state-based testing practice.

- Built-in Testing: Built-in tests are code added to an application that checks the application at runtime. Several papers discuss built-in testing for software components. These are as follows:

| Name of Paper | Author/Authors | Published in |
| --- | --- | --- |
| COTS component testing through built-in test | Frank Barbier | Testing Commercial Off-The Shelf Components (Book, Springer) |
| A method for built-in tests in component-based software maintenance | Yingxu Wang, Graham King, Hakan Wickberg | Proceedings of the Third European Conference on Software Maintenance and Reengineering |
| Research in testing COTS components-built-in testing approaches | Sami Beydeda | The 3rd ACS/IEEE International Conference on Computer Systems and Applications, 2005. |
| Built-In Contract Testing in Component Integration Testing | Hans-Gerhard Gross, Nikolas Mayer | Electronic Notes in Theoretical Computer Science |

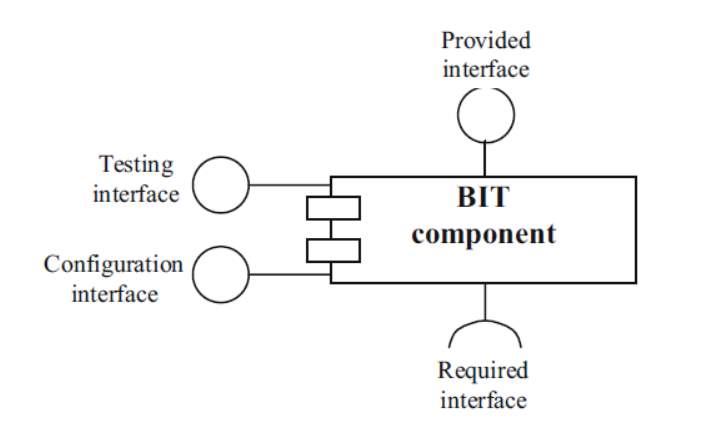

[insert-citation] proposes to provide commercial off-the shelf components with built-in testing material to increase component testability and configurability. It does so by using a testing interface for the given component that allows access to the inside of a COTS component and create scenario-based test operations from scratch. The following figure from the same study illustrates this approach.

Figure 3- Bit Component [insert-citation]

Here the testing interface gives access to the inside of the component, which helps in generating test cases. The provided interface is the one the component offers for interaction. The configuration interface is used to configure the component with respect to the system.

[insert-citation] describes a new kind of software component with built-in tests (BITs) that support the maintenance of the component. It discusses two modes for maintainable software: normal mode and maintenance mode. In normal mode the software behaves like a conventional system. In maintenance mode the built-in tests can be activated by executing them at the corresponding level of the functions. In maintenance mode the BIT capabilities also allow test results to be reported, and these results show whether each test passed for the given component.
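A minimal sketch of a component with the two modes is shown below. The class `BitStack`, the `maintenance_mode` flag, and the report format are hypothetical and only illustrate the idea. They are not the design of the cited paper.

```python
class BitStack:
    """A stack component that carries its own built-in tests (BITs)."""

    def __init__(self):
        self._items = []
        self.maintenance_mode = False  # Normal mode by default.

    def push(self, item):
        self._items.append(item)

    def pop(self):
        return self._items.pop()

    def _bit_push_pop(self):
        # Built-in test: run on a fresh instance so live data is untouched.
        probe = BitStack()
        probe.push(1)
        probe.push(2)
        return probe.pop() == 2 and probe.pop() == 1

    def run_built_in_tests(self):
        """Run the built-in tests and report a pass/fail result for each."""
        if not self.maintenance_mode:
            raise RuntimeError("built-in tests require maintenance mode")
        return {"push_pop": self._bit_push_pop()}
```

In normal mode the component behaves like an ordinary stack. Switching `maintenance_mode` on makes the test report available without changing the component's regular interface.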

[insert-citation] provides an overview of built-in testing approaches for software components and discusses their advantages and disadvantages. It gives a detailed description of a built-in testing approach known as the Component+ approach. One problem with built-in testing is that the generated test cases have to be stored within the component, which can increase both the size and the complexity of the component. To tackle this issue, an architecture with three types of components is proposed: BIT components, testers and handlers. BIT components are the components with built-in testing support, and they implement only the mandatory interfaces. Testers access the BIT-enabled components through the interfaces that the BIT components define. Handlers are used mainly for recovery purposes.

[insert-citation] proposes an approach for reducing manual system verification by using components that can check their execution environments at runtime. The motivation is that manual system verification is costly, time consuming and error prone. However, the paper gives no insight into the disadvantages of this approach, especially in terms of resource consumption.

- Integration and Unit Testing: Unit testing and integration testing are the fundamental approaches to testing any software system. Unit testing tests an individual software component, while integration testing tests the components as they are integrated. Some studies describe integration and unit testing strategies for software components. These are as follows:

| Name of Paper | Author/Authors | Published in |
| --- | --- | --- |
| An observational theory of integration testing for component-based software development | Hong Zhu, Xudong He | 25th Annual International Computer Software and Applications Conference. COMPSAC 2001 |
| Unit testing of software components with inter-component dependencies | George T. Heineman | International Symposium on Component-Based Software Engineering |
| Structural Testing of Component-Based Systems | Daniel Sundmark, Jan Carlson, Sasikumar Punnekkat and Andreas Ermedahl | International Symposium on Component-Based Software Engineering |

[insert-citation] analyses the structure of white-box integration testing and proposes a family of integration testing methods for software components, together with axioms for the integration testing of concurrent systems. It does so by applying the theory of behaviour observation to integration testing. The paper proposes three white-box integration testing methods. The first tests component statements without recording their execution sequences or related information, which makes it quite similar to a black-box approach. The second records testing information not only for non-component-related events but also for component-related events and parameters. The third extends the second by recording even more information about the statements being executed.

[insert-citation] argues that test-driven development (TDD) cannot be applied directly to component-based software, because such software has different and somewhat more complex life-cycles. The study presents two case studies in which TDD is applied in the context of component-based software engineering.

The paper identifies that software components have dependencies, which makes it difficult to apply a TDD approach to component testing. It introduces the concept of mock objects for unit testing software components. These objects have clear expectations of the calls they are going to receive. By extension, there can be mock components, which need to be packaged, deployed and assembled into test applications.
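The mock-object idea can be illustrated with Python's standard `unittest.mock` module. The components `OrderComponent` and its payment dependency are hypothetical examples and do not appear in the cited paper, which also addresses packaging and deployment of mock components that this sketch leaves out.

```python
from unittest.mock import Mock


class OrderComponent:
    """Component under test; it depends on a separate payment component."""

    def __init__(self, payment):
        self.payment = payment

    def checkout(self, amount):
        if amount <= 0:
            raise ValueError("amount must be positive")
        return "confirmed" if self.payment.charge(amount) else "declined"


def check_checkout_charges_once():
    # The mock stands in for the real payment component.
    payment = Mock()
    payment.charge.return_value = True
    order = OrderComponent(payment)
    status = order.checkout(30)
    # Expectation: exactly one call to charge, with the checkout amount.
    payment.charge.assert_called_once_with(30)
    return status
```

Because the dependency is replaced by a mock, `OrderComponent` can be tested before the real payment component exists, which is what a test-first workflow requires.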

[insert-citation] describes the extra test effort required when components are integrated. It introduces the concept of compositionally introduced test items: test items that, for a given test criterion, exist only at the current level of integration and above. They have two sources. One is the interaction between the integrated components, and the other is the extra code added solely to integrate the components.

- Others: Some studies are too specific to be grouped into any of the above categories, so they are listed here. The papers that follow these unique testing strategies are given in the following table:

| Name of Paper | Author/Authors | Published in |
| --- | --- | --- |
| Component customization testing technique using fault injection technique and mutation test criteria | Hoojin Yoon, Byoungju Choi | Mutation testing for the new century (Springer) |
| Components have Test-Buddies | Pankaj Jalote, Rajesh Munshi, Todd Proebsting | International Symposium on Component-Based Software Engineering |
| An approach for component testing and its empirical validation | Fernando R.C. Silva, Eduardo S. Almeida, Silvio R.L. Meira | Proceedings of the 2009 ACM symposium on Applied Computing |
| Components integration-effect graph: a black box testing and test case generation technique for component-based software | Umesh Kumar Tiwari, Santosh Kumar | International Journal of System Assurance Engineering and Management |
| Isolated testing of software components in distributed software systems | Francois Thillen, Richard Mordinyi, Stefan Biffl | Software Quality. Model-Based Approaches for Advanced Software and Systems Engineering |
| Towards incremental component compatibility testing | Ilchul Yoon, Alan Sussman, Atif Memon, Adam Porter | ACM Press |
| The Self-Testing COTS Components (STECC) Strategy – a new form of improving component testability | Sami Beydeda and Volker Gruhn | Proceedings of the 29th Euromicro Conference (EUROMICRO’03) |
| Components based testing using optimization AOP | Haeng-Kon Kim, Roger Y Lee | Computer and Information Science 2010 |

[insert-citation] proposes a component customization testing technique that uses fault injection and mutation test criteria. Component customization includes any activity that modifies an existing component for reuse, such as modifying attributes or adding new domain-specific functions. Software fault injection is a technique that performs tests by simulating faults at various points in the program code and examining how the entire system behaves. A component has two distinct sections: a white-box class and a black-box class. The black-box class contains the core functions of the component. Its source code is not disclosed, so the user cannot modify it. The white-box class is the interface part of the component. Its source code is open to the component user, who can modify it. The paper addresses the question of where fault code should be injected so that the component is tested sufficiently for faults. It proposes injecting the fault code between the white-box class and the black-box class, at the points where the two interact. This interaction area is called the fault injection target, and the fault injection operator is defined as the operator responsible for injecting fault code into this area.
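The placement of the injected fault can be sketched as a wrapper that sits between the two classes. All class names below, and the choice to inject faults by mutating call arguments, are assumptions made for illustration. The cited paper's fault injection operators are not reproduced.

```python
class BlackBoxCore:
    """Core functions; the source is assumed closed to the component user."""

    def discounted_price(self, price, percent):
        if not 0 <= percent <= 100:
            raise ValueError("percent out of range")
        return price * (100 - percent) // 100


class WhiteBoxInterface:
    """Customizable part of the component; the user may edit `percent`."""

    def __init__(self, core, percent=10):
        self.core = core
        self.percent = percent

    def final_price(self, price):
        return self.core.discounted_price(price, self.percent)


class FaultInjector:
    """Sits at the white-box/black-box boundary and mutates each call."""

    def __init__(self, core, mutate):
        self._core = core
        self._mutate = mutate  # Function mapping (price, percent) to new values.

    def discounted_price(self, price, percent):
        return self._core.discounted_price(*self._mutate(price, percent))
```

Wrapping the core as `FaultInjector(BlackBoxCore(), lambda price, percent: (price, 150))` simulates a faulty customization. A test suite for the customized component is adequate with respect to this fault if it notices the resulting error.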

[insert-citation] proposes that the testing of other components is often the largest source of defects found in a given component. The paper describes how, for a given component, there exists a small set of other components called ‘Test Buddies’, whose testing reveals most of the defects in that component that are found by testing other components.

[insert-citation] presents an approach to support component testing with the goal of reducing the information gap between component producers and component consumers. Two workflows are presented, one for the component producer and one for the component consumer. The producer workflow describes the activities needed so that the producer can supply a component with enough information for the consumer to use it according to their requirements. The consumer workflow describes the activities to perform in order to test the component properly.

[insert-citation] proposes a functional testing strategy and test case generation technique for component-based software. The strategy is called the integration-effect graph, and it is a black-box technique. In the integration-effect graph, components interact through request and response edges, which correspond to interacting with a component and providing the required parameters. From the integration-effect graph an integration-effect matrix can be constructed, which can then be used to generate test cases.
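A simplified sketch of the graph-to-matrix step follows. It assumes the matrix is a 0/1 adjacency matrix over directed request and response edges and that one test case is derived per non-zero entry. The cited paper's exact matrix definition may differ, and the function names are hypothetical.

```python
def integration_effect_matrix(components, edges):
    """Build a 0/1 matrix with a 1 wherever one component calls or answers another."""
    index = {name: i for i, name in enumerate(components)}
    matrix = [[0] * len(components) for _ in components]
    for source, target, _kind in edges:  # kind is "request" or "response"
        matrix[index[source]][index[target]] = 1
    return matrix


def interaction_test_cases(components, matrix):
    """Derive one (caller, callee) test case per interaction in the matrix."""
    return [
        (components[i], components[j])
        for i in range(len(components))
        for j in range(len(components))
        if matrix[i][j]
    ]
```

For two components where `UI` sends a request to `Auth` and `Auth` sends a response back, the matrix has two non-zero entries and therefore yields two interaction test cases.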

[insert-citation] provides a new approach called ‘Effective Tester in Middle’, which improves the testing of components that depend on other distributed components. The approach uses test-scenario-specific interaction models and network communication models, which make it possible to test components in isolation without running the entire system.

[insert-citation] describes algorithms that compute the differences in component compatibilities between the current build and the previous build. The overall goal of the paper is to provide an approach for incremental compatibility testing of component-based systems.

[insert-citation] proposes a strategy that addresses the needs of both the component provider and the component user. It augments a component with functionality that is normally specific to testing tools. A component with this functionality can test its own methods by carrying out the activities of the user's testing process, which makes it self-testing.

[insert-citation] proposes a way to reduce the redundant test cases for a given component. Because a component can have many different test scenarios, some redundant test cases may make it into the system. The paper uses formal concept analysis to analyse the coverage of the test cases and eliminate the redundant ones.
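The effect of such a reduction can be sketched with a much simpler technique than formal concept analysis: a test case is treated as redundant when everything it covers is already covered by another retained test case. This subset check is a stand-in chosen for illustration, not the method of the cited paper, and `drop_redundant_tests` is a hypothetical name.

```python
def drop_redundant_tests(coverage):
    """Keep only the tests whose coverage is not contained in a kept test's."""
    kept = {}
    # Visit tests with larger coverage first; break ties by name.
    for name in sorted(coverage, key=lambda n: (-len(coverage[n]), n)):
        covered = set(coverage[name])
        if not any(covered <= other for other in kept.values()):
            kept[name] = covered
    return sorted(kept)
```

With coverage `{"t1": {"a", "b", "c"}, "t2": {"a", "b"}, "t3": {"d"}}`, the test `t2` is dropped because `t1` already covers everything it exercises.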

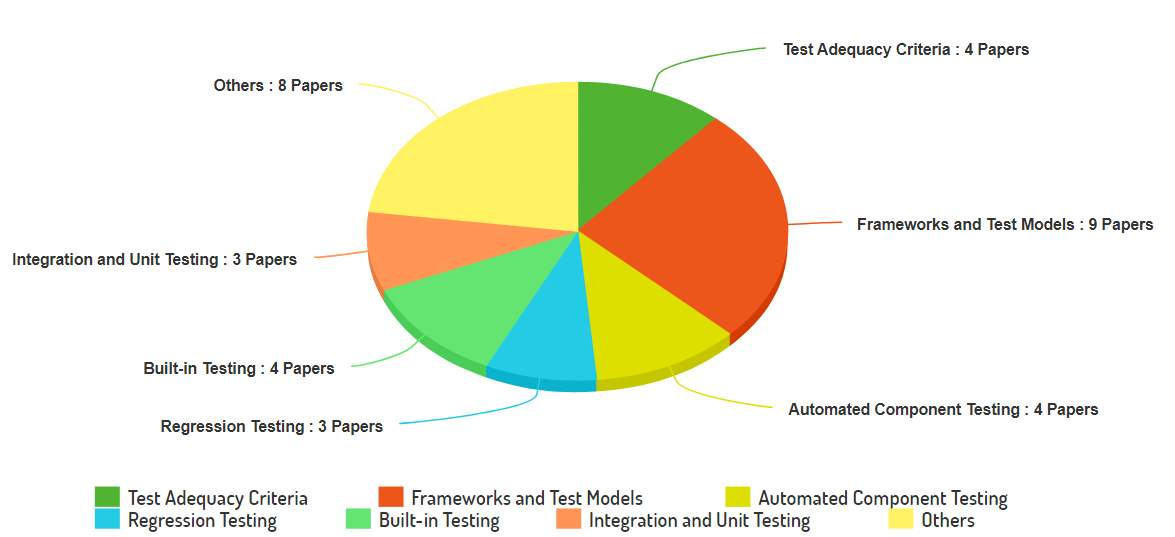

The distribution of all these papers in each of the categories have been illustrated with the following pie chart-

The chart shows that testing strategies based on frameworks and test models are the most prevalent, as most of the studies focus on them. Many studies follow a specific strategy for testing software components and cannot easily be placed in any of the existing categories, so they are shown in the Others section, which has the second highest number of studies. Each of the remaining categories contains either three or four papers.

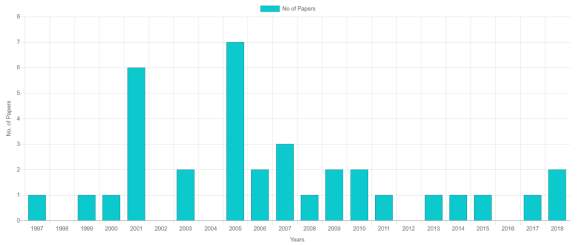

The distribution of the papers over the years have been shown in the following bar graph-

According to the graph, 2005 has the largest number of papers, followed by 2001. There has also been little growth since 2007: each of the following years has only one or two papers that discuss a specific component testing strategy.
