Prediction for Big Data & IoT in 2017: Live Project-sentiment Analysis of Twitter Data

Info: 7001 words (28 pages) Dissertation

Published: 1st Mar 2022

ABSTRACT

Big data and the so-called Internet of Things (IoT) are intimately connected: billions of Internet-connected “things” will, by definition, generate huge amounts of data.

By 2020, the world will have generated around 40 zettabytes of data, or 5,127 gigabytes per individual, according to an estimate by research firm International Data Corporation. It’s no wonder that in 2006, market researcher Clive Humby declared data to be “the new oil”.

Companies are sharpening their focus on analysing this deluge of data to understand consumer behaviour patterns. A report by software body Nasscom and Blueocean Market Intelligence, a global analytics and insight provider, predicts that the Indian analytics market will cross the $2 billion mark by this year.

Companies are using big data analytics for everything: driving growth, reducing costs, improving operations and recruiting better people.

A major portion of the orders of e-commerce companies now come through their analytics-driven systems. These companies record the purchasing behaviour of customers and customise things for them. Travel companies, for their part, use data analytics to understand their customers, from basic things like their travel patterns, the kind of hotels they like to stay in and who their typical co-travellers are, to their experiences, all geared to giving the customer a personalised experience the next time the customer visits the website.

In hospitals, intelligence derived from data helps improve patient care through quicker and more accurate diagnoses, drug dose recommendations and the prediction of potential side effects. Millions of electronic medical records, public health data and claims records are being analysed.

Predictive care using wearables to check vital medical signs and remote medicine could cut patient waiting times, according to a 13 December report by the McKinsey Global Institute. International Business Machines Corp.

Many companies globally and in India, including some start-ups, are using machine-learning tools to infuse intelligence into their business by using predictive models. Popular machine-learning applications include Google’s self-driving car, online recommendations from e-commerce companies like Amazon and Flipkart, online dating service Tinder and streaming video platform Netflix.

Railigent, Siemens AG’s platform for the predictive maintenance of trains, listens to the trains running over its sensors and can detect, from the sound of the wheels, which wheel is broken and when it should be replaced.

Predictive algorithms are used in recruitment too. Aspiring Minds, for example, uses algorithms powered by machine learning that draw on data to deal with complex problems, for instance, to accurately gauge the quality of speech in various accents against a neutral accent (also using natural language processing). This helps companies improve recruitment efficiency by over 35% and reduce voice evaluation costs by 55%.

There are still challenges in bringing about wider technology adoption.

“We often find that senior executives understand the concepts around big data and advanced analytics, but their teams have difficulty defining the path to value creation and the implications for technology strategy, operating model and organization. Too often, companies delegate the task of capturing value from better analytics to the IT department, as a technology project,” the note said.

In the 2006 film Déjà Vu, law enforcement agents investigate an explosion on a ferry that kills over 500 people, including a large group of party-going sailors. They use a new program that uses satellite technology to look back in time for four-and-a-half days, to try to capture the terrorist.

Predictive policing is certainly not as advanced today. And advances in predictive analytics can certainly raise ethical issues. For instance, the police may in the future be able to predict who might become a serial offender, and make an intervention at an early stage to change the path followed by the person, as is the case in Déjà Vu. Or an insurer may use predictions to increase the premium or even deny a user insurance.

Any disruptive technology needs checks and balances in the form of sensible policy if it is to deliver on its potential.

TABLE OF CONTENTS

INTRODUCTION

CHAPTER ONE Big Data & IOT- Two sides of the same coin?

Big Data

Internet of Things(IoT)

Bringing the two practices together

The Challenges

CHAPTER TWO Big Data & your sector in 2017

Retail

Manufacturing

Telecom

Agriculture

Healthcare

CHAPTER 3 Big Data & IOT Predictions for 2017

Big Data and IOT will evolve

Top executives will assume a bigger role

Cognitive era will emerge

Migration of mission-critical applications to cloud will increase

Review by experts

CHAPTER 4 Live Project Sentiment Analysis of Twitter Data

Abstract

Introduction

Hadoop

Approach

Real Time data and features

Part of Speech

Root Form

Sentiment Directory

Map-Reduce Algorithm

Time Efficiency

Conclusion

REFERENCES

INTRODUCTION

2016 has been a year of transition for many communications service providers (CSPs). We’ve seen various network functions virtualization (NFV) projects initiated and new 5G developments announced on a monthly basis. This transition looks set to continue into 2017 as data monetization becomes a reality, prediction technology advances and the concept of ‘data for social good’ arrives.

“Data and analytics are already shaking up multiple industries, and the effects will only become more pronounced as adoption reaches critical mass,” said the McKinsey Global Institute (MGI) in its executive summary of last month’s report, “The Age of Analytics: Competing in a Data-Driven World.”[3]

2016 was an exciting year for big data, with organizations developing real-world solutions and big data analytics making a major impact on their bottom line. 2017 will see a continuation of these big data trends as technology becomes smarter with the implementation of deep learning and AI by many organizations. Growing adoption of artificial intelligence, growth of IoT applications and increased adoption of machine learning will be the key to success for data-driven organizations in 2017. Here’s a sneak peek into what big data leaders and CIOs predict about the emerging big data trends for 2017.

There are many roles in the big data industry, but broadly they can be classified into two categories:

- Big Data Engineering

- Big Data Analytics

These fields are interdependent but distinct.

Big data engineering revolves around the design, deployment, acquisition and maintenance (storage) of a large amount of data. The systems that big data engineers are required to design and deploy make relevant data available to various consumer-facing and internal applications. Big data analytics, by contrast, revolves around the concept of utilizing the large amounts of data from the systems designed by big data engineers. Big data analytics involves analyzing trends and patterns and developing various classification, prediction and forecasting systems. [4]

Thus, in brief, big data analytics involves advanced computations on the data, whereas big data engineering involves the design and deployment of the systems and setups on top of which that computation is performed.

CHAPTER ONE: BIG DATA AND IOT - TWO SIDES OF THE SAME COIN?

The future of technology lies in data and its analysis. More objects and devices are now connected to the internet, transmitting the information they gather back for analysis. The goal is to harness this data to learn about patterns and trends that can be used to make a positive impact on our health, transportation, energy conservation, and lifestyle. However, the data itself doesn’t produce these objectives; rather, it is the solutions that arise from analyzing it and finding the answers we need. Two terms are mentioned in relation to this future: big data and the Internet of Things (IoT). It’s hard to talk about one without the other, and although they’re not the same thing, the two practices are closely intertwined. Let’s take a closer look at the two practices before we examine their connection:

Big Data

Big data existed long before the IoT burst onto the scene to perform analytics. Data is defined as big data when it demonstrates the four V’s: volume, variety, velocity, and veracity. This equates to an enormous amount of data that can be both structured and unstructured, while velocity refers to the speed of data processing, and veracity determines its uncertainty.

The Internet of Things

The concept of IoT aims to take a wide range of “things” and turn them into smart objects: anything from watches to fridges, cars and train tracks. Products that normally wouldn’t be connected to the internet and able to collect and process data are equipped with sensors and computer chips for data gathering. However, unlike chips used in PCs, smartphones, and mobile devices, these chips are used mainly for gathering data that indicates customer usage patterns and product performance. The IoT is essentially the means that collects and sends data. Data from IoT devices resides in big data and is measured against it. And IoT will soon touch every aspect of our lives: transportation (cars, smart train tracks and traffic lights), manufacturing, smart homes (thermostats and voice-activated appliances), and of course consumer goods like smartphones, wearables, and more.

Bringing the two practices together

This disruptive technology requires new infrastructures, including hardware and software applications, as well as an operating system; enterprises will need to deal with the flow of data that starts flowing in and analyze it in real time as it grows by the minute.

That’s where big data comes in; big data analytics tools are capable of handling masses of data transmitted from IoT devices that produce a continuous stream of information.

But just to differentiate the two, the IoT delivers the data from which big data analytics can draw the information to create the insights required of it. However, the IoT brings data on a different scale, so the analytics solution should accommodate its needs of rapid ingestion and processing followed by an accurate and fast extraction. Solutions like SQream Technologies deliver near-real-time analytics on massive datasets, and essentially condense a full-rack database into a small server processing up to 100 TB, so minimal hardware is required. The next-generation analytics database leverages GPU technology, allowing even further downsizing of the hardware, i.e. big data in the car, or 5 TB on a laptop. This helps IoT companies correlate the growing number of data sets, which helps them get real-time responses and adapt to changing trends, solving the size challenge without compromising on performance.

The challenges

By 2020, it’s projected that 20.8 billion “things” will be used globally as the Internet of Things continues its expansion. As a result, we will also see major cybersecurity issues and safety concerns arise, as cybercriminals could potentially break into the power grid, traffic systems, and any other connected system that contains sensitive data and could shut down cities.

Internet security platforms like Zscaler offer IoT devices protection against security breaches with a cloud-based solution. You can route the traffic through the platform and set policies for the devices so that they won’t communicate with unnecessary servers.

The Internet of Things and big data share a closely knit future. There is no doubt the two fields will create new opportunities and solutions that will have a long and lasting impact. [8]

CHAPTER TWO: BIG DATA AND YOUR SECTOR IN 2017

This year we’re going to see businesses of all sizes realise the potential of big data. Given the widely advertised benefits around customer intelligence, sales tracking and working processes, to name a few, adoption rates of data analytics are growing quickly, especially as heads of business are starting to understand its potential.

But, in managing such huge volumes of data, business owners can be forgiven for being overwhelmed and unsure where to start. So, to help, I canvassed the views of some of the most established names in business, who have been working with data for some time, to understand from them what is going to be big in their sector this year and what other businesses should be looking out for:

Retail

Jon Everitt, Group Data Architect at Capital International, provided some interesting insights into how big data will continue to disrupt the retail sector, acting as an increasingly important means of competitive advantage. Speaking about the biggest changes we can expect to see in 2017, Jon says that “with technology architecture rapidly evolving, the time taken to identify credible business insight will be further reduced, enabling greater business agility and a better experience for retail customers. Predictive analytics will also be huge this year. Modern data architecture is able to stream information in real time to bring in larger and more disparate data sets. The classic example is consumer behaviour analysis for retail: what adverts people consume, website dwell times, and click-through rates. The next step isn’t just about seeing what people have done, but what they didn’t do, and why.”

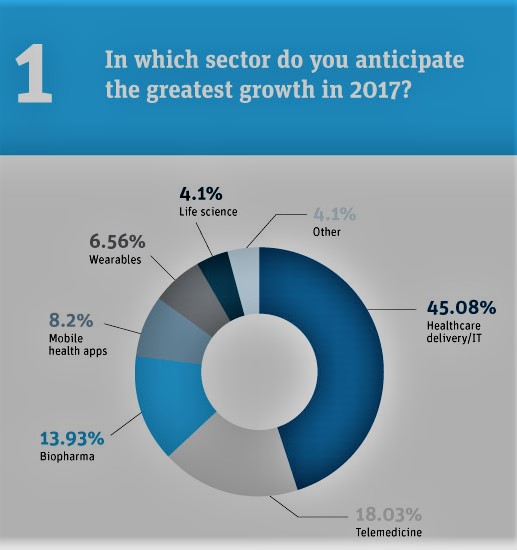

Healthcare

In 2017 and beyond, big data will help us to assess health outcomes more accurately, according to Daniel Ray, Director of Data Science at NHS Digital. Elaborating on this, Daniel explained that big data will play an increasingly important role in analyzing treatment options and associated cancer patient survival rates next year: “Using big data, we’re able to analyze why survival rates are so much higher in some parts of the country than others; for specific diseases it ranges from a 5-10% survival rate to a 60% survival rate in different parts of the country. There is a lot more we’ll be able to do when we can link these datasets together, in terms of understanding and addressing this variation, under appropriate governance.”

Agriculture

From my own point of view, agriculture will be one of the biggest movers in the big data industry. We’re seeing huge use cases in agriculture, and when you dig into the data, you’ll see that this is becoming a very detailed industry sector that now commands greater intelligence and predictive power. Everything from weather patterns to climate change and natural disasters can have a huge impact on business outcomes in agriculture, so for those not benefiting from actionable analytics, business outcomes will at best fail to meet their full potential, and at worst could prove financially disastrous.

Telecoms

My colleague, Eliano Marques, Global Data Science Practice Lead at Think Big, also expects to see huge strides forward in the telecoms sector, specifically around breaking silos across departments and functions, with companies starting to “adopt an integrated big data strategy that will be able to better identify what would be the best-recommended action for a customer. It is only when telcos break out of these silos that they’ll be able to work at scale with big data, sharing it effectively across the business to enable more accurate predictions about what to do next with customers.”

He also believes we’ll see more ‘operationalization of advanced projects’, running in production and extracting business value. “Telcos are huge, and they need to be using big data on a much larger scale than they’re currently managing. Partnerships with social media giants are inevitable, at which point the amount of data coming into the networks will reach record levels. The opportunity to monetize on this level is huge, but only if telcos can manage smarter frameworks to exploit today’s big data technologies.”

Manufacturing

Finally, one of the sectors hotly tipped to continue its adoption of big data is manufacturing. One of the big things that manufacturers are looking to do is save money and reduce their costs, and data analytics is now helping them find ways to do just that. It is revealing problems and issues that were otherwise hidden or were found much later in the manufacturing process. This is enabling manufacturers to make the necessary changes in process, in near real time, that can lead to higher yields, lower costs, and better-quality products.

2017 is expected to be the year of big data roll-out on a larger scale, and across more geographic markets. The implications of not doing so are simply becoming too evident and, as such, are feeding a growing business intolerance for operating on instinct as opposed to intelligence.

CHAPTER THREE: Big Data and IoT Predictions for 2017

Big data and IoT will evolve

Big data and IoT are bound to evolve with time and will help businesses flourish in uncertain times in several ways. Firstly, self-service data preparation will unleash big data’s potential. Furthermore, self-service reporting will be replaced by embedded analytics in organizations. Then IoT adoption and convergence with big data will make automated data preparation a requirement. Early adopters of artificial intelligence and machine learning in analytics are likely to gain a huge first-mover advantage in the digitalization of business. Finally, cybersecurity will be the most important big data use case.

Top executives will assume a bigger role

To improve data center efficiency, C-level executives will have to play a larger role in the IT facility’s success. As data center and IT strategies continue to have an impact on companies’ bottom line, the responsibility of top executives will increase, given the significant losses caused by data center outages. In order to take up the responsibilities concerning data center success, top management will have to understand the data center concept, the issues that IT managers encounter regularly, financial limitations, and the need for modern tools like automation, all of which aim at improving IT.

With a deeper understanding of the data center and the functioning of IT teams, C-level executives will be able to contribute in a better way toward improving a variety of IT issues like business continuity, disaster recovery, green data centers and many more.

Cognitive era will emerge

The cognitive era of computing will make its debut in 2017, as it will enable the convergence of artificial intelligence, business intelligence, machine learning and real-time analytics in a number of ways that will go on to make real-time intelligence a reality. All of this is possible only because of the exceptional performance delivered by hardware acceleration of in-memory data stores. With such a system, there is no need to define a schema or index in advance, as GPU acceleration makes it possible to perform the exploratory analytics that will be needed for cognitive computing.

Migration of mission-critical applications to cloud will increase

While migration of older systems and applications to newer encrypted approaches was considered a little too fragile, migration to cloud servers will increase significantly in 2017, say experts. Not only are customer-facing applications and websites such as e-commerce sites hosted on cloud servers; other large enterprise resources are also making their way to the cloud. Cloud-based technology is the future of the IT world as it enables organizations to become more agile, while also offering considerable cost savings.[7]

REVIEWS BY EXPERTS

1. Blockchain transforms select financial service applications. In 1998, Nick Szabo wrote a short paper entitled “The God Protocol.” Szabo mused about the creation of a be-all end-all technology protocol, one that designated God the trusted third party in the middle of all transactions. This trust protocol provides a global distributed ledger that changes the way data is stored and transactions are processed. The blockchain runs on computers distributed worldwide where the chains can be viewed by anyone. Transactions are stored in blocks where each block refers to the preceding block, and blocks are time-stamped, storing the data in a form that cannot be altered. Hackers find it impossible to hack the blockchain since the world has a view of the entire blockchain. Blockchain provides obvious efficiency for consumers. For example, customers won’t have to wait for that SWIFT transaction or worry about the impact of a central datacenter leak. For enterprises, blockchain presents a cost savings and opportunity for competitive advantage. In 2017, there will be select, transformational use cases in financial services that emerge with broad implications for the way data is stored and transactions processed. – John Schroeder, executive chairman and founder, MapR

2. C-level executives will assume a bigger role in the data center’s success in 2017. This will occur as both data centers and IT strategies continue to have a bigger impact on an enterprise’s bottom line, a trend we saw in 2016, evidenced by companies losing millions following data center outages. To take on a bigger role in data center success, the C-suite will have to learn to speak the same language as IT, understand the issues that IT routinely faces, budget constraints, and the necessity of advanced tools like automation within the IT environment, all with the goal of helping to address IT’s needs. Equipped, for the first time, with a deeper knowledge base and operational understanding of the data center and how IT teams operate, the C-suite will be in a position to help improve a wide array of issues, including disaster recovery strategies, business continuity, ways to automate manual or tedious processes, and green data center initiatives. – Jeff Klaus, GM, Data Center Solutions, Intel

3. Big data and IoT systems will evolve in 2017 to help businesses prosper during uncertain times in five ways. Self-service data prep will unlock big data’s full value; organizations will replace self-service reporting with embedded analytics; IoT’s adoption and convergence with big data will make automated data onboarding a requirement; 2017’s early adopters of AI and machine learning in analytics will gain a huge first-mover advantage in the digitalization of business; and cybersecurity will be the most prominent big data use case. – Quentin Gallivan, CEO of Pentaho

4. Spark still isn’t fully fleshed out as a technology. Spark will evolve into something that’s quite different from what it is today. They will have to address integration with persistent storage and sharing. – Michael Stonebraker, co-founder and CTO of Tamr, and recipient of the 2014 A.M. Turing Award

5. The Internet of Things architect role will eclipse the data scientist as the most valuable unicorn for HR departments. The surge in IoT will produce a surge in edge computing and IoT operational design. Thousands of resumes will be updated overnight. Additionally, fewer than 10% of companies realize they need an IoT analytics architect, a distinct species from the IoT system architect. Software architects who can design both distributed and central analytics for IoT will soar in value. – Dan Graham, Internet of Things technical marketing specialist, Teradata

6. The cognitive era of computing will make its debut. The cognitive era of computing will make it possible to converge AI, business intelligence, machine learning and real-time analytics in various ways that will make real-time intelligence a reality. Such “speed of thought” analyses would not be possible were it not for the unprecedented performance afforded by hardware acceleration of in-memory data stores. By delivering extraordinary performance without the need to define a schema or index in advance, GPU acceleration provides the ability to perform exploratory analytics that will be required for cognitive computing. – Eric Mizell, VP of global solutions engineering, Kinetica

7. Metadata makes a management move. Given that the amount of data in the world doubles every two years, data needs to be managed far more effectively on the enterprise end of the data consumption chain. Metadata is the data about our data, such as when a file was created, when it was last opened, what application uses it, and so forth. As IT begins to be able to finally see exactly what data is cold, and what is hot, it will get much easier to align data to the different storage resources available to enterprises today. The result will be much less overspending and far more optimization for companies who begin to manage by the intelligence available in their metadata. – Lance Smith, CEO of Primary Data

8. In 2017, organizations will stop letting data lakes be their proverbial ball and chain. Centralized data stores still have a place in initiatives of the future: how else can you compare current data with historical data to identify trends and patterns? Yet relying solely on a centralized data strategy will ensure data weighs you down. Rather than a data lake-focused approach, organizations will begin to shift the bulk of their investments to implementing solutions that enable data to be utilized where it’s generated and where business processes occur: at the edge. In years to come, this shift will be understood as especially prescient, now that edge analytics and distributed strategies are becoming increasingly important parts of deriving value from data. – Adam Wray, CEO of Basho

9. 2017 will be the year organizations begin to rekindle trust in their data lakes. The “dump it in the data lake” mentality compromises analysis and sows distrust in the data. With so many new and evolving data sources like sensors and connected devices, organizations must be vigilant about the integrity of their data and expect and plan for regular, unanticipated changes to the format of their incoming data. Next year, organizations will begin to change their mindset and look for ways to constantly monitor and sanitize data as it arrives, before it reaches its destination. – Girish Pancha, CEO and founder, StreamSets

10. Digital transformation drives the next wave of cloud. Enterprise-level internal resources, including business-critical applications, are now being moved to the cloud. This is a new development, as internal-facing applications are traditionally kept internal. The challenge with migrating old systems and applications to a newer encrypted approach is that the network capabilities can be stretched thin or become too fragile. This ultimately creates complexities tied to application planning, performance monitoring and final migration to the cloud. Digital transformation isn’t a fad, and we expect to see the migration of critical applications to the cloud increase in 2017 across all markets. Large enterprise clouds are now being adopted beyond just customer-facing resources like e-commerce websites. Having visibility is important in order to understand how things are being impacted as organizations change the digital landscape. Cloud-only and internet-only transport are the future as they allow enterprise organizations to become more nimble and agile, while also providing cost savings. – Sean Applegate, Senior Director, Technology Strategist at Riverbed[9]
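
The first prediction in the list above describes blocks that are time-stamped and that each refer to the preceding block, so that stored data cannot be quietly altered. A minimal sketch of that idea in Python follows. It is an illustration only and not a real blockchain: it has no network, no consensus and no mining, and the names `block_hash`, `add_block` and `is_valid` are hypothetical helpers chosen for this example.

```python
import hashlib
import json
import time


def block_hash(block):
    """Hash every field of a block except its own stored hash."""
    payload = {key: value for key, value in block.items() if key != "hash"}
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def add_block(chain, transactions):
    """Append a time-stamped block that refers to the preceding block's hash."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    block = {
        "index": len(chain),
        "timestamp": time.time(),
        "transactions": transactions,
        "prev_hash": prev_hash,
    }
    block["hash"] = block_hash(block)
    chain.append(block)
    return block


def is_valid(chain):
    """Check each block's own hash and its link to the preceding block."""
    for i, block in enumerate(chain):
        if block["hash"] != block_hash(block):
            return False  # the block's contents were changed after hashing
        expected_prev = chain[i - 1]["hash"] if i > 0 else "0" * 64
        if block["prev_hash"] != expected_prev:
            return False  # the link to the preceding block is broken
    return True
```

Changing a transaction in an early block changes that block's hash, which no longer matches the reference held by the next block, so `is_valid` reports the tampering. In a real distributed ledger, many independent copies of the chain make such a change visible to everyone.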

CHAPTER FOUR: LIVE PROJECT-SENTIMENT ANALYSIS OF TWITTER DATA

Abstract

Twitter, one of the largest social media sites, receives millions of tweets every day. This huge amount of data can be used for industrial or business purposes by organizing it according to our requirements and processing it. This paper presents a method of sentiment analysis using Hadoop, which can process the large amount of data on a Hadoop cluster faster, in real time.

Keywords: Sentiment analysis, Streaming APIs, OpenNLP, WordNet

INTRODUCTION

Today, the textual data on the internet is growing at a rapid pace. Different industries are trying to use this huge body of textual data to extract people’s views of their products. Social media is a vital source of information in this case. It is impossible to manually analyze such a large amount of data, and this is where the need for automatic categorization becomes apparent. Subjective data is usually what is analyzed in this case. There are a large number of social media websites that enable users to contribute, modify and grade content. Users have an opportunity to express their personal opinions about specific topics. Examples of such websites include blogs, forums, product review sites, and social networks. In this case, Twitter data is used. Sites like Twitter contain predominantly short comments, such as status messages on social networks like Twitter or article reviews on Digg. Additionally, many websites allow rating the popularity of the messages, which can be related to the opinion expressed by the author. The main focus of our project is to assign a polarity to each tweet, i.e. whether the author expresses a positive or negative opinion.
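
To make the idea of assigning a polarity to each tweet concrete, here is a minimal lexicon-based sketch in Python. It is not the project's pipeline: the tiny `SENTIMENT_LEXICON` is a hypothetical stand-in for a real sentiment dictionary, the tokenizer is deliberately crude, and the "neutral" label for a zero score is an assumption added for this example.

```python
import re

# Hypothetical toy lexicon; a real system would load a much larger dictionary.
SENTIMENT_LEXICON = {
    "good": 1, "great": 2, "love": 2, "happy": 1,
    "bad": -1, "terrible": -2, "hate": -2, "sad": -1,
}


def tokenize(tweet):
    """Lower-case the tweet and keep runs of letters and apostrophes."""
    return re.findall(r"[a-z']+", tweet.lower())


def polarity(tweet):
    """Sum the lexicon scores of the tweet's words and map the sum to a label."""
    score = sum(SENTIMENT_LEXICON.get(token, 0) for token in tokenize(tweet))
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"  # assumption: no sentiment words found, or they cancel out
```

For instance, `polarity("I love this phone, great battery")` sums to a positive score, while a tweet with no lexicon words falls through to "neutral". Scoring word by word like this ignores negation and sarcasm, which is one reason such an approach trades accuracy for speed.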

HADOOP

The Hadoop platform was designed to solve problems that involve a lot of data to process. It uses the divide-and-conquer approach for processing. It is used to handle large and complex unstructured data that does not fit into tables. Twitter data, being relatively unstructured, can best be stored using Hadoop. Hadoop also finds many applications in online retail, search engines, risk analysis in the finance domain, etc.

HDFS

The Hadoop Distributed File System (HDFS) is a distributed file system that runs on commodity machines. It is highly fault tolerant and is designed for low-cost machines. HDFS provides high-throughput access to application data and is suitable for applications with large amounts of data. HDFS has a single-master architecture: a single NameNode, the master server, regulates filesystem access. DataNodes handle read and write requests from the filesystem’s clients. They also perform block creation, deletion, and replication upon instruction from the NameNode. Replication of data in the filesystem adds to the data integrity and the robustness of the system. Data replication is done to achieve fault tolerance. Large data files in the cluster are stored as a sequence of blocks. Block size and replication factor are configurable. The replication factor is set to 3 in our project, which means three copies of the same data block are maintained at a time in the cluster.
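To make the effect of the replication factor concrete, the following minimal sketch estimates how many blocks a file occupies and how much raw disk space it consumes across the cluster. The function name and the 128 MB block size are assumptions for illustration (the project's block size is not stated in the text); only the replication factor of 3 comes from the project.

```python
import math

def hdfs_footprint(file_size_mb, block_size_mb=128, replication=3):
    """Estimate the HDFS footprint of one file.

    block_size_mb=128 is an assumed value; replication=3 matches the project.
    Returns (logical blocks, stored block replicas, raw storage in MB).
    """
    # A file is split into fixed-size blocks; the last block may be partial.
    blocks = math.ceil(file_size_mb / block_size_mb)
    # Every block is stored `replication` times on different DataNodes.
    replicas = blocks * replication
    # A partial last block only occupies its actual size, so raw usage
    # is simply the file size multiplied by the replication factor.
    raw_storage_mb = file_size_mb * replication
    return blocks, replicas, raw_storage_mb

# A 1000 MB file of collected tweets under the assumed settings.
print(hdfs_footprint(1000))
```

With these assumed settings, losing any single DataNode still leaves two copies of every block, which is the fault tolerance the replication is meant to provide.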

APPROACH

In our approach, we focused more on the speed of performing the analysis than on its accuracy, i.e. on performing sentiment analysis on big data. This is achieved by splitting the processing into the modules described in the following steps and using Hadoop to map the work onto different machines. The tweets are part-of-speech tagged using OpenNLP. This tagging is used for the following purposes.

1) Stop word removal: Stop words such as a, an and this, which are not useful in performing sentiment analysis, are removed in this phase. Stop words are tagged as DT (determiner) in OpenNLP. Words having this tag are not considered.

2) Unstructured to structured: Twitter comments are mostly unstructured, e.g. ‘awesome’ is written as ‘aswm’ and ‘happy’ as ‘happyyyyyy’. Conversion to structured form is done using a dynamic database of unstructured-to-structured records and by adding vowels.

3) Emoticons: These are the most expressive means available for conveying an opinion. Emoticon symbols are converted into words at this stage, e.g. a smiley emoticon is converted to ‘happy’ [2]
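The three steps above can be sketched as follows. This is only an illustration under stated assumptions: the input is assumed to be already tagged as (token, tag) pairs, the SLANG and EMOTICONS tables are hypothetical stand-ins for the project's dynamic database, and collapsing repeated letters with a regular expression is a simplification of the vowel-adding conversion the text describes.

```python
import re

# Hypothetical lookup tables; the project's actual database is not given.
SLANG = {"aswm": "awesome", "gr8": "great"}
EMOTICONS = {":)": "happy", ":(": "sad"}

def squeeze_repeats(word):
    """Collapse runs of three or more identical characters into one."""
    return re.sub(r"(.)\1{2,}", r"\1", word)

def preprocess(tagged_tokens):
    """Clean a tweet given as (token, pos_tag) pairs from a POS tagger."""
    cleaned = []
    for token, tag in tagged_tokens:
        if tag == "DT":                  # step 1: drop determiners
            continue
        if token in EMOTICONS:           # step 3: emoticon -> word
            cleaned.append(EMOTICONS[token])
            continue
        word = squeeze_repeats(token.lower())
        cleaned.append(SLANG.get(word, word))  # step 2: slang -> word
    return cleaned

tweet = [("This", "DT"), ("movie", "NN"), ("is", "VBZ"),
         ("aswm", "JJ"), (":)", "SYM"), ("happyyyyyy", "JJ")]
print(preprocess(tweet))
```

Note that squeezing repeats is lossy for words such as ‘goood’, which is one reason a lookup table of known unstructured forms is still needed.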

A. REAL TIME DATA AND FEATURES

The real-time data needed for this project is obtained from the streaming APIs provided by Twitter. For development purposes, Twitter provides streaming APIs that give the developer access to a fraction of the tweets posted at that time, based on a particular keyword. The object about which we want to perform sentiment analysis is submitted to the Twitter APIs, which do further mining and return only the tweets related to that object. Twitter data is generally unstructured, i.e. the use of abbreviations is very high. A tweet consists of at most 140 characters. Twitter also allows the use of emoticons, which are direct indicators of the author’s view on the subject. Tweet messages also include a timestamp and the user name. The timestamp is useful for estimating future trends, a possible application of our project. User location, if available, may also help to gauge trends in different countries. [1]
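As an illustration of the features listed above, the sketch below extracts the text, timestamp, user name and optional location from one tweet delivered as JSON. The field names (`text`, `created_at`, `user.screen_name`, `user.location`) and the timestamp layout are assumed to follow the tweet objects returned by Twitter's v1.1 APIs; the sample tweet and the function name are made up for the example.

```python
import json
from datetime import datetime

# Assumed layout of the "created_at" field, e.g. "Sat Jul 22 10:15:00 +0000 2017".
CREATED_AT_FORMAT = "%a %b %d %H:%M:%S %z %Y"

def parse_tweet(raw_json):
    """Pull out the features used for sentiment analysis from one tweet."""
    tweet = json.loads(raw_json)
    user = tweet.get("user", {})
    return {
        "text": tweet["text"],
        "timestamp": datetime.strptime(tweet["created_at"], CREATED_AT_FORMAT),
        "user_name": user.get("screen_name"),
        # Location is optional; empty strings are treated as missing.
        "location": user.get("location") or None,
    }

# Hypothetical sample record, not real data.
SAMPLE = json.dumps({
    "created_at": "Sat Jul 22 10:15:00 +0000 2017",
    "text": "the new phone is aswm :)",
    "user": {"screen_name": "example_user", "location": ""},
})

record = parse_tweet(SAMPLE)
print(record["timestamp"].isoformat(), record["user_name"], record["location"])
```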

B. PART OF SPEECH

The files that contain the obtained tweets are then part-of-speech tagged using OpenNLP.

C. ROOT FORM

The words in a tweet are converted to their root form to avoid the unwanted extra storage of sentiment values for derived words. A root form dictionary is used to do this; it is kept local to the program because it is used heavily. This lowers the access time and increases the efficiency of the system.
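A minimal sketch of such a lookup, assuming the root form dictionary is held in memory as a plain mapping. The entries and the function name are hypothetical; the project's actual dictionary is not reproduced in the text.

```python
# Hypothetical in-memory root form dictionary (derived word -> root).
ROOT_FORMS = {
    "loved": "love",
    "loving": "love",
    "movies": "movie",
    "happier": "happy",
}

def to_root(word):
    """Return the root form of a word, or the word itself if unknown."""
    word = word.lower()
    return ROOT_FORMS.get(word, word)

print([to_root(w) for w in ["Loved", "movies", "phone"]])
```

Because every derived form maps to one root, the sentiment directory only needs an entry for ‘love’ rather than one for each inflection.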

D. SENTIMENT DIRECTORY

The sentiment directory is built using standard data from SentiWordNet, taking into account all possible usages of a particular word. For example, “good” can be used in many different ways, each sense having its own sentiment value. Therefore, the overall sentiment of “good” is obtained from all its usages and stored in a directory that should again be local to the program (i.e. in primary memory), so that time is not wasted searching for a word in secondary storage.
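One possible way to build such a directory is sketched below. It assumes the tab-separated SentiWordNet layout (POS, ID, PosScore, NegScore, SynsetTerms, Gloss, with `#` comment lines) and combines the senses of a word by a plain average of positive minus negative score. The averaging rule is an assumption, since the text does not say how the usages are combined, and the sample lines carry made-up scores for illustration only.

```python
from collections import defaultdict

def build_sentiment_directory(lines):
    """Map each word to the mean (PosScore - NegScore) over all its senses."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and comments
        fields = line.rstrip("\n").split("\t")
        pos_score, neg_score = float(fields[2]), float(fields[3])
        # SynsetTerms looks like "good#1 fine#2": word, '#', sense number.
        for term in fields[4].split():
            word = term.rsplit("#", 1)[0]
            totals[word] += pos_score - neg_score
            counts[word] += 1
    return {word: totals[word] / counts[word] for word in totals}

# Illustrative lines in the assumed format; the scores are not real data.
SAMPLE = [
    "# POS\tID\tPosScore\tNegScore\tSynsetTerms\tGloss",
    "a\t00000001\t0.75\t0.0\tgood#1\tillustrative gloss",
    "a\t00000002\t0.5\t0.25\tgood#2 fine#1\tillustrative gloss",
    "a\t00000003\t0.0\t0.625\tbad#1\tillustrative gloss",
]

directory = build_sentiment_directory(SAMPLE)
print(directory)
```

The resulting dictionary lives in primary memory, so each word lookup during analysis avoids a read from secondary storage.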

E. MAP-REDUCE ALGORITHM

Faster real-time processing can be obtained by using the cluster architecture set up with Hadoop. The program consists of a chained map-reduce structure that is used to process every tweet, assign a sentiment to each remaining word of the tweet, and then sum these values to decide the final sentiment. Here, special care should be taken with complex sentences, where the sentiment of a phrase matters rather than the sentiment of each word. This can be handled with a dynamic directory of phrases, whose sentiment values can be obtained from the standard PMI-IR algorithm.[1]
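The map and reduce steps described above can be imitated in a single process as follows. This is a sketch of the idea, not the Hadoop API: the mapper emits one (tweet id, word score) pair per word, the sort stands in for Hadoop's shuffle phase, and the reducer sums the scores per tweet. The SENTIMENT table, the function names and the ‘neutral’ label for a zero total are assumptions for illustration, and phrase-level scoring with PMI-IR is left out.

```python
from itertools import groupby
from operator import itemgetter

# Hypothetical in-memory sentiment directory (word -> score).
SENTIMENT = {"good": 0.5, "happy": 0.75, "bad": -0.625, "slow": -0.25}

def mapper(tweet_id, words):
    """Emit (tweet_id, score) for every word; unknown words score 0."""
    for word in words:
        yield tweet_id, SENTIMENT.get(word, 0.0)

def reducer(tweet_id, scores):
    """Sum the word scores of one tweet and derive its polarity."""
    total = sum(scores)
    if total > 0:
        label = "positive"
    elif total < 0:
        label = "negative"
    else:
        label = "neutral"
    return tweet_id, total, label

def run_job(tweets):
    """Run map, shuffle (sort by key) and reduce over {tweet_id: words}."""
    pairs = [kv for tid, words in tweets.items() for kv in mapper(tid, words)]
    pairs.sort(key=itemgetter(0))
    return [reducer(tid, [score for _, score in group])
            for tid, group in groupby(pairs, key=itemgetter(0))]

tweets = {1: ["good", "happy"], 2: ["bad", "slow", "service"], 3: ["service"]}
print(run_job(tweets))
```

On a real cluster the mapper calls run in parallel on different machines, which is where the speed-up over a single machine comes from.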

TIME EFFICIENCY

Time efficiency is an important aspect in which our project scores well. A lower response time has been achieved by holding the data structures as local variables. This avoids the access time of reading from a hard disk. In addition, the use of Hadoop ensures distributed processing, which also lowers the processing time. Hence, overall time efficiency increases because of the above-mentioned factors.

CONCLUSION

Sentiment analysis is a very wide field of research. We have covered some of its important aspects. We plan to improve the algorithm used for determining the sentiment value. The project as it stands can also be extended to other social media sources, such as movie reviews (IMDB reviews) and personal blogs. Emoticons and hashtags are an important consideration in sentiment analysis of social media data. Our project uses emoticons, but hashtags are not used to determine the context of a tweet. Therefore, with the present limitations, the accuracy is found to be 72.27 %.

REFERENCES

[1] Sunil B. Mane, Yashwant Sawant, Saif Kazi, Vaibhav Shinde, “Real Time Sentiment Analysis of Twitter Data Using Hadoop”, College of Engineering, Pune

[2] Theresa Wilson, Johanna Moore, Efthymios Kouloumpis, “Twitter Sentiment Analysis: The Good the Bad and the OMG!”

[3] Apoorv Agarwal, Owen Rambow, Rebecca Passonneau, “Sentiment Analysis of Twitter Data”

[4] Bo Pang, Lillian Lee, “Opinion Mining and Sentiment Analysis”

[5] Andrea Esuli, Fabrizio Sebastiani, “SENTIWORDNET: A Publicly Available Lexical Resource for Opinion Mining”

[6] Bing Liu, Minqing Hu, “Mining and Summarizing Customer Reviews”

[7] Nitin Jindal, Bing Liu, “Opinion Spam and Analysis”

[8] https://www.mytechlogy.com/IT-blogs/15070/big-data-and-iot-predictions-2017/#.WXRiA4iGO00

[9] http://www.dbta.com/Editorial/News-Flashes/10-Big-Data-and-IoT-Predictions-for-2017-115079.aspx
